Jin Zhu (jinz3@andrew.cmu.edu), Zhongyi Tong (ztong@andrew.cmu.edu)

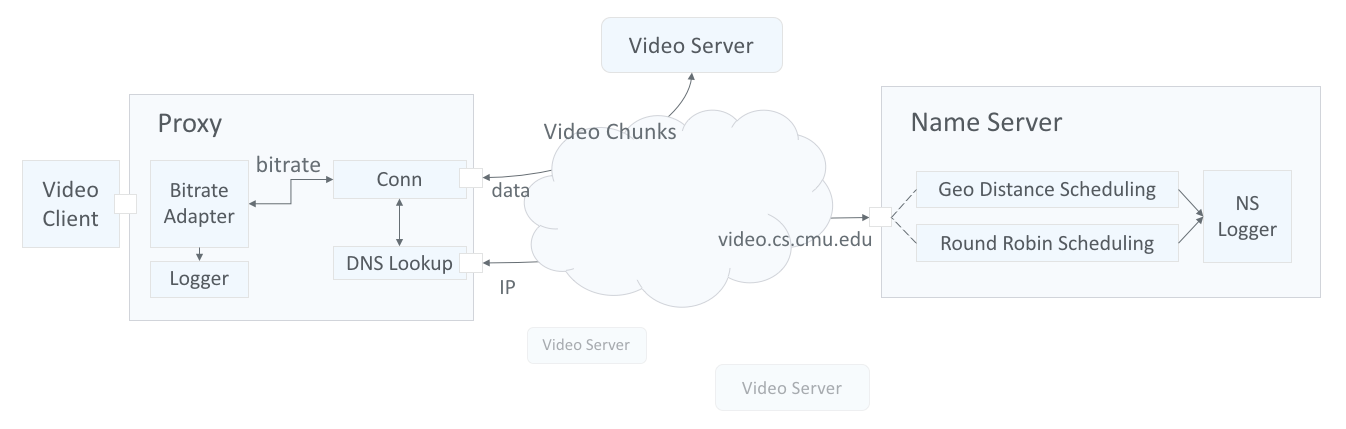

This project consists of two systems:

- Video Client Proxy, which requests video chunks and performs bitrate adaptation for video streaming;

- Name Server, which enables load balancing across the video service's CDN content servers.

Clients trying to stream a video first issue a DNS query to resolve the service’s domain name to the IP address of one of the CDN’s content servers. The CDN’s authoritative name server selects the “best” content server for each client based on either (1) the client’s geographic location (learned from the client's IP address and OSPF link-state advertisements, or LSAs) or (2) the current load on the content servers (approximated using round-robin scheduling), depending on which scheduling algorithm is selected.

Once the client has the IP address for one of the content servers, it begins requesting chunks of the video the user requested. The video is encoded at multiple bitrates; as the client player receives video data, it calculates the throughput of the transfer and requests the highest bitrate the connection can support.

Proxy Container (proxy.c): The container initializes all modules in the proxy and resolves the video server's IP address at startup. It then listens on a given port and concurrently dispatches incoming requests from a single video client.

Design tradeoff: Querying for the best CDN server on every video chunk request could improve load balancing. However, it also introduces undesirable instability, since the measured throughput may change significantly if the proxy switches video servers frequently. As a result, the CDN server is resolved once at proxy startup and stays fixed for the same client.

Connection (conn.c): Handles outgoing connections to the video servers for requesting video chunks.

Bitrate Adapter (bitrate_adapter.c): The adapter estimates the throughput between the proxy and the video server and, based on that estimate, determines the highest-quality (highest-bitrate) video chunk the proxy should request.

Throughput estimation: The proxy notifies the adapter when a request is ready to send and when its response has been completely received. The adapter keeps state for each in-flight request until its response arrives. Dividing the chunk size by the elapsed interval gives a new throughput sample $T_{new}$. To smooth the estimate, an exponentially weighted moving average (EWMA) is used, where $0 \le \alpha \le 1$ controls how strongly the newest sample is weighted: $$ T_{current} = \alpha T_{new} + (1 - \alpha) T_{current} $$
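
The two notifications and the EWMA update can be sketched in C as follows. This is a minimal illustration under stated assumptions, not the code in bitrate_adapter.c: the names `inflight_req_t`, `tput_estimator_t`, `tput_on_send`, and `tput_on_complete` are hypothetical, times are assumed to be in seconds, throughput in Kbps, and seeding $T_{current}$ with the first sample is an assumption.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical state kept for one in-flight chunk request. */
typedef struct {
    double sent_at;        /* seconds; recorded when the request is ready to send */
} inflight_req_t;

/* Hypothetical smoothed-throughput state (Kbps assumed). */
typedef struct {
    double alpha;          /* EWMA weight given to the newest sample */
    double t_current;      /* smoothed throughput T_current */
    int has_sample;        /* 0 until the first chunk completes */
} tput_estimator_t;

void tput_init(tput_estimator_t *e, double alpha) {
    assert(alpha > 0.0 && alpha <= 1.0);   /* alpha = 0 would never update */
    e->alpha = alpha;
    e->t_current = 0.0;
    e->has_sample = 0;
}

/* Notification 1: the request is about to be sent. */
void tput_on_send(inflight_req_t *req, double now) {
    req->sent_at = now;
}

/* Notification 2: the response has been completely received.
   Computes T_new = chunk size / interval and folds it into T_current. */
double tput_on_complete(tput_estimator_t *e, const inflight_req_t *req,
                        double now, size_t chunk_bytes) {
    double interval = now - req->sent_at;
    if (interval <= 0.0)
        return e->t_current;               /* ignore degenerate timings */
    double t_new = (chunk_bytes * 8.0 / 1000.0) / interval;   /* bytes -> Kbps */
    if (!e->has_sample) {
        e->t_current = t_new;              /* assumption: seed with first sample */
        e->has_sample = 1;
    } else {
        e->t_current = e->alpha * t_new + (1.0 - e->alpha) * e->t_current;
    }
    return e->t_current;
}
```

For example, with α = 0.5, a first chunk of 125,000 bytes received in 1 s gives 1000 Kbps; a second chunk of 250,000 bytes in 1 s (a 2000 Kbps sample) moves the estimate to 1500 Kbps. A smaller α reacts more slowly to throughput changes but smooths out short spikes.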

Bitrate selection: Before any video chunks are requested, the adapter fetches the list of all bitrates the video server supports. A bitrate is allowed only if the average throughput is at least 1.5 times that bitrate, and the adapter rewrites the bitrate in each outgoing request to the highest allowed one.
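
The 1.5× selection rule can be sketched as a single function. The name `select_bitrate`, the use of Kbps for both bitrates and throughput, and falling back to the lowest bitrate when none qualifies are assumptions for illustration, not details taken from bitrate_adapter.c.

```c
#include <assert.h>
#include <stddef.h>

/* Returns the largest bitrate b with avg_tput >= 1.5 * b.
   Bitrates and throughput share units (Kbps assumed); the list need not be sorted.
   If no bitrate qualifies, falls back to the lowest one (assumption). */
int select_bitrate(const int *bitrates, size_t n, double avg_tput) {
    assert(bitrates != NULL && n > 0);
    int lowest = bitrates[0];
    int best = -1;                 /* -1: nothing qualified yet (bitrates are positive) */
    for (size_t i = 0; i < n; i++) {
        if (bitrates[i] < lowest)
            lowest = bitrates[i];
        if (avg_tput >= 1.5 * bitrates[i] && bitrates[i] > best)
            best = bitrates[i];
    }
    return best != -1 ? best : lowest;
}
```

With bitrates {10, 100, 500, 1000} and an average throughput of 1000 Kbps, 1.5 × 1000 = 1500 exceeds the estimate, so 500 is chosen; the 1.5 factor leaves headroom so that small throughput dips do not immediately stall playback.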

DNS Lookup (mydns.c): The DNS resolver looks up the video CDN's domain name and resolves it to a content server's IP address.
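
The lookup can be illustrated with the query side of the standard DNS wire format (RFC 1035): a 12-byte header followed by one question whose QNAME is encoded as length-prefixed labels, then QTYPE and QCLASS. This is a hedged sketch rather than mydns.c itself; `dns_build_query` is a hypothetical name, and leaving all header flags zero (standard query, recursion not desired) is an assumption about what the resolver sends.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Writes a DNS query for the A record of `name` into buf (cap bytes).
   Returns the packet length, or -1 on a malformed name or short buffer. */
int dns_build_query(uint16_t id, const char *name, uint8_t *buf, size_t cap) {
    assert(name != NULL && buf != NULL);
    if (cap < 12)
        return -1;
    memset(buf, 0, 12);            /* header: flags 0 = standard query, RD = 0 (assumed) */
    buf[0] = (uint8_t)(id >> 8);   /* ID, big-endian */
    buf[1] = (uint8_t)(id & 0xff);
    buf[5] = 1;                    /* QDCOUNT = 1 */

    size_t pos = 12;
    const char *p = name;
    while (*p) {                   /* "a.b.c" -> 1a 1b 1c */
        const char *dot = strchr(p, '.');
        size_t len = dot ? (size_t)(dot - p) : strlen(p);
        if (len == 0 || len > 63 || pos + 1 + len >= cap)
            return -1;             /* empty label, label too long, or no room */
        buf[pos++] = (uint8_t)len;
        memcpy(buf + pos, p, len);
        pos += len;
        p += len;
        if (*p == '.')
            p++;
    }
    if (pos + 5 > cap)
        return -1;
    buf[pos++] = 0;                    /* root label terminates QNAME */
    buf[pos++] = 0; buf[pos++] = 1;    /* QTYPE = 1 (A) */
    buf[pos++] = 0; buf[pos++] = 1;    /* QCLASS = 1 (IN) */
    return (int)pos;
}
```

For instance, the hypothetical name `video.cs.cmu.edu` is encoded as 5video2cs3cmu3edu0 in the question section, giving a 34-byte query that would be sent over UDP to the name server.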

Logger (logger.c): Logs proxy traffic information such as bitrates and timestamps.

- NS Container (name_server.c): The container initializes the selected scheduling algorithm by reading all available CDN servers from a server list. If Geographic Distance scheduling is selected, it also builds a graph of the network from the LSAs.

- Round Robin Scheduling (round_robin.c): Builds a circular linked list of all servers and returns the next server for each query.

- Geographic Distance Scheduling (lsa.c): Builds an adjacency matrix for all nodes in the network and uses Dijkstra's algorithm to return the server with the shortest path to the client for each query.

- NS Logger (ns_logger.c): Logs resolved CDNs for each query.
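
The two scheduling policies can be sketched together in C: a round-robin cursor over a circular linked list, and Dijkstra's algorithm over an adjacency matrix. This is an illustration, not the code in round_robin.c or lsa.c. The names `rr_build`, `rr_next`, `geo_nearest_server`, and `MAX_NODES` are hypothetical, and the sketch assumes every node learned from the LSAs has already been mapped to an integer index and that each link costs 1 (hop count) unless weighted.

```c
#include <assert.h>
#include <limits.h>
#include <stdlib.h>

#define MAX_NODES 64   /* hypothetical upper bound on nodes in the topology */

/* ---- Round robin: circular linked list of server IPs ---- */
typedef struct rr_node {
    const char *ip;
    struct rr_node *next;
} rr_node_t;

/* Links ips[0..n-1] into a cycle; the returned head is also the pointer to free(). */
rr_node_t *rr_build(const char **ips, int n) {
    if (n <= 0)
        return NULL;
    rr_node_t *nodes = malloc(sizeof(rr_node_t) * (size_t)n);
    if (nodes == NULL)
        return NULL;
    for (int i = 0; i < n; i++) {
        nodes[i].ip = ips[i];
        nodes[i].next = &nodes[(i + 1) % n];   /* last node wraps to the first */
    }
    return &nodes[0];
}

/* Returns the server under the cursor, then advances the cursor. */
const char *rr_next(rr_node_t **cursor) {
    assert(cursor != NULL && *cursor != NULL);
    const char *ip = (*cursor)->ip;
    *cursor = (*cursor)->next;
    return ip;
}

/* ---- Geographic distance: Dijkstra over an adjacency matrix ---- */
/* adj[u][v] > 0 is the cost of link u->v; 0 means no link.
   is_server[i] != 0 marks content-server nodes.
   Returns the index of the server closest to src, or -1 if none is reachable. */
int geo_nearest_server(int n, int adj[][MAX_NODES], const int *is_server, int src) {
    assert(n > 0 && n <= MAX_NODES && src >= 0 && src < n);
    int dist[MAX_NODES];
    int done[MAX_NODES] = {0};
    for (int i = 0; i < n; i++)
        dist[i] = INT_MAX;
    dist[src] = 0;
    for (int iter = 0; iter < n; iter++) {
        int u = -1;                            /* closest unsettled node */
        for (int i = 0; i < n; i++)
            if (!done[i] && dist[i] != INT_MAX && (u == -1 || dist[i] < dist[u]))
                u = i;
        if (u == -1)
            break;                             /* remaining nodes are unreachable */
        done[u] = 1;
        if (is_server[u])
            return u;                          /* first server settled is the nearest */
        for (int v = 0; v < n; v++)
            if (adj[u][v] > 0 && !done[v] && dist[u] + adj[u][v] < dist[v])
                dist[v] = dist[u] + adj[u][v];
    }
    return -1;
}
```

Stopping as soon as a server is settled is safe because Dijkstra's algorithm settles nodes in nondecreasing order of distance. The O(V²) scan over the matrix is simple and adequate for small topologies; a heap-based version would only pay off on much larger graphs.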

Utility Functions (utils.c/ns_util.c): Useful helper functions.

See writeup.pdf.