A quick demo using a hexagon grid with a centroid fill: a point at the center of each hexagon, sized using raster values from the image layer.
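The demo itself has no code. As a rough, tool-independent illustration of the idea, here is a minimal sketch that computes pointy-top hexagon centers over an extent and sizes a marker at each one from a 2D raster array. The function names, the pixel lookup (the pixel containing the point, row 0 at the top edge), the 0-255 value range and the linear size scaling are all assumptions for illustration.

```python
import math

def hex_centroids(xmin, ymin, xmax, ymax, size):
    """Return (x, y) centers of pointy-top hexagons with circumradius `size`
    tiling the extent; every other row is shifted by half a hexagon width."""
    width = math.sqrt(3) * size   # horizontal spacing between centers
    row_step = 1.5 * size         # vertical spacing between rows
    centers = []
    row, y = 0, ymin
    while y <= ymax:
        x = xmin + (width / 2 if row % 2 else 0.0)
        while x <= xmax:
            centers.append((x, y))
            x += width
        row += 1
        y = ymin + row * row_step  # recompute rather than accumulate float error
    return centers

def marker_size(raster, x, y, xmin, ymax, pixel_size, min_size=1.0, max_size=6.0):
    """Sample the pixel containing (x, y), with row 0 at the top edge (ymax),
    and scale its value (assumed 0-255) linearly into [min_size, max_size].
    Points outside the raster are clamped to the nearest edge pixel."""
    col = int((x - xmin) // pixel_size)
    row = int((ymax - y) // pixel_size)
    row = min(max(row, 0), len(raster) - 1)
    col = min(max(col, 0), len(raster[0]) - 1)
    return min_size + (max_size - min_size) * raster[row][col] / 255
```

Computing sizes per centroid (rather than per hexagon polygon) mirrors what a centroid fill draws: one marker per cell.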

```python
# this script goes through a list of files from Excel "data from folder"
# and computes a hash of the file contents (to be used for deduplication)
import csv
import os
import hashlib
import time

# columns: Name,Extension,Date accessed,Date modified,Date created,Folder Path,Hash
```
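The snippet stops after the imports. A minimal sketch of how the hashing step might look, assuming the Excel list is saved as a CSV with the columns above, that `Name` includes the file extension (as Excel's folder listing does), and that SHA-256 is an acceptable hash (the original doesn't say which algorithm it uses). The function names and chunk size are hypothetical.

```python
import csv
import hashlib
import os

def file_hash(path, chunk_size=1 << 20):
    """SHA-256 hex digest of a file, read in chunks so large files
    don't have to fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()

def add_hashes(in_csv, out_csv):
    """Copy in_csv to out_csv, filling the Hash column for every row.
    Missing files get an empty hash. Returns the list of row dicts."""
    with open(in_csv, newline="", encoding="utf-8") as src:
        reader = csv.DictReader(src)
        fieldnames = list(reader.fieldnames)
        rows = list(reader)
    if "Hash" not in fieldnames:
        fieldnames.append("Hash")
    for row in rows:
        path = os.path.join(row["Folder Path"], row["Name"])
        row["Hash"] = file_hash(path) if os.path.isfile(path) else ""
    with open(out_csv, "w", newline="", encoding="utf-8") as dst:
        writer = csv.DictWriter(dst, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(rows)
    return rows

def find_duplicates(rows):
    """Group file paths by hash, keeping only hashes shared by 2+ files."""
    groups = {}
    for row in rows:
        if row["Hash"]:
            path = os.path.join(row["Folder Path"], row["Name"])
            groups.setdefault(row["Hash"], []).append(path)
    return {h: paths for h, paths in groups.items() if len(paths) > 1}
```

Reading in chunks keeps memory flat regardless of file size, which matters when the folder listing includes large media files.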

The same image (an illustrated book cover) was scanned three times at 300 dpi on a flatbed scanner (EPSON WF-2630).

Analysis of the variation across the three scans:

| band | min | max | mean | std dev |
|---|---|---|---|---|
| red | 0 | 36 | 4.47 | 2.59 |
| green | 0 | 36 | 4.41 | 2.68 |
| blue | 0 | 37 | 4.72 | 2.95 |
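The text doesn't say exactly how the variation was measured. One plausible reading, assumed here, is that each row summarizes the per-pixel range (max minus min) across the three scans for that band. A minimal NumPy sketch of that calculation, assuming the scans are already aligned pixel-for-pixel as arrays of shape (rows, cols, bands); the function name is hypothetical.

```python
import numpy as np

def scan_variation(scans):
    """Summarize per-pixel variation across repeated, aligned scans.

    scans: sequence of arrays, each (rows, cols, bands).
    Returns {band_index: (min, max, mean, std)} of the per-pixel
    range (max - min) across the scans.
    """
    stack = np.stack(scans)                             # (n, rows, cols, bands)
    spread = stack.max(axis=0) - stack.min(axis=0)      # (rows, cols, bands)
    stats = {}
    for b in range(spread.shape[-1]):
        band = spread[..., b]
        stats[b] = (int(band.min()), int(band.max()),
                    float(band.mean()), float(band.std()))
    return stats
```

Subtracting the minimum from the maximum never goes negative, so the uint8 scan values need no widening.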

```python
# this script goes through a directory of tif files and a shapefile of cities
# and reports which city points are contained within each tif
import os
import fiona
import rasterio
import shapely
from pyproj import Transformer

tifpath = "path/to/tiffs"
```
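The core test in that script is whether each city point falls inside a raster's footprint. A minimal sketch of that step, assuming the raster bounds come from rasterio's `dataset.bounds` (left, bottom, right, top in the raster's CRS) and that city coordinates have already been reprojected into that CRS, e.g. with pyproj's `Transformer.from_crs(..., always_xy=True)`. The function name, the EPSG:4326 source CRS and the `cities` list in the commented outline are hypothetical. A bounding-box test ignores nodata areas and, for rotated rasters, tests the envelope rather than the true footprint.

```python
def cities_in_bounds(bounds, cities):
    """Return names of cities whose (x, y) lies within bounds.

    bounds: (left, bottom, right, top) in the raster's CRS,
            e.g. the tuple from rasterio's dataset.bounds.
    cities: iterable of (name, x, y), already in the raster's CRS.
    Points exactly on an edge count as inside.
    """
    left, bottom, right, top = bounds
    return [name for name, x, y in cities
            if left <= x <= right and bottom <= y <= top]

# Hypothetical outline of the surrounding loop (needs rasterio and pyproj);
# `cities` would be (name, lon, lat) tuples read from the shapefile:
# for fname in sorted(os.listdir(tifpath)):
#     if fname.lower().endswith((".tif", ".tiff")):
#         with rasterio.open(os.path.join(tifpath, fname)) as ds:
#             t = Transformer.from_crs("EPSG:4326", ds.crs, always_xy=True)
#             pts = [(n, *t.transform(lon, lat)) for n, lon, lat in cities]
#             print(fname, cities_in_bounds(ds.bounds, pts))
```

Reprojecting the points into each raster's CRS (rather than the raster bounds into the points' CRS) keeps the bounds an exact axis-aligned rectangle.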

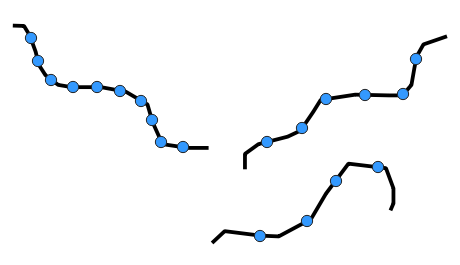

The limiting factor in the speed of the Iso-areas tool seems to be the number of starting points. I've tried a number of variations, aiming to reduce the number of points while keeping enough of them that the analysis is still accurate. After several failed attempts, I think I've come up with a workflow that keeps just the points along the park perimeters that are closest to the road network. Here's the general workflow, assuming we are starting with 2 layers, "streets" and "parks". I'd suggest creating temporary outputs for each of these steps, and then making only the final result permanent.
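The actual processing steps aren't included here, but the core idea (keep only the park-perimeter points nearest to the street network) can be sketched in plain Python. This illustrates the idea under simplifying assumptions, not the workflow itself: parks are lists of polygon vertices, perimeters are densified at a fixed spacing, streets are represented by their vertices, and distances are straight-line. All names are hypothetical.

```python
import math

def densify_ring(ring, spacing):
    """Return points every `spacing` units (or closer) along a closed ring."""
    pts = []
    for (x1, y1), (x2, y2) in zip(ring, ring[1:] + ring[:1]):
        seg = math.hypot(x2 - x1, y2 - y1)
        if seg == 0:
            continue  # skip a repeated closing vertex
        n = math.ceil(seg / spacing)
        for i in range(n):
            t = i / n
            pts.append((x1 + t * (x2 - x1), y1 + t * (y2 - y1)))
    return pts

def entry_points(parks, street_vertices, spacing):
    """For each street vertex, find the nearest perimeter point over all parks,
    and return the distinct points found: the candidate starting points."""
    perimeter = [p for ring in parks for p in densify_ring(ring, spacing)]
    keep = set()
    for sx, sy in street_vertices:
        keep.add(min(perimeter, key=lambda p: math.hypot(p[0] - sx, p[1] - sy)))
    return sorted(keep)
```

Many street vertices usually map to the same few perimeter points, which is what shrinks the set of starting points.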

Realistically, especially given the inherent noise in the original image, I'd settle for lossy compression with COMPRESS=JPEG JPEG_QUALITY=90, which is fairly fast and reduces the file size to 16% of the original.

But if lossless compression is a hard requirement, I'd probably go with COMPRESS=LZW PREDICTOR=2. However, I would first want to verify that any downstream tools support this sort of compression.

UPDATE: When saving with JPEG compression, further speed and size improvements come from adding PHOTOMETRIC=YCBCR, which stores the image as luminance and chroma (YCbCr) rather than RGB, a representation that JPEG compresses even better. I've added new rows to the table for YCBCR, as well as for .jp2 formats.
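These settings are GeoTIFF creation options, which `gdal_translate` takes via repeated `-co` flags. A minimal sketch that builds those command lines in Python; the preset names and helper functions are hypothetical, while `COMPRESS`, `JPEG_QUALITY`, `PHOTOMETRIC` and `PREDICTOR` are the GTiff driver's own option names. Actually running the command requires GDAL to be installed.

```python
import subprocess

# Hypothetical presets for the options discussed above.
PRESETS = {
    "jpeg": ["COMPRESS=JPEG", "JPEG_QUALITY=90"],
    "jpeg_ycbcr": ["COMPRESS=JPEG", "JPEG_QUALITY=90", "PHOTOMETRIC=YCBCR"],
    "lzw": ["COMPRESS=LZW", "PREDICTOR=2"],
}

def translate_cmd(src, dst, preset):
    """Build a gdal_translate argument list with one -co per creation option."""
    cmd = ["gdal_translate"]
    for opt in PRESETS[preset]:
        cmd += ["-co", opt]
    return cmd + [src, dst]

def compress(src, dst, preset):
    """Run gdal_translate; raises CalledProcessError if it exits non-zero."""
    return subprocess.run(translate_cmd(src, dst, preset), check=True)
```

Note that PHOTOMETRIC=YCBCR only applies to 3-band, 8-bit RGB images written with JPEG compression.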

```html
<html>
<head>
<title>Cornell Theses and Dissertations by Academic Discipline</title>
<meta http-equiv='Content-type' content='text/html; charset=UTF-8'>
<meta name='author' content='Keith Jenkins'>
<meta name='dateModified' content='2022-01-11'>
<style>
body { font-family: sans-serif }
h1 { text-align:center ; background: #b31b1b ; color: #fff ; padding: 0.5em ; margin: 0 }
td { white-space: nowrap }
```

See the video demo at https://youtu.be/t-pb5-1Q_Qg

The files here were used to convert UrbanWatch rasters from RGB images to numeric rasters with simple landcover values 0-9, as follows:

| Value | Category | Color |
|---|---|---|
| 0 | No Data | Black |
| 1 | Building | Red |
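Only the first two classes are listed here, and the exact RGB triplets aren't given. A minimal NumPy sketch of the color-to-value conversion, assuming pure black (0, 0, 0) for No Data and pure red (255, 0, 0) for Building; the remaining entries of the hypothetical `COLOR_TO_CLASS` table would have to come from the actual UrbanWatch legend. Treating unmatched colors as 0 (No Data) is also an assumption.

```python
import numpy as np

# Assumed RGB values; extend with the other UrbanWatch classes (2-9).
COLOR_TO_CLASS = {
    (0, 0, 0): 0,     # No Data (black)
    (255, 0, 0): 1,   # Building (red)
}

def rgb_to_classes(rgb):
    """Convert an (rows, cols, 3) RGB array to a (rows, cols) uint8 array
    of class values. Colors not in COLOR_TO_CLASS become 0 (No Data)."""
    out = np.zeros(rgb.shape[:2], dtype=np.uint8)
    for color, value in COLOR_TO_CLASS.items():
        mask = np.all(rgb == np.array(color, dtype=rgb.dtype), axis=-1)
        out[mask] = value
    return out
```

An exact color match is only safe for losslessly stored images; JPEG artifacts would shift colors and leave pixels unmatched.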

See the video demo at https://youtu.be/mdYnBFYqXpg

The files here can be used to convert UrbanWatch rasters from RGB images to numeric rasters with simple landcover values 0-9, as follows:

| Value | Category | Color |
|---|---|---|
| 0 | No Data | Black |
| 1 | Building | Red |