- Labels Detection

- Faces Detection

- Faces Comparison

- Faces Indexing

- Faces Search


| import boto3 | |

| BUCKET = "amazon-rekognition" | |

| KEY = "test.jpg" | |

| def detect_labels(bucket, key, max_labels=10, min_confidence=90, region="eu-west-1"): | |

| rekognition = boto3.client("rekognition", region) | |

| response = rekognition.detect_labels( | |

| Image={ | |

| "S3Object": { | |

| "Bucket": bucket, | |

| "Name": key, | |

| } | |

| }, | |

| MaxLabels=max_labels, | |

| MinConfidence=min_confidence, | |

| ) | |

| return response['Labels'] | |

| for label in detect_labels(BUCKET, KEY): | |

| print "{Name} - {Confidence}%".format(**label) | |

| """ | |

| Expected output: | |

| People - 99.2436447144% | |

| Person - 99.2436447144% | |

| Human - 99.2351226807% | |

| Clothing - 96.7797698975% | |

| Suit - 96.7797698975% | |

| """ |

| import boto3 | |

| BUCKET = "amazon-rekognition" | |

| KEY = "test.jpg" | |

| FEATURES_BLACKLIST = ("Landmarks", "Emotions", "Pose", "Quality", "BoundingBox", "Confidence") | |

| def detect_faces(bucket, key, attributes=['ALL'], region="eu-west-1"): | |

| rekognition = boto3.client("rekognition", region) | |

| response = rekognition.detect_faces( | |

| Image={ | |

| "S3Object": { | |

| "Bucket": bucket, | |

| "Name": key, | |

| } | |

| }, | |

| Attributes=attributes, | |

| ) | |

| return response['FaceDetails'] | |

| for face in detect_faces(BUCKET, KEY): | |

| print "Face ({Confidence}%)".format(**face) | |

| # emotions | |

| for emotion in face['Emotions']: | |

| print " {Type} : {Confidence}%".format(**emotion) | |

| # quality | |

| for quality, value in face['Quality'].iteritems(): | |

| print " {quality} : {value}".format(quality=quality, value=value) | |

| # facial features | |

| for feature, data in face.iteritems(): | |

| if feature not in FEATURES_BLACKLIST: | |

| print " {feature}({data[Value]}) : {data[Confidence]}%".format(feature=feature, data=data) | |

| """ | |

| Expected output: | |

| Face (99.945602417%) | |

| SAD : 14.6038293839% | |

| HAPPY : 12.3668470383% | |

| DISGUSTED : 3.81404161453% | |

| Sharpness : 10.0 | |

| Brightness : 31.4071826935 | |

| Eyeglasses(False) : 99.990234375% | |

| Sunglasses(False) : 99.9500656128% | |

| Gender(Male) : 99.9291687012% | |

| EyesOpen(True) : 99.9609146118% | |

| Smile(False) : 99.8329467773% | |

| MouthOpen(False) : 98.3746566772% | |

| Mustache(False) : 98.7549591064% | |

| Beard(False) : 92.758682251% | |

| """ |

| import boto3 | |

| BUCKET = "amazon-rekognition" | |

| KEY_SOURCE = "test.jpg" | |

| KEY_TARGET = "target.jpg" | |

| def compare_faces(bucket, key, bucket_target, key_target, threshold=80, region="eu-west-1"): | |

| rekognition = boto3.client("rekognition", region) | |

| response = rekognition.compare_faces( | |

| SourceImage={ | |

| "S3Object": { | |

| "Bucket": bucket, | |

| "Name": key, | |

| } | |

| }, | |

| TargetImage={ | |

| "S3Object": { | |

| "Bucket": bucket_target, | |

| "Name": key_target, | |

| } | |

| }, | |

| SimilarityThreshold=threshold, | |

| ) | |

| return response['SourceImageFace'], response['FaceMatches'] | |

| source_face, matches = compare_faces(BUCKET, KEY_SOURCE, BUCKET, KEY_TARGET) | |

| # the main source face | |

| print "Source Face ({Confidence}%)".format(**source_face) | |

| # one match for each target face | |

| for match in matches: | |

| print "Target Face ({Confidence}%)".format(**match['Face']) | |

| print " Similarity : {}%".format(match['Similarity']) | |

| """ | |

| Expected output: | |

| Source Face (99.945602417%) | |

| Target Face (99.9963378906%) | |

| Similarity : 89.0% | |

| """ |

| import boto3 | |

| BUCKET = "amazon-rekognition" | |

| KEY = "test.jpg" | |

| IMAGE_ID = KEY # S3 key as ImageId | |

| COLLECTION = "my-collection-id" | |

| # Note: you have to create the collection first! | |

| # rekognition.create_collection(CollectionId=COLLECTION) | |

| def index_faces(bucket, key, collection_id, image_id=None, attributes=(), region="eu-west-1"): | |

| rekognition = boto3.client("rekognition", region) | |

| response = rekognition.index_faces( | |

| Image={ | |

| "S3Object": { | |

| "Bucket": bucket, | |

| "Name": key, | |

| } | |

| }, | |

| CollectionId=collection_id, | |

| ExternalImageId=image_id, | |

| DetectionAttributes=attributes, | |

| ) | |

| return response['FaceRecords'] | |

| for record in index_faces(BUCKET, KEY, COLLECTION, IMAGE_ID): | |

| face = record['Face'] | |

| # details = record['FaceDetail'] | |

| print "Face ({}%)".format(face['Confidence']) | |

| print " FaceId: {}".format(face['FaceId']) | |

| print " ImageId: {}".format(face['ImageId']) | |

| """ | |

| Expected output: | |

| Face (99.945602417%) | |

| FaceId: dc090f86-48a4-5f09-905f-44e97fb1d455 | |

| ImageId: f974c8d3-7519-5796-a08d-b96e0f2fc242 | |

| """ |

| import boto3 | |

| BUCKET = "amazon-rekognition" | |

| KEY = "search.jpg" | |

| COLLECTION = "my-collection-id" | |

| def search_faces_by_image(bucket, key, collection_id, threshold=80, region="eu-west-1"): | |

| rekognition = boto3.client("rekognition", region) | |

| response = rekognition.search_faces_by_image( | |

| Image={ | |

| "S3Object": { | |

| "Bucket": bucket, | |

| "Name": key, | |

| } | |

| }, | |

| CollectionId=collection_id, | |

| FaceMatchThreshold=threshold, | |

| ) | |

| return response['FaceMatches'] | |

| for record in search_faces_by_image(BUCKET, KEY, COLLECTION): | |

| face = record['Face'] | |

| print "Matched Face ({}%)".format(record['Similarity']) | |

| print " FaceId : {}".format(face['FaceId']) | |

| print " ImageId : {}".format(face['ExternalImageId']) | |

| """ | |

| Expected output: | |

| Matched Face (96.6647949219%) | |

| FaceId : dc090f86-48a4-5f09-905f-44e97fb1d455 | |

| ImageId : test.jpg | |

| """ |

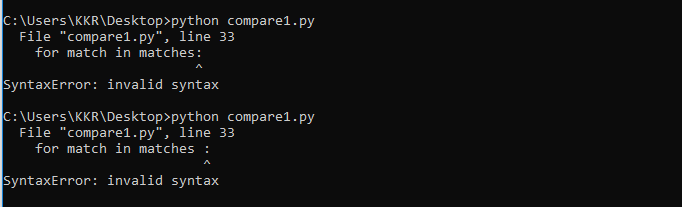

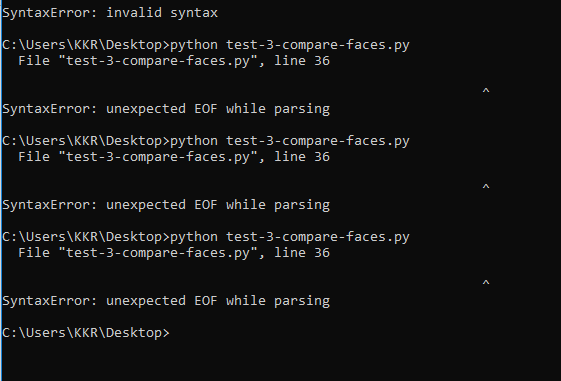

Hi @koustubha26,

The second and third errors seem to be just Python syntax errors, but they look fixed in the code screenshot you shared.

The first error (NoCredentialsError) happens because you haven't properly configured the AWS CLI (or boto3). You could either provide AWS credentials to the boto3 client (discouraged, but check this out) or properly configure environment variables (OK) or named profiles (better!).
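As a rough way to see which of those options your machine currently exposes, here is a minimal sketch. It only checks the documented environment variables (`AWS_ACCESS_KEY_ID`, `AWS_SECRET_ACCESS_KEY`, `AWS_PROFILE`) and the default `~/.aws/credentials` file; the helper name `visible_credential_sources` is made up for this example, and it does not verify that the credentials are valid or cover every source boto3 can use.

```python
import os
from pathlib import Path


def visible_credential_sources(env=None, home=None):
    """Return a list describing credential sources visible to this process.

    Hypothetical helper: it inspects only the standard environment variables
    and the default shared credentials file, nothing else.
    """
    env = os.environ if env is None else env
    home = Path.home() if home is None else Path(home)
    sources = []
    # Option 1: access keys exported as environment variables.
    if env.get("AWS_ACCESS_KEY_ID") and env.get("AWS_SECRET_ACCESS_KEY"):
        sources.append("environment variables")
    # Option 2: a named profile selected through AWS_PROFILE.
    if env.get("AWS_PROFILE"):
        sources.append("named profile: " + env["AWS_PROFILE"])
    # The file written by `aws configure` (default location assumed).
    if (home / ".aws" / "credentials").is_file():
        sources.append("shared credentials file")
    return sources


if __name__ == "__main__":
    found = visible_credential_sources()
    print(found or "No credentials found: expect NoCredentialsError")
```

If the list comes back empty, boto3 will most likely raise NoCredentialsError on the first API call.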

Thanks a lot for your guidance. I am able to compare images now.

Actually, I am working on a Smart Doorbell project. It's not a real-time project, only a simulation. Initially, I had to compare two images to check whether the guest is valid or not. After the comparison, a notification has to be sent to the owner of the house by email. Before that, I have to store a database of existing images and then compare them with the image of the guest.

Could you kindly help me in achieving two tasks:

- Comparing the image of the guest with all the images existing in the S3 database.

- After comparison, a notification has to be sent to the owner of the house through email or WhatsApp whichever is convenient.

Kindly provide guidance on this as early as possible, as I have a course project to present on Monday and I am occupied with assignments during the weekend. Hope you understand.

Thanking you in advance.

Hi @koustubha26, I'm glad we managed to solve your problem.

- You can use Amazon Rekognition's IndexFaces and SearchFacesByImage APIs. The first one will store and index your dataset of faces (no need to manually use S3). The second will compare a given image to the currently indexed dataset (that could evolve over time).

- I would recommend using Amazon SNS to send simple email notifications. If you need more complex email templates, I would use a third-party service such as Mailchimp or Mailgun (which provide RESTful APIs so you can send transactional emails from your Lambda Functions).
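To make the two suggestions above a bit more concrete, here is a minimal sketch that wires `search_faces_by_image` to an SNS `publish` call. The function names, the message wording, and the `topic_arn` parameter are assumptions made for this example; it also assumes the faces were already indexed with `index_faces` and that the owner's email address is subscribed to the SNS topic. Note that Rekognition raises an error when the guest image contains no face, which this sketch does not handle.

```python
def find_known_faces(rekognition, bucket, key, collection_id, threshold=80):
    """Search the collection for faces matching the guest image stored in S3."""
    response = rekognition.search_faces_by_image(
        CollectionId=collection_id,
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        FaceMatchThreshold=threshold,
    )
    return response["FaceMatches"]


def notify_owner(sns, topic_arn, guest_key, matches):
    """Publish a short message to an SNS topic and return the message text."""
    if matches:
        best = max(matches, key=lambda m: m["Similarity"])
        # ExternalImageId is only present if it was set when indexing.
        name = best["Face"].get("ExternalImageId", best["Face"]["FaceId"])
        message = "Known guest at the door: {} ({:.1f}% similar)".format(
            name, best["Similarity"]
        )
    else:
        message = "Unknown guest at the door (image: {})".format(guest_key)
    sns.publish(TopicArn=topic_arn, Subject="Doorbell notification", Message=message)
    return message


def check_guest(bucket, key, collection_id, topic_arn, region="eu-west-1"):
    """Wire both steps together with real boto3 clients."""
    import boto3  # imported here so the helpers above can be tried with stubs

    rekognition = boto3.client("rekognition", region_name=region)
    sns = boto3.client("sns", region_name=region)
    matches = find_known_faces(rekognition, bucket, key, collection_id)
    return notify_owner(sns, topic_arn, key, matches)
```

Passing the clients in as arguments keeps the two helpers easy to try out with stub objects before touching a real AWS account.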

Anyways, please feel free to join this Slack so we can chat there in case you'll need further guidance (Gists are not really meant to become personal forums).

Hi @koustubha26, I understand you've been assigned very little time for such a complex problem.

Unfortunately, I am not going to write code on your behalf, and I personally think it would be borderline cheating. My honest and genuine suggestion is to learn as much as you can until Monday, try to get there the hard way, and then simply show the code you've got so far. Even if not perfect, you'll be able to explain it and be proud of it :)

Hi alexcasalboni, in the "indexfaces" program, could you please tell me what is meant by a collection and how to create one? Can I consider a collection as equivalent to a database? I will at least try to show the indexfaces code during the presentation.

How do I pass the image name when S3 triggers a Lambda function?

@Dingjian412 When you trigger Lambda, the event has all the details of the object. You can extract the name from it.
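A minimal sketch of that extraction, assuming the standard S3 notification event layout (`Records[].s3.bucket.name` and `Records[].s3.object.key`). The helper name `s3_objects_from_event` is made up for this example, and the handler only returns the pairs instead of calling Rekognition.

```python
from urllib.parse import unquote_plus


def s3_objects_from_event(event):
    """Yield (bucket, key) pairs from an S3 notification event."""
    for record in event.get("Records", []):
        s3 = record["s3"]
        # Object keys arrive URL-encoded (spaces become '+'), so decode them.
        yield s3["bucket"]["name"], unquote_plus(s3["object"]["key"])


def lambda_handler(event, context):
    # `event` is a dict and `context` is a LambdaContext object; neither can
    # be passed directly as the Bucket/Name strings Rekognition expects.
    return [
        {"bucket": bucket, "key": key}
        for bucket, key in s3_objects_from_event(event)
    ]
```

Each returned bucket/key pair can then be handed to functions such as `detect_faces(bucket, key)` from the gist.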

Thanks @alexcasalboni for this!

Hi @maithily1905,

The problem is that % indicates a placeholder (e.g. %s for strings, %d for numbers, etc.). If you want to print the % symbol you need to escape it as %% :)
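A small illustration of the escaping rule, using a label and confidence value from the gist's sample output (the two-decimal rounding is just a choice for this example):

```python
confidence = 99.2436447144

# With the % operator, a literal percent sign must be written as %%.
old_style = "%s - %.2f%%" % ("Person", confidence)

# str.format uses {} placeholders, so a plain % needs no escaping.
new_style = "{} - {:.2f}%".format("Person", confidence)

print(old_style)
print(new_style)
```

Both lines print the same text; only the `%` operator version needs the doubled `%%`.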

StackOverflow answer here: https://stackoverflow.com/a/28343785

-------------------------------------------------------HELP--------------------------------------------------------------------------------------

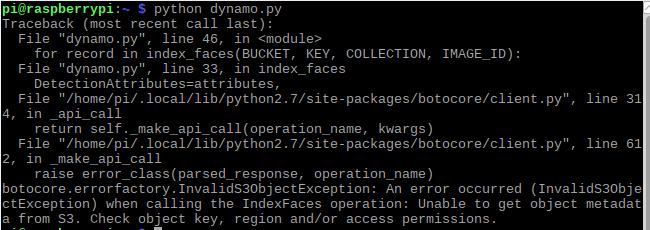

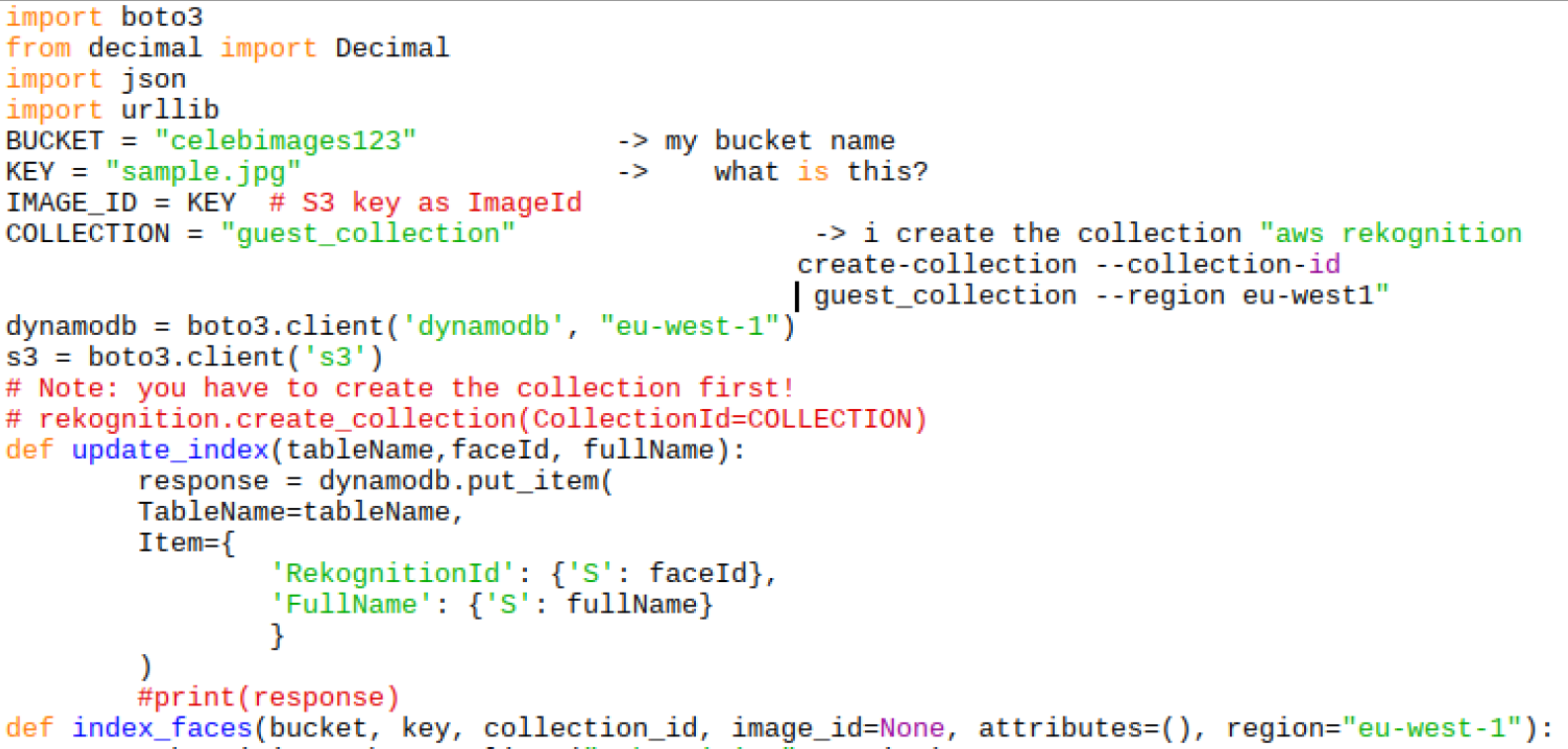

Hi, I am trying to execute the code with Python as mentioned above. My bucket name is "celebimages123", I created the collection ID "guest_collection", and my DynamoDB table is also named "guest_collection". While executing, I get the errors shown below every time. Can you help me?

Hi @qu2toy, maybe you are using another region, such as us-east-1. You must create the collection in the same region:

collectionId='MyCollection'

region="eu-west-1"

client=boto3.client("rekognition", region)

#Create a collection

print('Creating collection:' + collectionId)

response=client.create_collection(CollectionId=collectionId)

Hope to help you!

[ERROR] ParamValidationError: Parameter validation failed:

Invalid type for parameter Image.S3Object.Bucket, value: {'key1': 'value1', 'key2': 'value2', 'key3': 'value3'}, type: <class 'dict'>, valid types: <class 'str'>

Invalid type for parameter Image.S3Object.Name, value: <bootstrap.LambdaContext object at 0x7f6ec3a2cc50>, type: <class 'bootstrap.LambdaContext'>, valid types: <class 'str'>

Traceback (most recent call last):

File "/var/task/lambda_function.py", line 16, in detect_faces

Attributes=attributes

File "/var/runtime/botocore/client.py", line 320, in _api_call

return self._make_api_call(operation_name, kwargs)

File "/var/runtime/botocore/client.py", line 596, in _make_api_call

api_params, operation_model, context=request_context)

File "/var/runtime/botocore/client.py", line 632, in _convert_to_request_dict

api_params, operation_model)

File "/var/runtime/botocore/validate.py", line 291, in serialize_to_request

raise ParamValidationError(report=report.generate_report())

END RequestId: 51e16fc4-6229-4e76-82ed-a1de3f2aa8f8

Thanks for this.

Here's my Python 3 implementation, which supports passing in a file pointer as well as an S3 bucket. I've only implemented the method for detect_labels.

Code

class Rekognition():

def __init__(self, session, max_labels = 10, min_confidence = 80):

self.rek_cli = session.client('rekognition')

self.max_labels = max_labels

self.min_confidence = min_confidence

def labels_from_s3(self, bucket_str, key):

response = self.rek_cli.detect_labels(

Image=

{

"S3Object": {

"Bucket": bucket_str,

"Name": key,

}

},

MaxLabels=self.max_labels,

MinConfidence=self.min_confidence,

)

return response['Labels']

def labels_from_file(self, fp):

fp = fp.read()

response = self.rek_cli.detect_labels(

Image=

{

'Bytes': fp,

},

MaxLabels=self.max_labels,

MinConfidence=self.min_confidence,

)

return response['Labels']

Usage

Create a Rekognition object passing in a boto3.Session

r = Rekognition(session)

Rekognise an image from an S3 bucket:

result = r.labels_from_s3('your-bucket', 'your-key')

Rekognise an image from a file pointer.

im = open('any-old.jpg', 'rb')

result = r.labels_from_file(im)

Print the results:

for label in result:

print ("{Name} - {Confidence}%".format(**label))

Output

Field - 99.86431121826172%

Outdoors - 99.8351058959961%

Grassland - 99.8351058959961%

Nature - 99.07447052001953%

Plant - 95.42001342773438%

Grass - 95.42001342773438%

import boto3

BUCKET = "*****"

KEY_SOURCE = "photo.jpg"

KEY_TARGET = "grp1.jpg"

def compare_faces(bucket, key, bucket_target, key_target, threshold=80, region="eu-east-1"):

rekognition = boto3.client("rekognition", aws_access_key_id='',

aws_secret_access_key='',

region_name='us-east-1')

response = rekognition.compare_faces(

SourceImage={

"S3Object": {

"Bucket": bucket,

"Name": key,

}

},

TargetImage={

"S3Object": {

"Bucket": bucket_target,

"Name": key_target,

}

},

SimilarityThreshold=threshold,

)

return response['SourceImageFace'], response['FaceMatches']

source_face, matches = compare_faces(BUCKET, KEY_SOURCE, BUCKET, KEY_TARGET)

# the main source face

print("Source Face ({Confidence}%)".format(**source_face))

# one match for each target face

for match in matches:

print("Target Face ({Confidence}%)".format(**match['Face']))

print("Similarity : {}%".format(match['Similarity']))

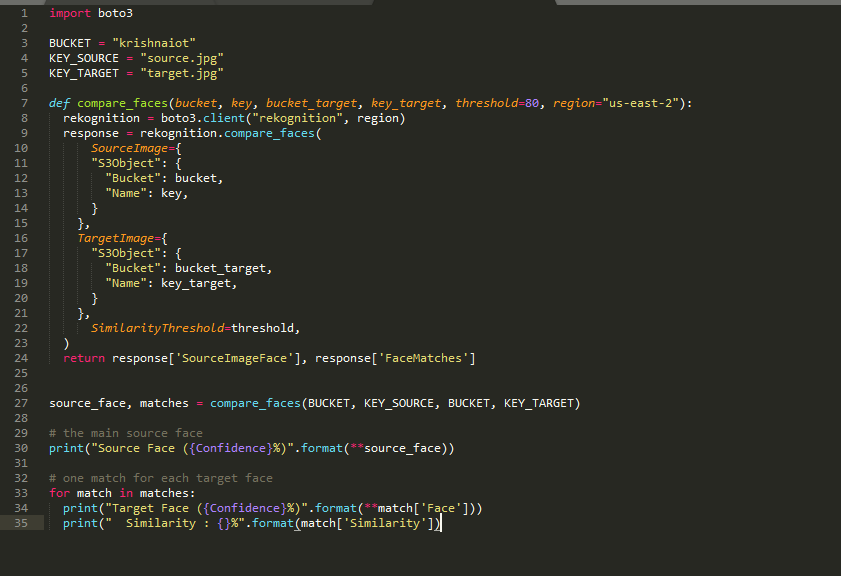

I am running the code above and I get the following error, even after giving public access to my S3 bucket and objects and granting full-access permissions for Amazon Rekognition. Can anyone please tell me which permissions are required?

I am still facing this error; kindly suggest a solution:

ERROR:

" botocore.errorfactory.InvalidS3ObjectException: An error occurred (InvalidS3ObjectException) when calling the CompareFaces operation: Unable to get object metadata from S3. Check object key, region and/or access permissions. "

import boto3

BUCKET = "xxxx"

KEY_SOURCE = "xxx.jpg"

KEY_TARGET = "xxxx1.jpg"

def compare_faces(bucket, key, bucket_target, key_target, threshold=80, region="xxxxx"):

rekognition = boto3.client("rekognition", region)

response = rekognition.compare_faces(

SourceImage={

"S3Object": {

"Bucket": bucket,

"Name": key,

}

},

TargetImage={

"S3Object": {

"Bucket": bucket_target,

"Name": key_target,

}

},

SimilarityThreshold=threshold,

)

return response['SourceImageFace'], response['FaceMatches']

source_face, matches = compare_faces(BUCKET, KEY_SOURCE, BUCKET, KEY_TARGET)

# the main source face

print("Source Face ({Confidence}%)".format(**source_face))

# one match for each target face

for match in matches:

print("Target Face ({Confidence}%)".format(**match['Face']))

print("Similarity : {}%".format(match['Similarity']))

This one should work. Replace the xxx placeholders with your own bucket name, object keys, and region; credentials are picked up from your AWS configuration.
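Since the InvalidS3ObjectException message asks to check the region, one thing worth verifying is that the bucket lives in the same region as the Rekognition client, which Rekognition requires for S3-hosted images. Here is a minimal sketch, assuming an S3 client created with `boto3.client("s3")`; the helper names `bucket_region` and `region_mismatch` are made up for this example.

```python
def bucket_region(s3_client, bucket):
    """Return the region name where the bucket is hosted."""
    location = s3_client.get_bucket_location(Bucket=bucket)["LocationConstraint"]
    # S3 reports None for us-east-1 and the legacy value "EU" for eu-west-1.
    if location is None:
        return "us-east-1"
    if location == "EU":
        return "eu-west-1"
    return location


def region_mismatch(s3_client, bucket, rekognition_region):
    """Return the bucket's region if it differs from Rekognition's, else None."""
    actual = bucket_region(s3_client, bucket)
    return None if actual == rekognition_region else actual
```

If `region_mismatch` returns a region name, creating the Rekognition client in that region (or moving the images) is the likely fix. A wrong object key or missing permissions can still produce the same error.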

Hello,

I am very new to all of this, so this may be a silly question, but when I try to run the facial comparison code I get the following error:

ClientError: An error occurred (UnrecognizedClientException) when calling the CompareFaces operation: The security token included in the request is invalid.

I have set up an IAM user "admin" and given the user the AmazonRekognitionFullAccess, AmazonS3ReadOnlyAccess and AdministratorAccess permissions. I am running the code using Anaconda/Spyder/Python 3.7 and I have boto installed. How do you specify credentials so it can use Rekognition? Or should I be running it some other way?

Hi @jessekotsch how did you configure your credentials?

If you have configured an AWS CLI profile and set it as the default profile, it should work.

Something like this:

export AWS_PROFILE=your-profile-name

Hey, thanks, this is so helpful.

Any idea how I can parse (or filter) the DetectLabels response? Say I would like to get only the images with no human in them.

@toto88 you can just remove them from the labels list in your favorite programming language (if you are using one of the available AWS SDKs). The DetectLabels response is just a JSON object, so you should be able to parse it with any JSON parser out there, exclude the "Person"/"People"/"Human" labels and use/store all the others.
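In Python that filtering could look like the sketch below. It works on the `Labels` list returned by `detect_labels`; the set of label names comes from the sample output in the gist, and the helper names are made up for this example.

```python
HUMAN_LABELS = {"Person", "People", "Human"}


def has_human(labels):
    """Return True if any label in the list refers to a human."""
    return any(label["Name"] in HUMAN_LABELS for label in labels)


def without_humans(labels):
    """Return the labels with the human-related ones removed."""
    return [label for label in labels if label["Name"] not in HUMAN_LABELS]


labels = [
    {"Name": "People", "Confidence": 99.2436447144},
    {"Name": "Person", "Confidence": 99.2436447144},
    {"Name": "Human", "Confidence": 99.2351226807},
    {"Name": "Clothing", "Confidence": 96.7797698975},
    {"Name": "Suit", "Confidence": 96.7797698975},
]
print(has_human(labels))
print([label["Name"] for label in without_humans(labels)])
```

To keep only images with no human, run `detect_labels` on each image and keep those for which `has_human` returns False.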

@alexcasalboni thanks for your reply. How can I get the full list of labels? I have checked the FAQ page, but it only has some examples. https://aws.amazon.com/rekognition/faqs/

@toto88 unfortunately there is no public list of labels available yet. Rekognition uses thousands of labels and the model is updated periodically under the hood. For now, the best way to know if a given label is recognized is to submit a few images with that object/entity and verify it directly :)

@alexcasalboni thanks for all your effort! I tried your script but I am having an issue with print. Do you have any idea what I can do? Also, MaxLabels and MinConfidence do not seem to work either.

@sunhoro I bet you're using Python 3 :) You just need to convert the script to Python 3 (hint: use the print function, with parentheses).
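For reference, here is a minimal sketch of the two changes the scripts need under Python 3: `print` becomes a function, and `dict.iteritems()` becomes `dict.items()`. The sample data is copied from the expected output in the gist, and `format_label` is a helper made up for this example.

```python
def format_label(label):
    """Format one entry of the detect_labels response."""
    return "{Name} - {Confidence}%".format(**label)


labels = [
    {"Name": "Clothing", "Confidence": 96.7797698975},
    {"Name": "Suit", "Confidence": 96.7797698975},
]
for label in labels:
    # Python 2: print "{Name} - {Confidence}%".format(**label)
    print(format_label(label))

quality = {"Sharpness": 10.0, "Brightness": 31.4071826935}
# Python 2: for name, value in quality.iteritems():
for name, value in quality.items():
    print(" {} : {}".format(name, value))
```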

The face emotion part displays all the types of available emotions. How can I display the emotion with highest confidence?
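One possible way to do that is to take the maximum over the `Emotions` list by its `Confidence` value. This is a minimal sketch working on a single entry of `FaceDetails`; the helper name `top_emotion` is made up for this example, and the sample values are rounded from the expected output in the gist.

```python
def top_emotion(face):
    """Return the emotion dict with the highest confidence, or None."""
    emotions = face.get("Emotions") or []
    if not emotions:
        return None
    return max(emotions, key=lambda emotion: emotion["Confidence"])


face = {
    "Emotions": [
        {"Type": "HAPPY", "Confidence": 12.37},
        {"Type": "SAD", "Confidence": 14.60},
        {"Type": "DISGUSTED", "Confidence": 3.81},
    ]
}
best = top_emotion(face)
print(" {Type} : {Confidence}%".format(**best))
```

In the face detection script this would replace the loop over `face['Emotions']`.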

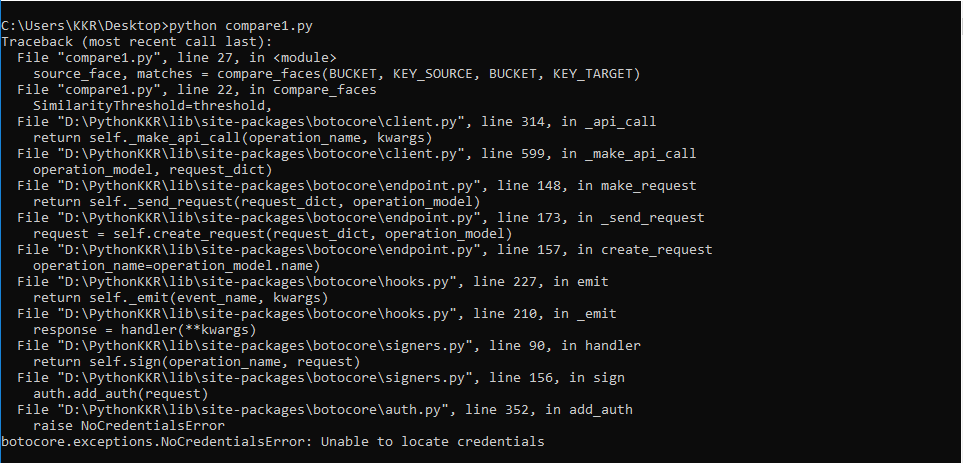

---------------------------------HELP REGARDING COURSE PROJECT-----------------------------------------------

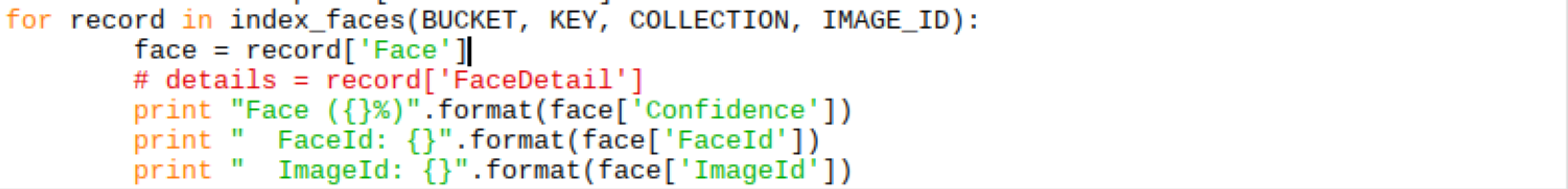

Hi, I have a course project to present this coming Monday. I am trying to execute the face comparison code in AWS using Python as mentioned above. My bucket name is "krishnaiot" and I have executed the code as shown in the "comparingcode" screenshot. While executing, I get one of the three errors shown below every time. Kindly provide guidance on this as early as possible, and let me know if I am missing any other variables.