| I worked with this model during EthDenver: https://github.com/spro/char-rnn.pytorch . I did not manage to get the right input shape for https://github.com/zkonduit/pyezkl/tree/main/examples/tutorial (loading the model to convert it to *.onnx). | |

| I learned a bit about ML though. :P | |

| You | |

| 11:48 AM | |

| https://gist.github.com/bshambaugh/af01e7366ac7f43abd8295533703d990 (other scratch work) | |

| .... | |

| You | |

| 11:51 AM | |

| Here are my Colab Python sketches: https://colab.research.google.com/drive/1mi2Rcehz-YWOeXGBNjCe-COR5XhnfPTB |

RNN clearly explained: https://www.youtube.com/watch?v=AsNTP8Kwu80 .

A guide on character level RNN: https://edumunozsala.github.io/BlogEms/fastpages/jupyter/rnn/lstm/pytorch/2020/09/03/char-level-text-generator-pytorch.html

Language Model for RNN: https://ppasumarthi-69210.medium.com/language-model-using-char-rnn-1df53f735880

Generating text with RNN: http://www.cs.utoronto.ca/~ilya/pubs/2011/LANG-RNN.pdf

RNN and Vanish

Perhaps this gives you the shape???

https://github.com/eliben/deep-learning-samples/blob/master/min-char-rnn/min-char-lstm.py

For reference, reworded:

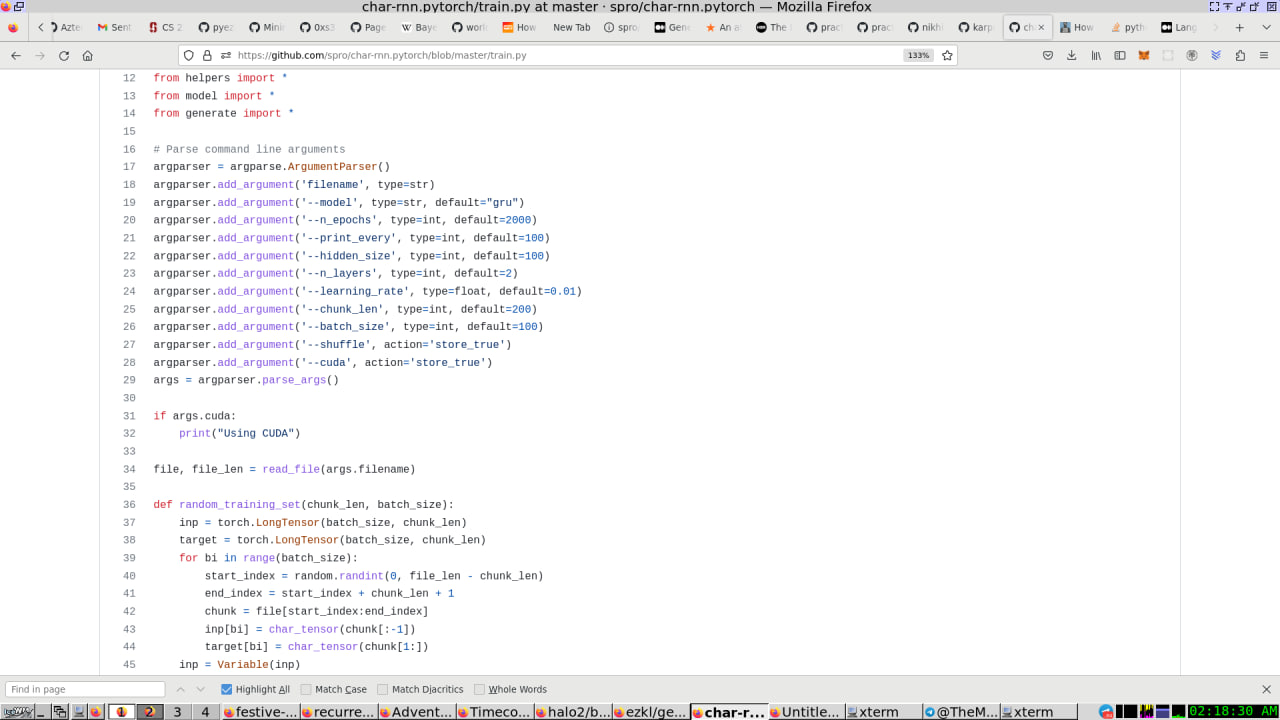

- https://github.com/spro/char-rnn.pytorch (see train.py and model.py) [Model we want to use]

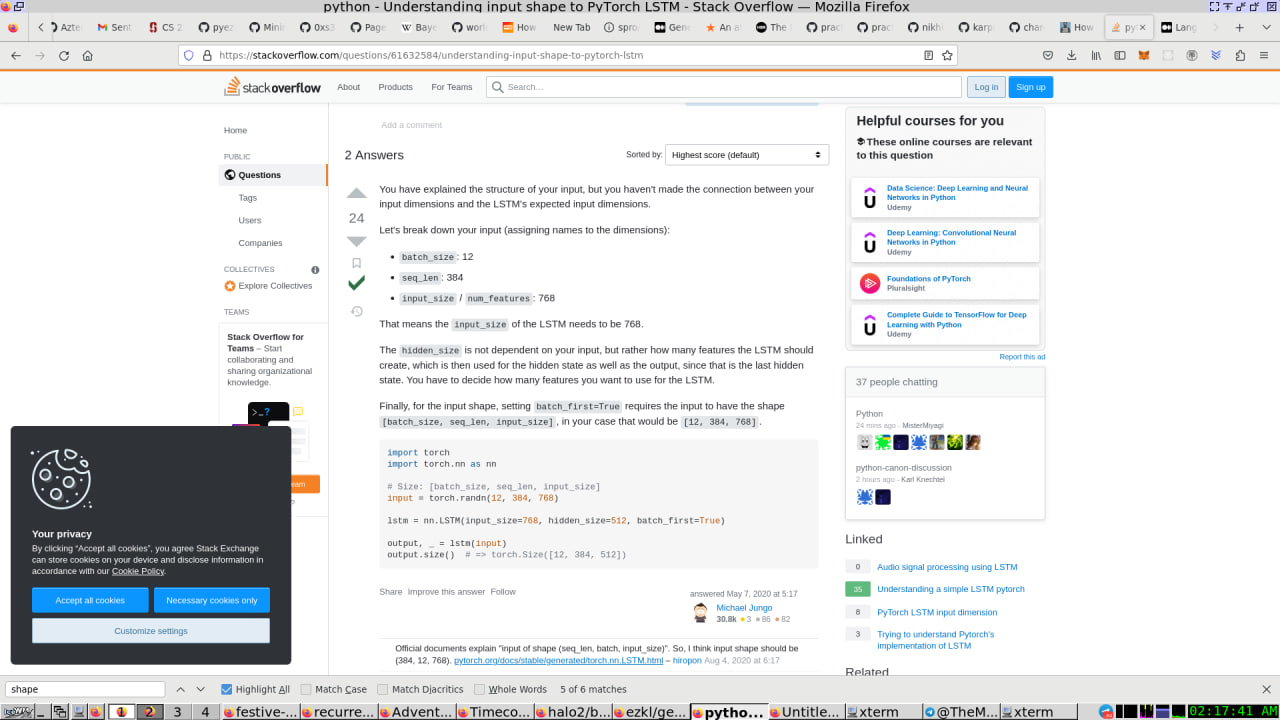

- https://stackoverflow.com/questions/61632584/understanding-input-shape-to-pytorch-lstm (# Size: [batch_size, seq_len, input_size]) [shape that the model input needs to be]

- https://pytorch.org/docs/stable/generated/torch.nn.LSTM.html, https://pytorch.org/docs/stable/generated/torch.nn.Embedding.html, https://pytorch.org/docs/stable/generated/torch.nn.GRU.html?highlight=gru#torch.nn.GRU, https://pytorch.org/docs/stable/generated/torch.nn.Linear.html?highlight=linear#torch.nn.Linear [supporting documents from PyTorch]
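To make the `[batch_size, seq_len, input_size]` layout from the Stack Overflow link concrete, here is a minimal sketch using `torch.nn.LSTM` with `batch_first=True`. The sizes are arbitrary values chosen for illustration, not taken from any of the linked models.

```python
import torch
import torch.nn as nn

# Arbitrary illustrative sizes (assumptions, not from the char-rnn repos).
batch_size, seq_len, input_size, hidden_size = 2, 5, 8, 16

# batch_first=True makes the LSTM expect [batch_size, seq_len, input_size].
lstm = nn.LSTM(input_size, hidden_size, num_layers=1, batch_first=True)

x = torch.randn(batch_size, seq_len, input_size)
output, (h_n, c_n) = lstm(x)

# output holds the hidden state for every time step;
# h_n and c_n hold only the final step, indexed by layer first.
output_shape = tuple(output.shape)  # (batch_size, seq_len, hidden_size)
h_shape = tuple(h_n.shape)          # (num_layers, batch_size, hidden_size)
print(output_shape, h_shape)
```

Without `batch_first=True`, the same module expects `[seq_len, batch_size, input_size]` instead, which is an easy source of shape mismatches.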

2a) Shape needs to fit into a script like this: https://github.com/zkonduit/ezkl/blob/main/examples/onnx/1l_relu/gen.py#L13

following this: https://github.com/zkonduit/pyezkl/tree/main/examples/tutorial

2b) Workflow needs to be:

"ezkl is a library and command-line tool for doing inference for deep learning models and other computational graphs in a zk-snark. It enables the following workflow:

Define a computational graph, for instance a neural network (but really any arbitrary set of operations), as you would normally in pytorch or tensorflow.

Export the final graph of operations as an .onnx file and some sample inputs to a .json file.

Point ezkl to the .onnx and .json files to generate a ZK-SNARK circuit with which you can prove statements such as:

Brent Shambaugh, [3/2/23 11:02 AM]

Jason's comments about RNN Model from before:

- 2 Inputs

- What are the shapes of the inputs

Brent Shambaugh, [3/2/23 11:07 AM]

1) is the model that we want to use.

2) is the likely input shape for the model that we want to use, which will be processed by ezkl/pyezkl.

3) is additional information to explain the model that we want to use.

2a) Example of an export script for ezkl, but it may be the wrong shape.

2b) process for creating a custom shape for ezkl

Brent Shambaugh, [3/2/23 11:09 AM]

2b) [x,y,z] should be [x,y]

Brent Shambaugh, [3/2/23 11:15 AM]

https://gist.github.com/bshambaugh/af01e7366ac7f43abd8295533703d990

Brent Shambaugh, [3/2/23 11:14 AM]

this is the likely script to create a shape

Brent Shambaugh, [3/2/23 11:15 AM]

I mean ONNX file

The models for https://github.com/nikhilbarhate99/Char-RNN-PyTorch and https://github.com/spro/char-rnn.pytorch are similar, so look at the documentation.

Brent Shambaugh, [3/2/23 11:55 AM]

https://gist.github.com/karpathy/d4dee566867f8291f086 (shortest version of char-rnn, use for inspiration as it is written in numpy, but not for implementation)

cross reference with https://www.cs.ox.ac.uk/people/nando.defreitas/machinelearning/ —> https://www.youtube.com/watch?v=56TYLaQN4N8 (lecture 12) &&

Brent Shambaugh, [3/2/23 12:19 PM]

Train on this: https://pytorch.org/tutorials/beginner/nlp/sequence_models_tutorial.html (LSTMs in PyTorch, used in char-rnn)

Brent Shambaugh, [3/2/23 1:19 PM]

Exporting a file from PyTorch and Using it in ONNX Runtime: https://pytorch.org/tutorials/advanced/super_resolution_with_onnxruntime.html

Brent Shambaugh, [3/2/23 1:27 PM]

—-> additional ref: training LSTM: https://www.cs.ox.ac.uk/people/nando.defreitas/machinelearning/practicals/practical6.pdf

Brent Shambaugh, [3/2/23 1:28 PM]

—-> https://github.com/oxford-cs-ml-2015/practical6

Brent Shambaugh, [3/4/23 8:54 PM]

https://pytorch.org/docs/stable/generated/torch.nn.LSTM.html

Brent Shambaugh, [3/4/23 8:55 PM]

https://pytorch.org/docs/stable/generated/torch.nn.GRU.html?highlight=gru

Brent Shambaugh, [3/4/23 8:55 PM]

https://stackoverflow.com/questions/61632584/understanding-input-shape-to-pytorch-lstm

Jason, [2/24/23 1:01 PM]

https://github.com/zkonduit/ezkl

Jason, [2/26/23 3:34 PM]

https://github.com/nikhilbarhate99/Char-RNN-PyTorch/blob/master/CharRNN.py

Jason, [2/26/23 3:34 PM]

This might be doable

Jason, [2/26/23 3:50 PM]

The original is here: https://github.com/karpathy/char-rnn

Brent Shambaugh, [3/1/23 5:10 PM]

https://gist.github.com/karpathy/d4dee566867f8291f086 (minimal RNN)

Brent Shambaugh, [3/1/23 5:36 PM]

https://github.com/spro/char-rnn.pytorch (also try this one)

Brent Shambaugh, [3/1/23 11:50 PM]

https://github.com/zkonduit/ezkl/blob/4a444b249396b6a09e4c323143f6daf1b71b4c92/README.md#onnx-examples

onnx examples

This repository includes onnx example files as a submodule for testing out the cli.

If you want to add a model to examples/onnx, open a PR creating a new folder within examples/onnx with a descriptive model name. This folder should contain:

an input.json input file, with the fields expected by the ezkl cli.

a network.onnx file representing the trained model

a gen.py file for generating the .json and .onnx files following the general structure of examples/tutorial/tutorial.py.

TODO: add associated python files in the onnx model directories.

It looks like the export function is failing because the shape of the input tensor is not matching the provided input_shape argument.

When calling the export function, you need to make sure that the input_array argument has the correct shape for the model input. In this case, the model input size is (batch_size, input_size), where batch_size is the number of input sequences and input_size is the length of each input sequence. So if you want to export a single input sequence with length 4, you should pass a 2D tensor with shape (1, 4).

Here's an example of how to do this:

input_array = torch.tensor([[0, 1, 2, 3]])

hidden = rnn.init_hidden(1)

export(rnn, [1, 4], input_array, hidden)

This will export the model with an input tensor of shape (1, 4) and a batch size of 1. Note that you need to wrap the input values in a PyTorch tensor before passing them to the export function.
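For the "2 inputs" question, here is a minimal sketch of a character-level model that takes both a character index and a hidden state, plus a helper that passes both to `torch.onnx.export`. It assumes an Embedding -> GRU -> Linear layout that consumes one character per step. `TinyCharRNN`, `export_char_rnn`, the input/output names, and all sizes are hypothetical and not taken from any of the linked repos, and whether ezkl can handle the resulting graph is not checked here.

```python
import torch
import torch.nn as nn


class TinyCharRNN(nn.Module):
    """Hypothetical stand-in for a char-rnn: Embedding -> GRU -> Linear."""

    def __init__(self, vocab_size, hidden_size, n_layers=1):
        super().__init__()
        self.hidden_size = hidden_size
        self.n_layers = n_layers
        self.encoder = nn.Embedding(vocab_size, hidden_size)
        self.rnn = nn.GRU(hidden_size, hidden_size, n_layers)
        self.decoder = nn.Linear(hidden_size, vocab_size)

    def forward(self, inp, hidden):
        # inp: (batch_size,) integer character indices for ONE time step.
        batch_size = inp.size(0)
        encoded = self.encoder(inp)  # (batch_size, hidden_size)
        # The GRU expects (seq_len, batch_size, features); seq_len is 1 here.
        output, hidden = self.rnn(encoded.view(1, batch_size, -1), hidden)
        logits = self.decoder(output.view(batch_size, -1))
        return logits, hidden  # (batch_size, vocab_size), same shape as input hidden

    def init_hidden(self, batch_size):
        return torch.zeros(self.n_layers, batch_size, self.hidden_size)


def export_char_rnn(model, path="char_rnn.onnx"):
    """Export one time step, naming both graph inputs explicitly."""
    model.eval()
    inp = torch.zeros(1, dtype=torch.long)
    hidden = model.init_hidden(1)
    torch.onnx.export(
        model,
        (inp, hidden),  # tuple: one entry per forward() argument
        path,
        input_names=["input", "hidden_in"],
        output_names=["output", "hidden_out"],
    )


model = TinyCharRNN(vocab_size=100, hidden_size=32)
inp = torch.tensor([5])        # shape (1,): one character index
hidden = model.init_hidden(1)  # shape (1, 1, 32)
logits, new_hidden = model(inp, hidden)
print(tuple(inp.shape), tuple(hidden.shape), tuple(logits.shape))
```

Calling `export_char_rnn(model)` would then write the ONNX file. The point of the sketch is that the two inputs have different ranks, so a single `input_shape` argument cannot describe both.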

https://github.com/zkonduit/pyezkl/tree/main/examples/tutorial

As noted above this graph takes in 3 inputs and produces 2 outputs. The main function instantiates an instance of Circuit and saves it to an Onnx file.

    import json

    import torch

    # Circuit is the torch.nn.Module defined earlier in the tutorial.

    def main():
        torch_model = Circuit()
        # Input to the model
        shape = [3, 2, 2]
        x = 0.1*torch.rand(1, *shape, requires_grad=True)
        y = 0.1*torch.rand(1, *shape, requires_grad=True)
        z = 0.1*torch.rand(1, *shape, requires_grad=True)
        torch_out = torch_model(x, y, z)
        # Export the model
        torch.onnx.export(torch_model,               # model being run
                          (x, y, z),                 # model input (or a tuple for multiple inputs)
                          "network.onnx",            # where to save the model (can be a file or file-like object)
                          export_params=True,        # store the trained parameter weights inside the model file
                          opset_version=10,          # the ONNX version to export the model to
                          do_constant_folding=True,  # whether to execute constant folding for optimization
                          input_names=['input'],     # the model's input names
                          output_names=['output'],   # the model's output names
                          dynamic_axes={'input': {0: 'batch_size'},    # variable length axes
                                        'output': {0: 'batch_size'}})

        # Flatten each input tensor into a plain list for the JSON file
        d = ((x).detach().numpy()).reshape([-1]).tolist()
        dy = ((y).detach().numpy()).reshape([-1]).tolist()
        dz = ((z).detach().numpy()).reshape([-1]).tolist()

        data = dict(input_shapes=[shape, shape, shape],
                    input_data=[d, dy, dz],
                    output_data=[((o).detach().numpy()).reshape([-1]).tolist() for o in torch_out])

        # Serialize data into file:
        json.dump(data, open("input.json", 'w'))

    if __name__ == "__main__":
        main()

Running the file generates an .onnx file. Note that this also creates the required input JSON file, in which the outputs of the PyTorch model are used as the public inputs to the circuit.
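The layout of that JSON file can be sketched without PyTorch. The field names `input_shapes` and `input_data` are the ones used in the tutorial script above. The helpers `flatten` and `shape_of` and the sample values are hypothetical, and `output_data` is left out because it depends on running the model.

```python
import json


def flatten(nested):
    """Recursively flatten nested lists into one flat list."""
    if not isinstance(nested, list):
        return [nested]
    flat = []
    for item in nested:
        flat.extend(flatten(item))
    return flat


def shape_of(nested):
    """Shape of a regular (non-ragged, non-empty) nested list."""
    shape = []
    while isinstance(nested, list):
        shape.append(len(nested))
        nested = nested[0]
    return shape


# One sample input with shape [3, 2, 2], matching the tutorial's `shape`.
x = [[[0.01, 0.02], [0.03, 0.04]],
     [[0.05, 0.06], [0.07, 0.08]],
     [[0.09, 0.10], [0.11, 0.12]]]

data = {
    "input_shapes": [shape_of(x)],  # one shape per model input
    "input_data": [flatten(x)],     # one flat list per model input
}
payload = json.dumps(data)
print(payload)
```

Note that the tutorial lists the shape without the leading batch dimension of 1, while the tensors themselves are created with it.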

Brent Shambaugh, [3/2/23 12:04 AM]

here is what I am working with loading the .onnx

Brent Shambaugh, [3/2/23 1:37 AM]

maybe this says something about shapes: https://machinelearningmastery.com/reshape-input-data-long-short-term-memory-networks-keras/

Brent Shambaugh, [3/2/23 1:44 AM]

https://github.com/spro/char-rnn.pytorch/blob/master/model.py

Brent Shambaugh, [3/2/23 2:06 AM]

https://ppasumarthi-69210.medium.com/language-model-using-char-rnn-1df53f735880 (shapes?)

Brent Shambaugh, [3/2/23 2:11 AM]

https://stackoverflow.com/questions/61632584/understanding-input-shape-to-pytorch-lstm (# Size: [batch_size, seq_len, input_size])

Brent Shambaugh, [3/2/23 2:14 AM]

https://jvns.ca/blog/2020/11/30/implement-char-rnn-in-pytorch/ (another thing that might help find shapes) ... hint .... look at the training python code

Use this for inspiration: https://github.com/hunter-z-hunter/hunter-z-hunter/blob/main/hunter.ipynb

https://github.com/hunter-z-hunter/hunter-z-hunter/blob/main/setup/trainer.py (maybe this is useful?)

review this: https://github.com/zkonduit/ezkl