I used to be sceptical about ML in general.

- after university in the '90s

- after the Yandex School of Data Analysis in 2008

- after Andrew Ng's famous first course on Coursera in 2010

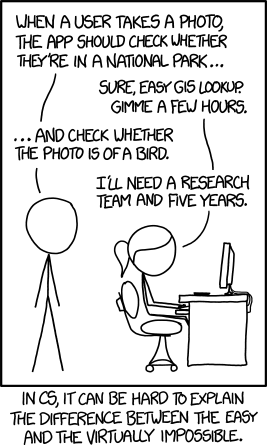

- because who could come up with an algorithm that tells a mother dog apart from her puppies?

- because it's all about spam/not-spam or recognizing digits in the MNIST dataset

- because spam detection sucks, recommendation systems suck, anomaly detection too, etc.

- because I had no clue how to debug it

- because it's just for fun, so why should I care?

- (overheard at a meetup) because it's not fair: it's like telling me that my chess etude can be solved by promoting all my pawns to queens. That's not the solution I'm looking for.

- Let's see.

---

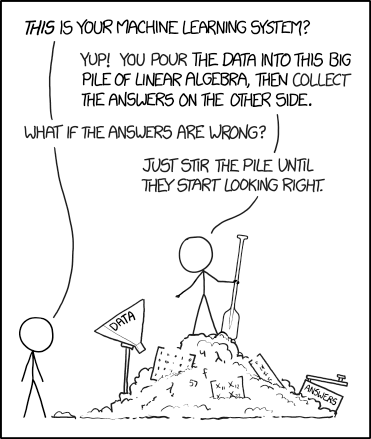

Regression and classification: a neural network as a function f: X -> Y

- to be precise, a function f(meta_parameters, weights, X) -> Y

- http://playground.tensorflow.org/
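
To make the f(meta_parameters, weights, X) -> Y view concrete, here is a minimal sketch of a fully connected network's forward pass in plain NumPy (not the TensorFlow API behind the playground). The names `init_weights`, `f` and the `meta_parameters` dict are hypothetical: the layer sizes and the activation function play the role of meta-parameters, fixed before training, while `weights` is the list of (W, b) pairs that training adjusts.

```python
import numpy as np

def init_weights(layer_sizes, rng):
    """Create one (W, b) pair per layer; layer_sizes itself is a meta-parameter."""
    return [
        (rng.normal(0.0, 1.0 / np.sqrt(n_in), size=(n_in, n_out)), np.zeros(n_out))
        for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])
    ]

def f(meta_parameters, weights, x):
    """Forward pass of a fully connected network; x has shape (batch, n_in)."""
    activation = meta_parameters["activation"]
    h = x
    for i, (W, b) in enumerate(weights):
        h = h @ W + b
        if i < len(weights) - 1:   # no nonlinearity on the output layer (regression)
            h = activation(h)
    return h

# Hypothetical meta-parameters: 2 inputs, two hidden layers of 8 units, 1 output
meta_parameters = {"layer_sizes": [2, 8, 8, 1], "activation": np.tanh}
rng = np.random.default_rng(0)
weights = init_weights(meta_parameters["layer_sizes"], rng)

x = np.array([[0.5, -1.0], [1.5, 2.0], [0.0, 0.0]])
y_pred = f(meta_parameters, weights, x)
print(y_pred.shape)  # (3, 1)
```

With an all-zero input and zero biases every layer outputs zeros, so the last row of `y_pred` is 0. Changing `layer_sizes` or the activation changes the family of functions; changing `weights` picks a member of that family.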

---

Cost function, regularization

- C(y', y) -> R, y' = f(meta_parameters, weights, x), y is the 'label' value assigned to x. It shows how far the predicted value y' is from the desired value y.
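
A minimal sketch of one common choice of C, the mean squared error used for regression (classification usually uses cross-entropy instead), plus an L2 penalty as one common form of regularization. The function names and the coefficient `lam` are hypothetical, and the (W, b) weight layout is an assumption mirroring a plain fully connected network.

```python
import numpy as np

def mse_cost(y_pred, y):
    """C(y', y): mean squared distance between predictions y' and labels y."""
    return float(np.mean((y_pred - y) ** 2))

def l2_penalty(weights, lam):
    """L2 regularization: lam times the sum of squared weight entries (biases excluded)."""
    return lam * sum(float(np.sum(W ** 2)) for W, _ in weights)

def regularized_cost(y_pred, y, weights, lam=1e-3):
    """The quantity actually minimized during training: data fit plus penalty."""
    return mse_cost(y_pred, y) + l2_penalty(weights, lam)

y = np.array([1.0, 0.0, 2.0])
y_pred = np.array([0.5, 0.0, 2.0])
weights = [(np.array([[1.0, -2.0]]), np.zeros(2))]   # one hypothetical (W, b) layer

print(mse_cost(y_pred, y))                              # 0.25 / 3 ≈ 0.0833
print(regularized_cost(y_pred, y, weights, lam=0.1))    # ≈ 0.0833 + 0.1 * 5 = 0.5833
```

The penalty does not look at the data at all: it only pushes the weights toward small values, trading a slightly worse fit on the training set for a smoother function that tends to generalize better.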

---

Neural network training

- https://ml4a.github.io/ml4a/how_neural_networks_are_trained/

- http://colah.github.io/posts/2014-03-NN-Manifolds-Topology/

- training set selection, keeping track of learning performance, pitfalls
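
The loop behind those posts (forward pass, cost, gradients by backpropagation, gradient step) can be sketched in NumPy under explicit assumptions: toy data (noisy sin(x)), one hidden tanh layer, full-batch gradient descent and hand-derived gradients for the mean squared error. Training and validation cost are logged separately, the simplest way to keep track of learning performance; all names and numbers are illustrative, not taken from the linked posts.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data (assumption): noisy samples of sin(x), split into training and validation sets
x = rng.uniform(-3.0, 3.0, size=(200, 1))
y = np.sin(x) + rng.normal(0.0, 0.1, size=x.shape)
x_train, y_train = x[:150], y[:150]
x_val, y_val = x[150:], y[150:]

# Hypothetical meta-parameters: hidden layer size, learning rate, number of epochs
hidden, lr, epochs = 16, 0.01, 5000
W1 = rng.normal(0.0, 1.0, size=(1, hidden))
b1 = np.zeros(hidden)
W2 = rng.normal(0.0, 1.0 / np.sqrt(hidden), size=(hidden, 1))
b2 = np.zeros(1)

def forward(inputs):
    """Return hidden activations and predictions of a one-hidden-layer tanh network."""
    h = np.tanh(inputs @ W1 + b1)
    return h, h @ W2 + b2

def cost(inputs, targets):
    return float(np.mean((forward(inputs)[1] - targets) ** 2))

history = []
n = len(x_train)
for epoch in range(epochs):
    h, y_pred = forward(x_train)
    # Backpropagation for C = mean((y' - y)^2)
    grad_out = 2.0 * (y_pred - y_train) / n
    grad_W2 = h.T @ grad_out
    grad_b2 = grad_out.sum(axis=0)
    grad_pre = (grad_out @ W2.T) * (1.0 - h ** 2)   # back through tanh
    grad_W1 = x_train.T @ grad_pre
    grad_b1 = grad_pre.sum(axis=0)
    # Plain (full-batch) gradient descent step
    W1 -= lr * grad_W1
    b1 -= lr * grad_b1
    W2 -= lr * grad_W2
    b2 -= lr * grad_b2
    # Track training and validation cost separately
    if epoch % 500 == 0 or epoch == epochs - 1:
        history.append((epoch, cost(x_train, y_train), cost(x_val, y_val)))

for epoch, train_cost, val_cost in history:
    print(f"epoch {epoch:4d}  train {train_cost:.4f}  val {val_cost:.4f}")
```

If the validation cost starts rising while the training cost keeps falling, the network is memorizing the training set (overfitting), one of the classic pitfalls. Frameworks such as TensorFlow compute these gradients automatically instead of by hand.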

---

Architectures: The Neural Network Zoo

- MLP aka Fully Connected Layers

- CNN, Understanding Convolutions

- RNN
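
The key difference between these architectures fits in a few lines. In this NumPy sketch (function names hypothetical), `conv1d` slides one small shared kernel over the input, so a CNN layer needs only `len(kernel)` weights where a fully connected layer mapping the same input to the same output would need `len(x) * len(output)`. `rnn` is a vanilla (Elman) recurrent cell whose hidden state `h` is fed back at every time step. Like most deep learning libraries, `conv1d` actually computes a cross-correlation (the kernel is not flipped).

```python
import numpy as np

def conv1d(x, kernel):
    """'Valid' 1D convolution as used in CNNs: one shared kernel applied at every position."""
    k = len(kernel)
    return np.array([np.dot(x[i:i + k], kernel) for i in range(len(x) - k + 1)])

def rnn(xs, W_x, W_h, b):
    """Vanilla RNN: the hidden state h carries information from one time step to the next."""
    h = np.zeros(W_h.shape[0])
    states = []
    for x_t in xs:
        h = np.tanh(W_x @ x_t + W_h @ h + b)
        states.append(h)
    return np.stack(states)

signal = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
print(conv1d(signal, np.array([-1.0, 1.0])))   # [1. 1. 1. 1.]

rng = np.random.default_rng(0)
xs = rng.normal(size=(4, 3))        # 4 time steps, 3 features each
W_x = rng.normal(size=(5, 3))       # hypothetical hidden size of 5
W_h = rng.normal(size=(5, 5))
states = rnn(xs, W_x, W_h, np.zeros(5))
print(states.shape)                 # (4, 5)
```

Here the kernel [-1, 1] acts as a discrete derivative: the input grows by 1 per step, so every output is 1.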

---

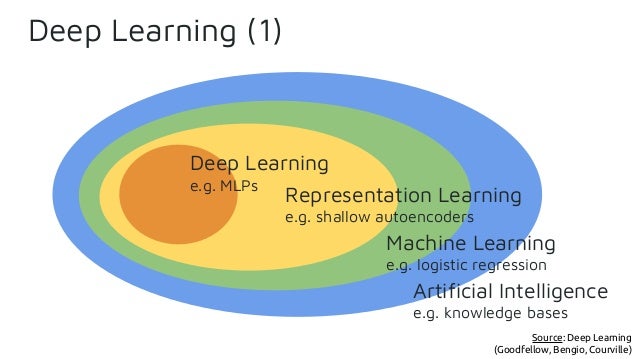

Supervised, unsupervised, self-supervised learning

- autoencoders

- https://sermanet.github.io/tcn/ Time-Contrastive Networks: Self-Supervised Learning from Multi-View Observation

- Split-Brain Autoencoders

- A brief introduction to weakly supervised learning

- https://en.wikipedia.org/wiki/Semi-supervised_learning

- https://arxiv.org/abs/1707.00600 Zero-Shot Learning - A Comprehensive Evaluation of the Good, the Bad and the Ugly

- https://arxiv.org/abs/1802.02871 Online Learning: A Comprehensive Survey

- https://en.wikipedia.org/wiki/Meta_learning_(computer_science) (aka learning to learn) Taxonomy of Methods for Deep Meta Learning

- self-supervised learning
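
Autoencoders are the simplest case of learning without labels: the input serves as its own target. A minimal sketch, assuming toy 3-D data that lies near a 1-D line and a purely linear encoder/decoder trained by gradient descent on the reconstruction error (names and numbers are illustrative):

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data (assumption): 3-D points that actually live near a 1-D line
t = rng.normal(size=(500, 1))
X = t @ np.array([[1.0, 2.0, -1.0]]) + 0.05 * rng.normal(size=(500, 3))

# Encoder squeezes 3 -> 1 (the bottleneck), decoder expands 1 -> 3
W_enc = rng.normal(0.0, 0.1, size=(3, 1))
W_dec = rng.normal(0.0, 0.1, size=(1, 3))
lr = 0.01

def reconstruction_error():
    return float(np.mean((X @ W_enc @ W_dec - X) ** 2))

before = reconstruction_error()
for _ in range(2000):
    code = X @ W_enc                           # latent representation
    X_hat = code @ W_dec                       # reconstruction
    grad_X_hat = 2.0 * (X_hat - X) / X.size    # dC/dX_hat for C = mean((X_hat - X)^2)
    grad_W_dec = code.T @ grad_X_hat
    grad_W_enc = X.T @ (grad_X_hat @ W_dec.T)
    W_dec -= lr * grad_W_dec
    W_enc -= lr * grad_W_enc
after = reconstruction_error()
print(before, after)   # the target was X itself: no labels were needed
```

At its optimum a linear autoencoder like this spans the same subspace as the top principal components (PCA); stacking non-linear layers in the encoder and decoder is what lets deep autoencoders follow curved manifolds.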

---

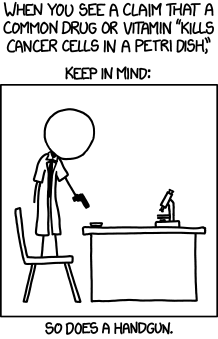

Funny facts

- Everything will work: a neural network is a universal approximator, so whatever you start with will work (theoretically: a large enough network can approximate any continuous function on a compact domain)

- Everything will work, but there is a price: the "No free lunch" theorem http://www.no-free-lunch.org/

- "More data beats clever algorithms, but better data beats more data." Peter Norvig

- Everything is fragile and works only by chance:

- Ensemble learning
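
One standard answer to models that are fragile and work partly by chance is to train many of them and average. This is a sketch of bagging (bootstrap aggregating), with degree-7 polynomial fits standing in for neural networks as deliberately high-variance base models; the toy data, the number of members and the degree are assumptions. Because squared error is convex, the averaged prediction can never have a higher MSE than the average member, and with high-variance members it is typically noticeably lower.

```python
import numpy as np

rng = np.random.default_rng(1)

# Toy regression data (assumption): noisy samples of a smooth function
x = np.sort(rng.uniform(-1.0, 1.0, size=30))
y = np.sin(3 * x) + rng.normal(0.0, 0.3, size=x.shape)
x_test = np.linspace(-1.0, 1.0, 200)
y_true = np.sin(3 * x_test)

def fit_predict(x_tr, y_tr, degree=7):
    """One high-variance base model: a least-squares polynomial fit (stand-in for a network)."""
    coeffs = np.polyfit(x_tr, y_tr, degree)
    return np.polyval(coeffs, x_test)

# Bagging: train each member on a bootstrap resample, then average the predictions
members = []
for _ in range(50):
    idx = rng.integers(0, len(x), size=len(x))
    members.append(fit_predict(x[idx], y[idx]))
ensemble = np.mean(members, axis=0)

single_mse = float(np.mean([np.mean((m - y_true) ** 2) for m in members]))
ensemble_mse = float(np.mean((ensemble - y_true) ** 2))
print("average single-model MSE:", single_mse)
print("ensemble MSE:            ", ensemble_mse)
```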

---

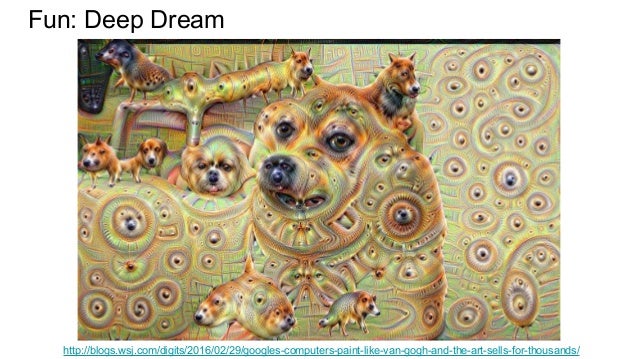

Why Deep Learning is Radically Different from Machine Learning

- Feature visualization vs saliency maps appendix

- http://yosinski.com/deepvis

- Picasso, https://github.com/merantix/picasso, https://arxiv.org/abs/1705.05627

- Visualizing Representations

- Toy and real-world use cases

- image segmentation

- real-time video

- https://www.mpi-inf.mpg.de/departments/computer-vision-and-multimodal-computing/research/weakly-supervised-learning/

- http://openaccess.thecvf.com/content_cvpr_2017/papers/Khoreva_Simple_Does_It_CVPR_2017_paper.pdf Simple Does It: Weakly Supervised Instance and Semantic Segmentation

- https://www.mpi-inf.mpg.de/departments/computer-vision-and-multimodal-computing/research/weakly-supervised-learning/lucid-data-dreaming-for-object-tracking/ (yet another weakly supervised learning example)

- style transfer

- Are we brave enough to look into it? What can we learn from it?

- object detection and tracking

- classification

- notMNIST

- http://yaroslavvb.blogspot.com/2011/09/notmnist-dataset.html intro, with sample error rates in the comments

- http://enakai00.hatenablog.com/entry/2016/08/02/102917 sample images and results


- regression

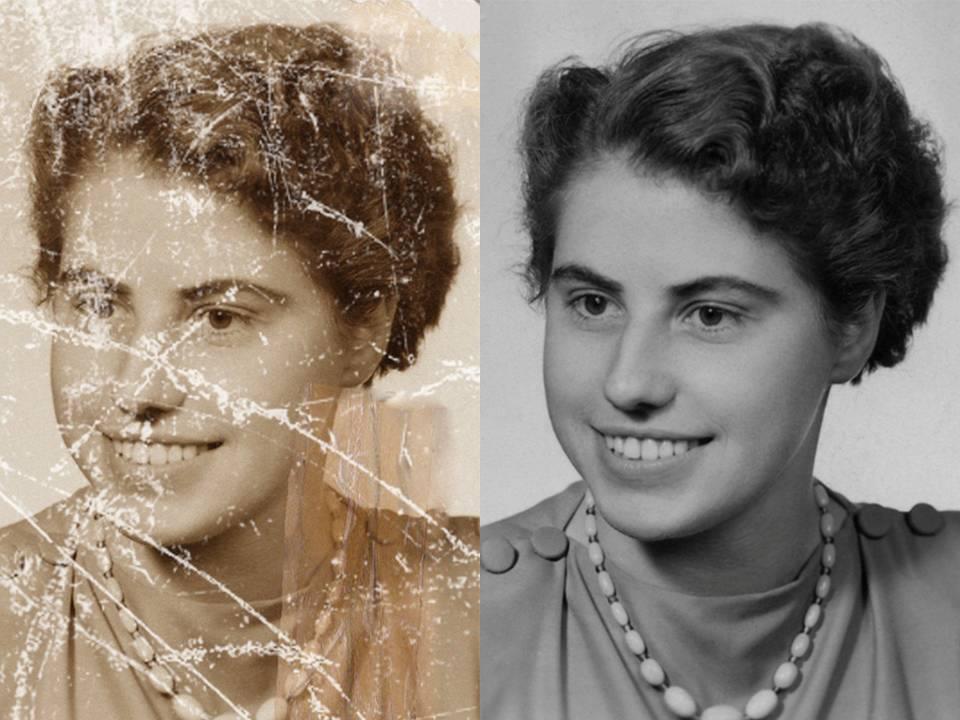

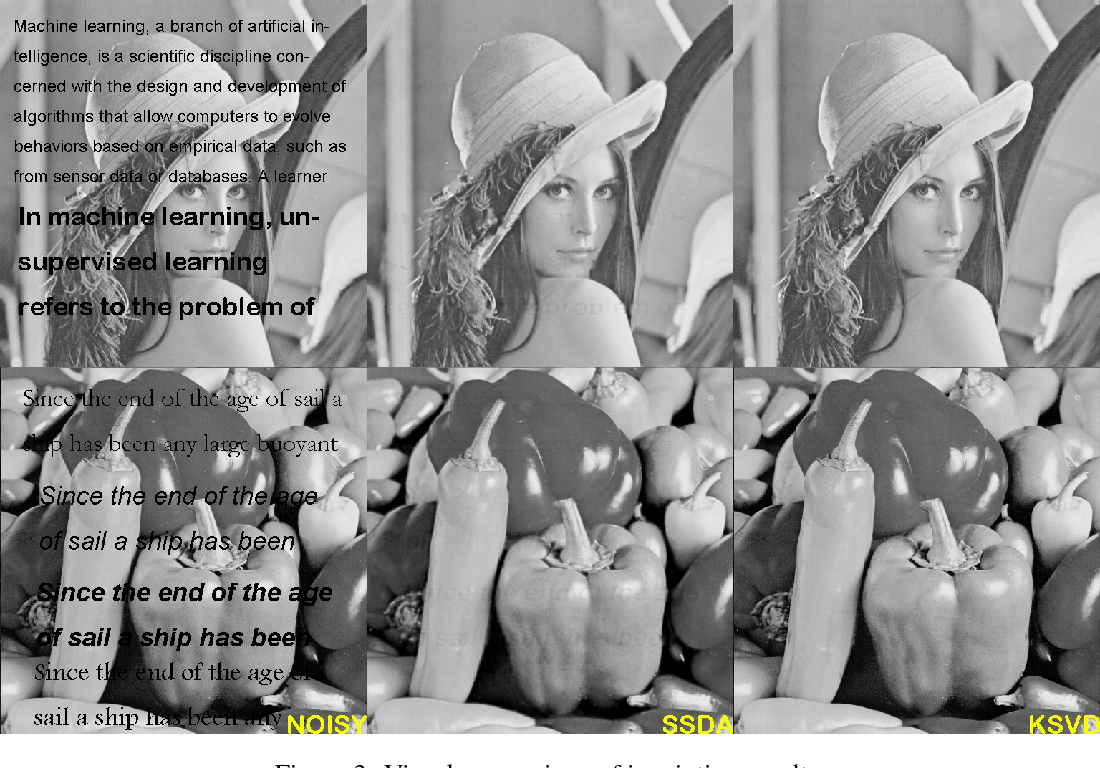

- denoising/restoration/inpainting

- super-resolution

- dimensionality reduction (aka manifold learning)

- http://scikit-learn.org/stable/modules/manifold.html

- t-SNE:

- http://colah.github.io/posts/2014-07-Conv-Nets-Modular/

- https://distill.pub/2016/misread-tsne/ We’ll walk through a series of simple examples to illustrate what t-SNE diagrams can and cannot show.

- attention

- generative networks

- anomaly detection

- sensor fusion https://reality.ai/

- mathematical simulation

- Knowledge transfer and network brain surgery

- Adversarial networks

- Domain adaptation

- How to learn DL (as a person, not as a network)

- Natural Stupidity is more Dangerous than Artificial Intelligence: We collectively become dumber when we relinquish responsibility and accountability to the automation (or A.I.) that furnishes us with cognitive assistance.

- Google and Uber’s Best Practices for Deep Learning

- back to C. Olah's post (scroll to the section 'Unthinkable Thoughts, Incomprehensible Data')

- an anecdote about the transistors vs vacuum tubes debates in the USSR of the early '60s

- The major advancements in Deep Learning in 2015, 2016, 2017

- Common Misconceptions and Lessons Learned

- http://beamandrew.github.io/deeplearning/2017/06/04/deep_learning_works.html (especially the section 'Misconceptions On Why Deep Learning Works')

- http://hyperparameter.space/blog/when-not-to-use-deep-learning/

- Ten Myths About Machine Learning (advanced)

- Deep Misconceptions About Deep Learning

- 6* https://blog.openai.com/

- https://gym.openai.com/envs/#robotics ...

- https://blog.openai.com/universe/ ...

- https://blog.openai.com/ingredients-for-robotics-research/

- https://blog.openai.com/interpretable-machine-learning-through-teaching/

- https://blog.openai.com/preparing-for-malicious-uses-of-ai/

- https://blog.openai.com/generalizing-from-simulation/

- https://blog.openai.com/faster-robot-simulation-in-python/

- 6* https://www.kdnuggets.com/

- 6* http://colah.github.io/

- 5* https://distill.pub/

- 5* https://deepmind.com/blog/

- 5* https://medium.com/intuitionmachine

- 5* https://gumroad.com/l/WRbUs The Deep Learning AI Playbook

- 5* https://people.eecs.berkeley.edu/~svlevine/ Sergey Levine, Robotic Artificial Intelligence and Learning Lab

- 4* https://blog.google/topics/machine-learning/

- 4* Two Minute Papers youtube channel

- 7* http://cs224d.stanford.edu/index.html

- 6* http://scikit-learn.org/stable/documentation.html Classical 'Shallow' ML

- 5* http://www.fast.ai/ http://course.fast.ai/

- 5* Machine Learning for Artists