Sometimes you don't want to load all the data, only smaller pieces to get a better extract performance. Or maybe do a bigger load once a while and then do daily or hourly incremental extracts. Together with discarding extracts (to enforce a reload of fresh and not fixed or finished data), you can create a pretty powerful combination.

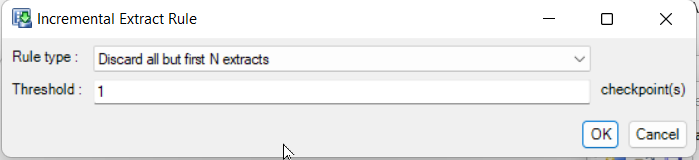

To get this done I have experimented with the different options. To do this I have extracted the training data in Designer and loaded it into a SQLITE database (with DB Browser). Then I have configured the following for bookings, because that should be loaded incremental.

- Custom Query

SELECT *

FROM bookings

WHERE BookingDate > @ERN

AND BookingDate <= CASE @ERN

WHEN - 1

THEN %BDATE%

ELSE strftime('%Y%m%d', 'now')

END

ORDER BY BookingDate ASC

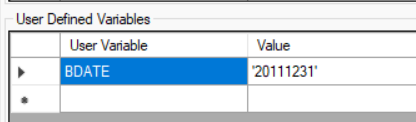

To get this done, we need the environment variable BDATE that defines a max date for the initial bigger load:

In this combination you can have a bigger load (but could also be multiple if you set the query and Incremental Extract Rules clever enough) and then always a load with all dates after this. After a while you can just automatically or manually delete the corresponding extract file, increase the environmental variable (that could be set through PowerShell) and force the system to reload everything until this date. This build can be on a regular schedule maybe once a month. This ensures also better performance in the following build as we already have some sorted data available.

To learn more about sqlite you can visit this help page.: https://help.apteco.com/designer/Content/Topics/Data%20Source/OtherDataSources.htm