I tried the new GPT-NeoX repository for the first time and got it to train a model for a while. This is a report of the installation process that ended up working for me, in case it is helpful to someone else. I didn't try using the DeepSpeed Docker image.

For this test I used a GCE VM. The CPU/GPU settings were as follows:

The boot disk settings were as follows:

I also made sure the network settings allowed me to SSH to the VM from the outside.

By the way, on GCE, I can check my quota for GPUs with the following command line:

gcloud compute regions describe us-east1 --flatten 'quotas[]' --format 'table(quotas.metric,quotas.limit,quotas.usage)' | grep GPU

I logged into the VM via SSH. For the first login, I needed to use the command line, since on first login the VM asks the user to confirm installation of the NVIDIA drivers. But after that, I like to use the SSH remoting feature of VS Code, which involves the following steps:

- In a terminal, I ran `gcloud compute ssh INSTANCE-NAME --dry-run` and copied the SSH command that it printed.

- In VS Code, I installed the Remote-SSH extension. In the Remote Explorer tab of the sidebar, I picked "SSH Targets" from the dropdown. I pressed the plus sign to add a new Remote-SSH connection, and pasted in the SSH command I copied out of the terminal. VS Code automatically set up port forwarding and installed itself on the remote side. It will start you off with just a terminal, but once you have checked out the git repository on the VM, you can select the explorer in the sidebar and open the repository as a remote directory.

As mentioned, on first login the VM asked whether I wanted the NVIDIA drivers installed. I chose to do so.

The boot disk of my VM came with Python 3.7.x preinstalled. This seemed satisfactory, so I did not install a newer version. (Although gpt-neox currently references TensorFlow 2.x, if I ever want to use TensorFlow 1.x, it is apparently only compatible with Python 3.7.x and earlier.)

The Triton component is indirectly referenced in the Python requirements, and it requires the LLVM development package to be installed. After trying various versions of LLVM, it seems that only version 9 works. This package is apparently not available from the default Debian package repository. I installed it from the LLVM apt site with the following steps:

- `curl https://apt.llvm.org/llvm.sh > /tmp/llvm.sh`
- `chmod +x /tmp/llvm.sh`
- `sudo /tmp/llvm.sh 9`

This installation step generated some errors concerning ldconfig finding some NVIDIA libraries installed directly rather than via symbolic links. I assume this is because the NVIDIA driver installer does not follow some convention assumed by ldconfig. I presume these errors are harmless.
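To check that the LLVM 9 tools actually ended up on the PATH, something like the following sketch may help. The function name `find_llvm_config` is made up for this example, and it assumes the apt.llvm.org packages install the binary as `llvm-config-9`, which I have not verified against every release.

```shell
# Look for the llvm-config binary for a given major version.
# Assumption: the packages install it as llvm-config-<version>.
find_llvm_config() {
    version="$1"
    if command -v "llvm-config-$version" >/dev/null 2>&1; then
        # Print the full path of the binary that was found.
        command -v "llvm-config-$version"
    else
        echo "llvm-config-$version not found on PATH" >&2
        return 1
    fi
}

# Print the installed LLVM version if the binary is present.
if path="$(find_llvm_config 9)"; then
    "$path" --version
fi
```

If nothing is printed except the "not found" message, the install script probably did not complete.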

Next, I cloned the GPT-NeoX repository from GitHub:

- `git clone https://github.com/eleutherai/gpt-neox`
- `cd gpt-neox`

Then I created a Python virtual environment. This keeps the installed Python libraries local to the repository working directory rather than polluting the VM's global Python installation. It also makes it easy to cleanly remove and reinstall the Python libraries if something seems to be wrong. To create the virtual environment, I ran the following commands:

- `python -m venv .env`
- `source .env/bin/activate`
- `grep -q ^.env/ .gitignore || echo .env/ >> .gitignore`
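Since every new terminal needs the environment activated again, a small check like the one below can save some confusion. It relies on the `VIRTUAL_ENV` variable that the activate script sets; the function name `venv_status` is just an illustrative choice.

```shell
# Report whether a Python virtual environment is active in this shell.
# The venv activate script exports VIRTUAL_ENV; it is unset otherwise.
venv_status() {
    if [ -n "${VIRTUAL_ENV:-}" ]; then
        echo "active: $VIRTUAL_ENV"
    else
        echo "inactive"
    fi
}

venv_status
```

If this prints "inactive", run `source .env/bin/activate` again before using pip.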

Next, I installed VS Code's Python support on the VM, by switching to the Extensions tab of the sidebar, and pressing the 'Install in SSH' button next to the Python extension.

At this point, VS Code recognized that a Python virtual environment was present in the working directory (possibly I had to open a Python source file first), and started up its Python support using the virtual environment's Python interpreter. This should make VS Code's Python code navigation and linting functionality work properly. It is also helpful to add the following line to VS Code's settings on the remote side, to prevent it from exhausting the Linux kernel's limit on the number of files that can be watched (because the .env directory hierarchy will end up having so many files in it).

Next, I installed the Python requirements. I found that I had to install PyTorch separately first, or the Triton installation would fail. Possibly Triton is not packaged particularly well for Python.

- `pip install 'torch>=1.6'`
- `pip install -r requirements.txt`
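To confirm that PyTorch is importable from the virtual environment and can see the GPU, a check along these lines should work. The function name `check_torch` is made up for this example, and it assumes `python3` resolves to the virtual environment's interpreter.

```shell
# Check that torch is importable and report whether CUDA is visible.
# Assumption: python3 is the interpreter from the active virtual environment.
check_torch() {
    python3 -c '
import importlib.util, sys
if importlib.util.find_spec("torch") is None:
    print("torch is not installed")
    sys.exit(1)
import torch
print("torch", torch.__version__, "CUDA available:", torch.cuda.is_available())
'
}

check_torch || echo "torch check failed; try installing torch before the other requirements"
```

If CUDA shows up as unavailable, the NVIDIA driver installation from the first login is the first thing I would recheck.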

I started a training run using the example command from the repository readme:

deepspeed train_enwik8.py --deepspeed --deepspeed_config ./configs/base_deepspeed.json

This started printing a bunch of progress information into the terminal.

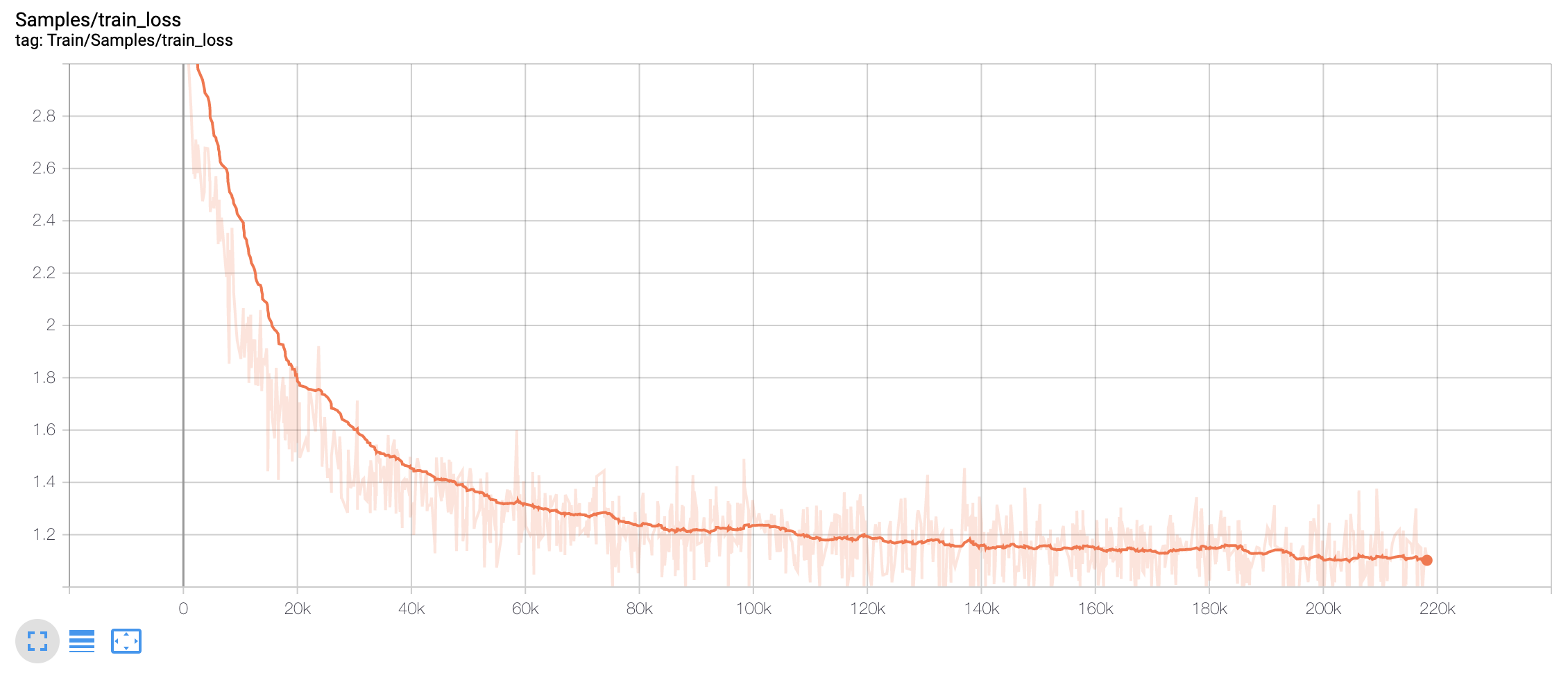

In a second terminal, I started tensorboard with:

tensorboard --logdir logs

The VS Code remoting support detected that something was now listening on port 6006 on the remote side, and offered to forward it through the SSH connection and open that page in my browser, which I accepted. I could see the progress of the training, but for some reason it only showed the training set loss, not the validation set loss, even though validation loss was being printed in my terminal.

One interesting observation was the following message printed to the terminal. I don't know what it means.

[2021-01-05 04:33:54,160] [INFO] [stage2.py:1361:step] [deepscale] OVERFLOW! Rank 0 Skipping step. Attempted loss scale: 65536.0, reducing to 32768.0
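My best guess is that this is dynamic loss scaling from mixed-precision (fp16) training: when the gradients overflow, the optimizer skips that step and retries later with a smaller loss scale. The arithmetic in the message can be sketched as below. The function name `next_loss_scale`, the halving factor, and the floor of 1 are assumptions inferred from the numbers in the log line, not DeepSpeed's actual code.

```shell
# Compute the loss scale to use after an overflow.
# Assumption: the scale is halved and never drops below 1.
next_loss_scale() {
    scale="$1"
    next=$((scale / 2))
    if [ "$next" -lt 1 ]; then
        next=1
    fi
    echo "$next"
}

# The log line above goes from 65536 to 32768.
next_loss_scale 65536
```

If that reading is right, an occasional message like this early in training is expected rather than a sign of a problem.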