This is a quick Python script I wrote to download Humble Bundle books in batch. I bought the amazing Machine Learning by O'Reilly bundle, which had 15 books to download in 3 different file formats each, so I put together a quick script to download all of them at once.

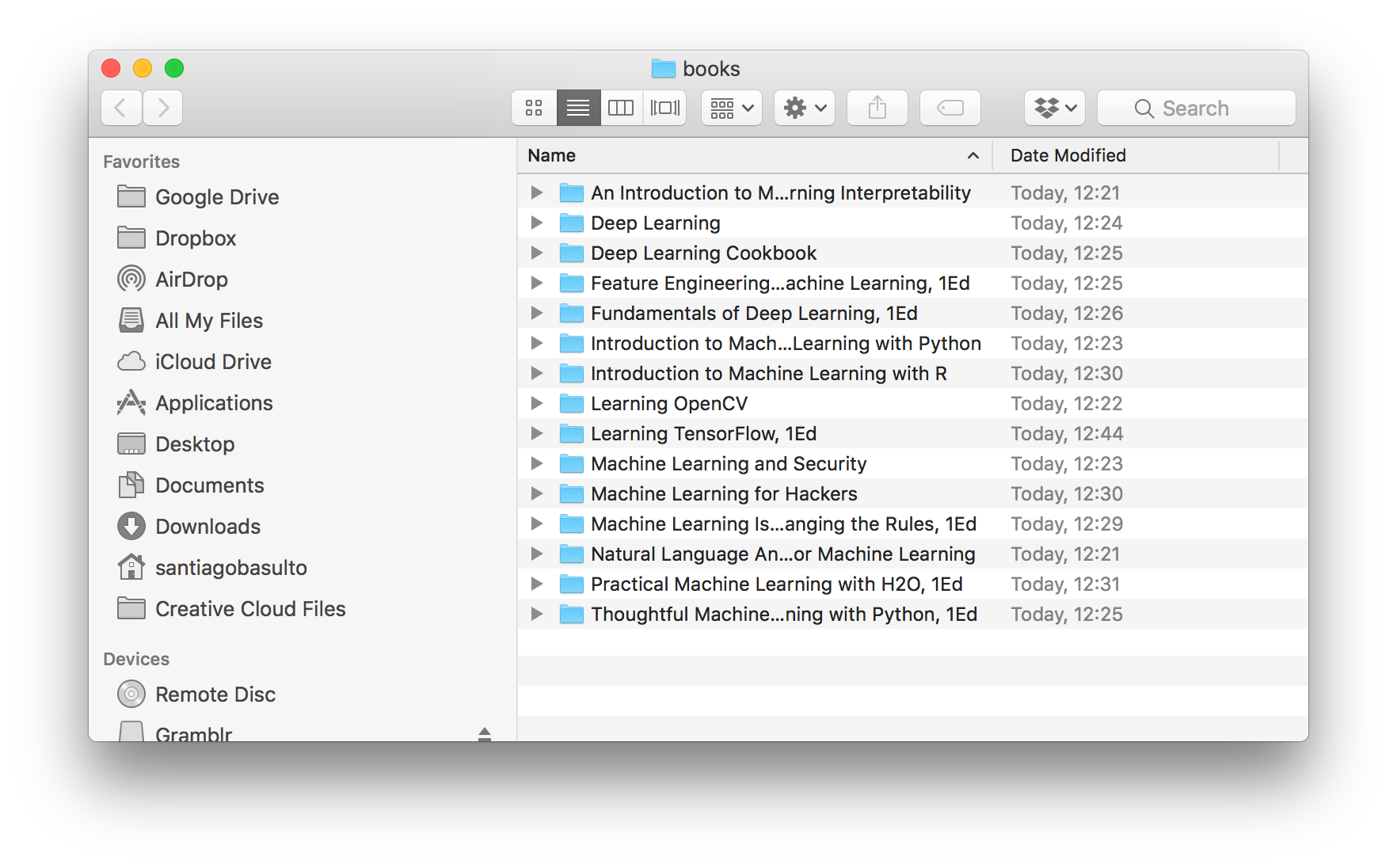

(Final Result: books downloaded)

It's a simple script; the only tricky part is extracting the generated HTML from Humble Bundle. Here is a step-by-step guide:

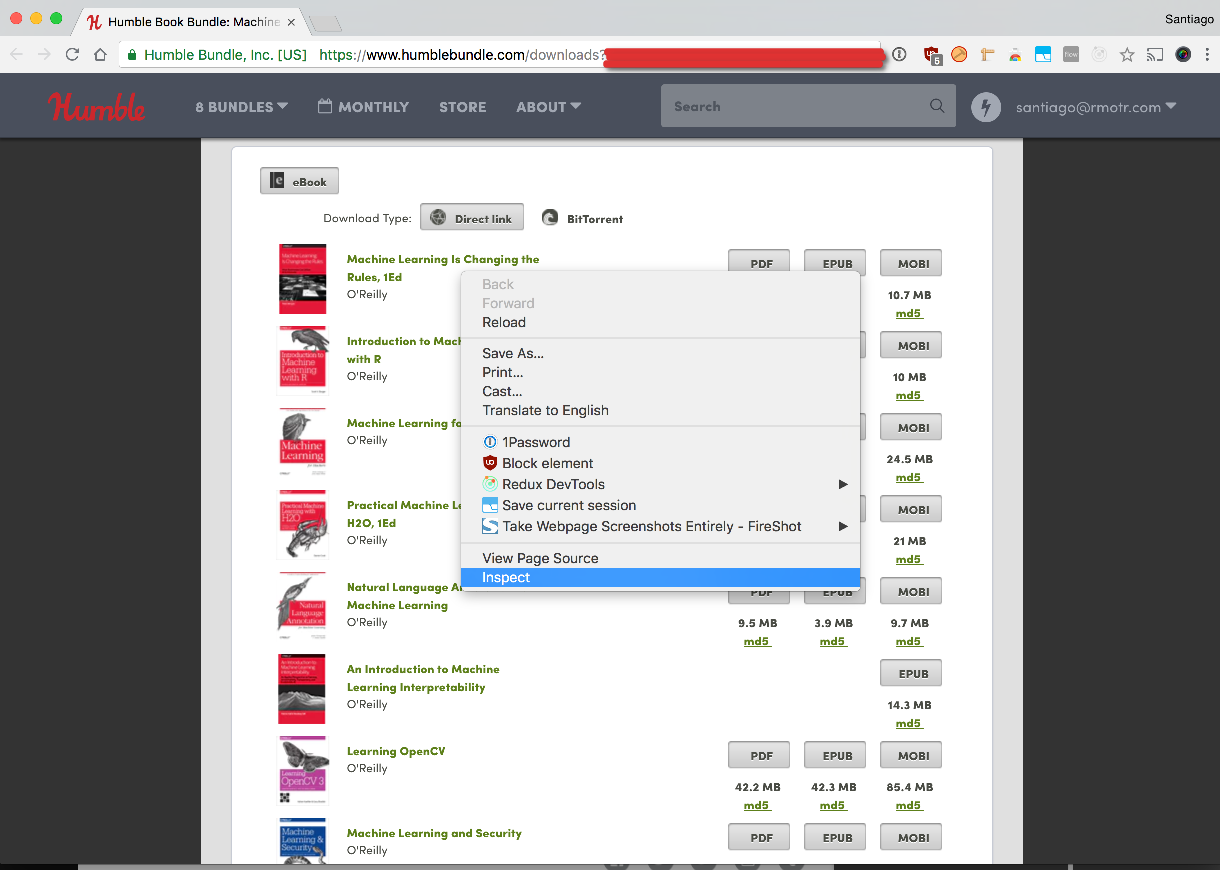

After your purchase, open the download page:

This is how mine looks like

I'm using Chrome, but Firefox also works for this. Right-click anywhere on the page and select "Inspect" ("Inspect Element" in some browsers):

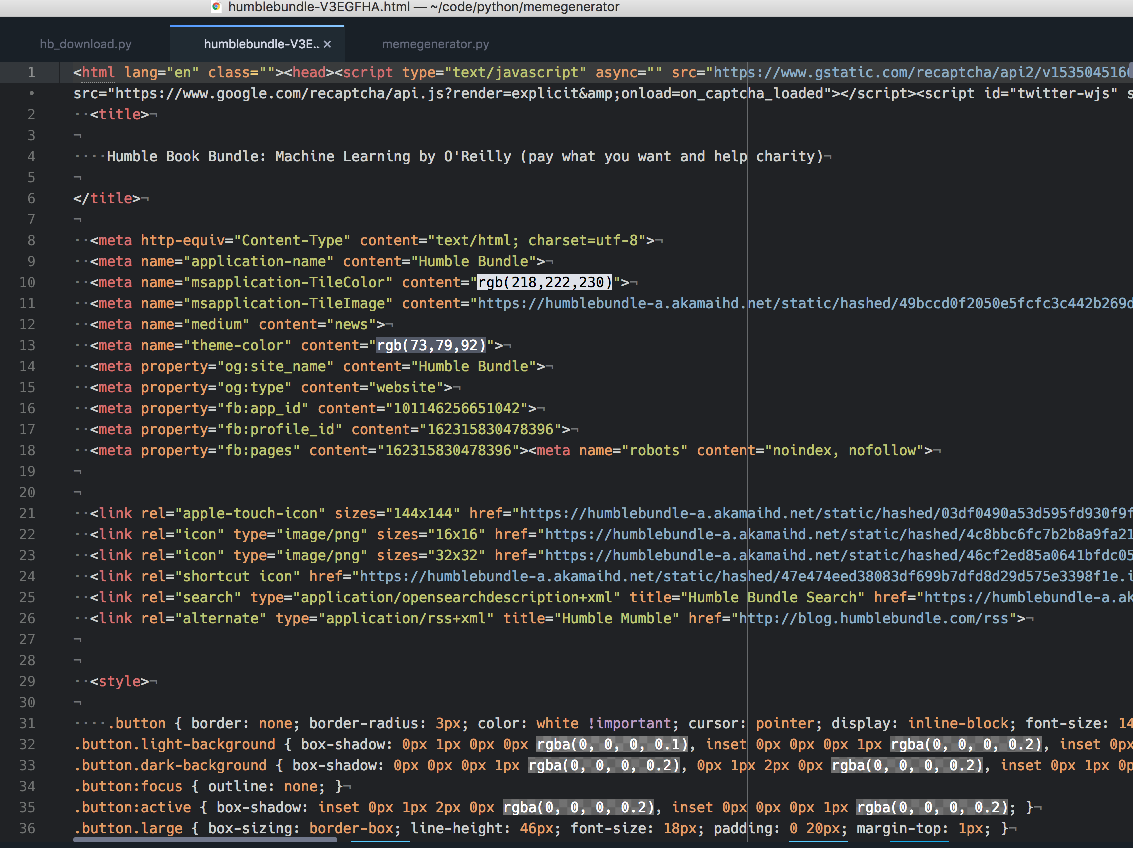

Once you click Inspect, the developer tools window should open.

Scroll to the top of the Elements panel until you see the root `<html>` element. Right-click it and choose Copy > Copy element.

Create a new file in your favorite editor and paste in the contents you just copied.

Give the file a memorable name ending in `.html`, since you'll pass it to the script in the next step. For example: `humble_bundle_ml.html`.
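The script's own parsing code isn't shown here, but as a rough, dependency-free sketch of what happens with that saved file, you can scan it for download links. This uses the standard library's `html.parser` instead of BeautifulSoup, and it assumes (unverified) that each download URL's path ends in `.epub`, `.pdf`, or `.mobi`. `LinkCollector` and `find_book_links` are hypothetical names.

```python
from html.parser import HTMLParser
from pathlib import PurePosixPath
from urllib.parse import urlparse

# Extensions matching the script's --epub / --pdf / --mobi flags.
FORMATS = frozenset({".epub", ".pdf", ".mobi"})


class LinkCollector(HTMLParser):
    """Collect the href attribute of every <a> tag in a document."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def find_book_links(html, wanted=FORMATS):
    """Return the links whose URL path ends in one of the wanted extensions.

    ASSUMPTION: each download URL's path ends with the file extension,
    e.g. https://dl.example.com/some_book.pdf?token=... (hypothetical URL).
    """
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    links = []
    for href in collector.hrefs:
        # Ignore the query string; look only at the path's extension.
        suffix = PurePosixPath(urlparse(href).path).suffix.lower()
        if suffix in wanted:
            links.append(href)
    return links
```

Under that assumption, `find_book_links(open("humble_bundle_ml.html", encoding="utf-8").read(), {".pdf"})` would return only the PDF links.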

Important: this script requires Python 3.

Now you're ready to download those books. In your terminal, create a virtualenv and install the dependencies:

```
$ python3 -m venv venv
$ source venv/bin/activate
$ pip install beautifulsoup4 requests
```

Now you can invoke the actual command:

```
$ python hb_download.py humble_bundle_ml.html --epub --pdf
```

By default it'll download the books into a directory named `books/`. You can change that with the `-d` option.
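As a hedged sketch of a single download (not the script's actual code), here is one way to stream a file into `books/`, or whatever `-d` points to, with requests. `download_file` is a hypothetical helper that takes any object behaving like a `requests.Session`. It wraps the response in `contextlib.closing` so it doesn't depend on the response supporting `with` directly (which may not hold on older requests releases), and it calls `raise_for_status()` so a `403 Forbidden` raises an exception instead of leaving a bogus file behind.

```python
import os
from contextlib import closing
from urllib.parse import urlparse


def download_file(session, url, dest_dir="books", chunk_size=64 * 1024):
    """Stream one file from `url` into `dest_dir` and return the saved path.

    `session` is assumed to behave like a requests.Session (hypothetical
    helper for illustration, not the script's actual code).
    """
    # Name the file after the last path segment, ignoring any query string.
    filename = os.path.basename(urlparse(url).path)
    os.makedirs(dest_dir, exist_ok=True)
    path = os.path.join(dest_dir, filename)

    # closing() calls resp.close() on exit, whether or not the response
    # object supports the `with` statement itself.
    with closing(session.get(url, stream=True)) as resp:
        # Raise on 403 Forbidden and other HTTP errors before creating the file.
        resp.raise_for_status()
        with open(path, "wb") as out:
            for chunk in resp.iter_content(chunk_size=chunk_size):
                if chunk:  # skip empty keep-alive chunks
                    out.write(chunk)
    return path
```

With requests installed, a call would look like `download_file(requests.Session(), url, "books/Some Book")`; the per-book subdirectory name there is only an illustration.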

```
❯ python hb_download.py --help
usage: hb_download.py [-h] [-d DESTINATION_DIR] [--epub] [--pdf] [--mobi]
                      html_file

Download

positional arguments:
  html_file             HTML file to download books from

optional arguments:
  -h, --help            show this help message and exit
  -d DESTINATION_DIR, --destination-dir DESTINATION_DIR
                        Directory where books will be saved
  --epub
  --pdf
  --mobi
```
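For readers curious how that interface maps onto Python's `argparse`, here is a hypothetical reconstruction that defines equivalent options. The script's source isn't reproduced here, so this is a sketch, not the author's code. Assumptions: the default destination is `books` as stated above, and each format flag is a plain `store_true` switch; `build_parser` and `selected_formats` are illustrative names.

```python
import argparse


def build_parser():
    """Hypothetical parser matching the --help output above."""
    parser = argparse.ArgumentParser(prog="hb_download.py", description="Download")
    parser.add_argument("html_file", help="HTML file to download books from")
    parser.add_argument(
        "-d",
        "--destination-dir",
        default="books",  # assumed from the documented default directory
        help="Directory where books will be saved",
    )
    # Each format flag is an on/off switch (assumption).
    parser.add_argument("--epub", action="store_true")
    parser.add_argument("--pdf", action="store_true")
    parser.add_argument("--mobi", action="store_true")
    return parser


def selected_formats(args):
    """Turn the boolean format flags into a list of format names."""
    return [fmt for fmt in ("epub", "pdf", "mobi") if getattr(args, fmt)]
```

With this parser, `build_parser().parse_args(["humble_bundle_ml.html", "--epub", "--pdf"])` gives `destination_dir == "books"`, and `selected_formats` returns `["epub", "pdf"]`. On Python 3.10 and later, argparse heads the second help section "options:" rather than "optional arguments:".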

Dear Santiago,

I would really like to get this to work; it seems like a great project with a pretty simple interface. However, I do run into problems, and the script carps with the following error:

Also, I got a warning about BeautifulSoup falling back to a default parser (lxml), but that can be avoided by passing "lxml" as a second argument to the BeautifulSoup() call on line 10.

I know quite a bit about programming and Python, but I am not at all proficient in BeautifulSoup. For instance, I do not know exactly how your script handles logging in to Humble Bundle.

I have made the changes suggested by @Susensio and @GhostofGoes, but they did not seem to change anything in this case.

All help is most welcome.

EDIT:

Changing `with requests.get(url, stream=True) as resp:` (around line 50) to `resp = requests.get(url, stream=True)` and re-indenting the following line fixed the above problem for me. However, at this point all downloads are zero-size (empty) files: the subdirectories for the books are created, and the files too, but no content is actually downloaded. I suspect this has to do with login, but I am not sure how this script performs the login. Using the URLs directly with e.g. `wget` just results in `ERROR 403: Forbidden`, so presumably the login cookie needs to be copied over from the Chrome session as well and somehow included in the requests session.

PS: If you at some point figure out how to check the md5sums, that would be a great addition too.
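On the md5sum PS: the check itself is straightforward with the standard library's `hashlib`, assuming you can obtain the expected checksum for each file (for instance, if the download page lists one; fetching it isn't covered here). A minimal sketch with hypothetical helper names `md5sum` and `verify_md5`:

```python
import hashlib


def md5sum(path, chunk_size=64 * 1024):
    """Compute the hex MD5 digest of a file, reading it in fixed-size chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        # iter() with a sentinel keeps calling f.read() until it returns b"".
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_md5(path, expected):
    """Return True if the file's MD5 matches `expected` (case/whitespace-insensitive)."""
    return md5sum(path) == expected.strip().lower()
```

Reading in chunks keeps memory use flat even for large PDFs, so the check can run right after each download.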