This post describes the work performed by Sofia Strukova during Google Summer of Code (GSoC) 2021, as part of the ML4SCI organization. It was performed under the supervision of Patrick Peplowski (JHUAPL), Sergei Gleyzer (University of Alabama) and Jason Terry (University of Georgia).

The code for the work described here is available in the following open-source repository: link

The experiments presented here used the following datasets.

For the Moon:

- albedo map

- LPFe (iron map)

- LPK (potassium map)

- LPTh (thorium map)

- LPTi (titanium map).

For Mercury:

- albedo map

- Al to Si element ratio

- Ca to Si element ratio

- Fe to Si element ratio

- Mg to Si element ratio

- S to Si element ratio.

The goal of the project is to use machine learning techniques to identify relationships between mapped planetary datasets, both to provide a deeper understanding of planetary surfaces and to gain predictive power for planetary surfaces with incomplete datasets.

Planetary surfaces can be observed at many electromagnetic wavelengths (e.g., radar, infrared, optical, ultraviolet, x-ray, gamma-ray). Each wavelength provides unique information about the surface's chemistry, mineralogy, and history. Yet this information is not entirely independent. For example, iron, which is mapped with x-rays and gamma rays, is highly correlated with optical albedo on the Moon. Knowing this, we can produce high-spatial-resolution predictive maps of iron from optical data. On other planets, the relationships between the observations are less well known, and some datasets are missing. We seek to study planetary surfaces by taking as input maps of the surface at all available wavelengths, in order to discover the relationships between the measurements and to predict chemistry that is not directly sampled by observations. This offers a way to study the geologic history of a planet with existing data, which is valuable given how rarely planetary spacecraft provide opportunities for new measurements.

Before the official start of the project, I explored the relationship between high iron content and dark regions on the Moon. I built a regression model that predicts the brightness of each pixel of the lunar albedo map from the Lunar Prospector chemical composition data. The model performed well, with an accuracy of 78%. This was expected from the preliminary correlation analysis, since albedo and elemental composition are highly correlated on the Moon. Second, I attempted to build a predictive model of albedo versus composition. This case turned out to be more complex because the elemental maps have coverage gaps. Moreover, the correlations between albedo and the chemical elements are very low, so predicting one from the other performed poorly.
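The report does not name the regression model that was used. As a minimal sketch of the pixel-wise setup, assuming a plain linear least-squares fit as a stand-in and hypothetical function names (`fit_pixelwise_albedo_model`, `predict_albedo`), each pixel becomes one sample whose features are the element abundances at that location. Pixels with missing values (coverage gaps, encoded here as NaN) are skipped during fitting and come out as NaN in the prediction:

```python
import numpy as np


def fit_pixelwise_albedo_model(element_maps, albedo):
    """Fit albedo ~ c0 + sum_k c_k * element_k by ordinary least squares.

    element_maps: array (n_elements, H, W), e.g. Fe, K, Th and Ti maps.
    albedo: array (H, W). Pixels that are NaN in any input are ignored.
    Returns the coefficients [c0, c1, ..., c_n].
    """
    # Each pixel becomes one sample: rows = pixels, columns = elements.
    X = element_maps.reshape(element_maps.shape[0], -1).T
    y = albedo.ravel()
    valid = np.isfinite(y) & np.all(np.isfinite(X), axis=1)
    # A leading column of ones provides the intercept c0.
    A = np.column_stack([np.ones(valid.sum()), X[valid]])
    coef, *_ = np.linalg.lstsq(A, y[valid], rcond=None)
    return coef


def predict_albedo(element_maps, coef):
    """Predict an albedo image; pixels with missing inputs come out as NaN."""
    X = element_maps.reshape(element_maps.shape[0], -1).T
    return (coef[0] + X @ coef[1:]).reshape(element_maps.shape[1:])


# Synthetic example (not project data): albedo is a known linear mix of maps.
rng = np.random.default_rng(0)
maps = rng.random((4, 32, 64))
albedo = 0.5 - 0.3 * maps[0] + 0.1 * maps[3]
maps[0, :5, :5] = np.nan  # simulate a coverage gap in one element map
coef = fit_pixelwise_albedo_model(maps, albedo)
pred = predict_albedo(maps, coef)
```

Swapping the least-squares fit for another regressor keeps the same flatten, fit, and reshape structure.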

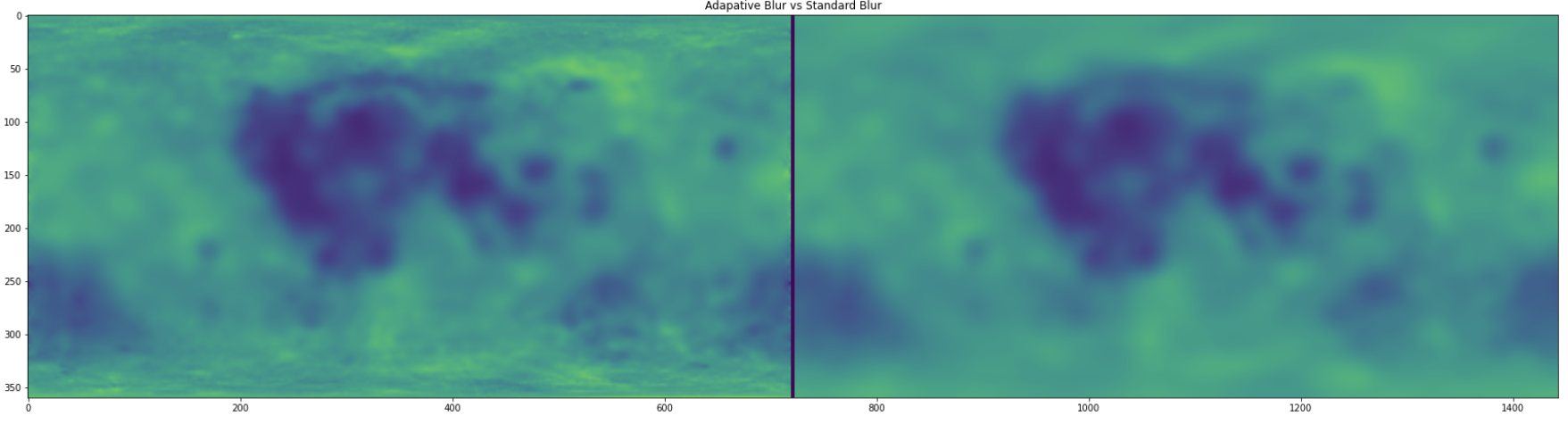

We ran the experiments in two settings, with and without blurring, for the following reason. In our experiment, we compare two different classes of data. The albedo map is derived from very high spatial resolution laser measurements (~10s of meters), while the element maps were derived from gamma-ray measurements with an intrinsic spatial resolution of ~45 km. We therefore smooth the albedo maps to a resolution comparable to that of the element maps, which removes the spatial resolution difference and makes the data more comparable for training and prediction. Smoothing also lets us build a "resolution-tolerant" model that minimizes potential artifacts arising from resolution effects, which matters because the ultimate goal is to identify any such resolution artifacts.
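A minimal NumPy sketch of the smoothing step is given below: a separable Gaussian blur with mirrored borders, plus a hypothetical helper `sigma_for_resolution` that converts a target resolution into a sigma in pixels. That helper assumes the ~45 km figure is treated as the full width at half maximum (FWHM = 2√(2 ln 2)·σ ≈ 2.355σ) and that the pixel scale of the map is known. The project itself chose sigma empirically by comparing configurations; an OpenCV call such as `cv2.GaussianBlur(img, (0, 0), 9)` derives the kernel size from sigma when `ksize` is `(0, 0)`, and results from this sketch may differ slightly from OpenCV's because of where the kernel is truncated.

```python
import numpy as np


def gaussian_kernel_1d(sigma, truncate=4.0):
    """Normalized 1-D Gaussian kernel cut off at `truncate` standard deviations."""
    radius = int(np.ceil(truncate * sigma))
    x = np.arange(-radius, radius + 1, dtype=float)
    k = np.exp(-0.5 * (x / sigma) ** 2)
    return k / k.sum()


def gaussian_blur(img, sigma, truncate=4.0):
    """Separable 2-D Gaussian blur; borders are padded by mirror reflection."""
    k = gaussian_kernel_1d(sigma, truncate)
    r = len(k) // 2
    padded = np.pad(np.asarray(img, dtype=float), r, mode="reflect")
    # Blur every row, then every column; 'valid' mode trims the padding back off.
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)


def sigma_for_resolution(fwhm_km, pixel_km):
    """Sigma in pixels of a Gaussian whose FWHM equals `fwhm_km`."""
    # FWHM = 2 * sqrt(2 * ln 2) * sigma, roughly 2.355 * sigma.
    return fwhm_km / (2.0 * np.sqrt(2.0 * np.log(2.0)) * pixel_km)


# Synthetic check: a single bright pixel spreads out but keeps its total value.
impulse = np.zeros((64, 64))
impulse[32, 32] = 1.0
blurred = gaussian_blur(impulse, sigma=2.0)
```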

Figure 1 - Original (left) and blurred (right) images of the Moon

We also implemented an adaptive Gaussian blur and compared it against standard Gaussian blurring. Choosing the variance (or covariance matrix) of the Gaussian filter was challenging, since the right choice depends on the application. Figures 2 and 3 show the difference between the adaptive and the standard filter, and a map of the sigma applied to each pixel of the image.
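The report does not describe how the per-pixel sigma was chosen, so the following is only a sketch of the general idea: a direct spatially varying Gaussian blur in which each output pixel uses its own standard deviation from a `sigma_map`. The function name `adaptive_gaussian_blur` and the row-dependent example sigma map are hypothetical, and the direct loop costs O(H·W·r²), so it is meant for illustration rather than full-resolution maps.

```python
import numpy as np


def adaptive_gaussian_blur(img, sigma_map, truncate=3.0):
    """Blur `img` with a Gaussian whose sigma varies per output pixel.

    Each output pixel is the normalized Gaussian-weighted mean of its
    neighborhood, using sigma_map[i, j] as the standard deviation.
    Pixels with sigma <= 0 are copied unchanged. Near the borders, the
    weights are renormalized over the part of the window inside the image.
    """
    img = np.asarray(img, dtype=float)
    out = img.copy()
    H, W = img.shape
    for i in range(H):
        for j in range(W):
            s = sigma_map[i, j]
            if s <= 0:
                continue
            r = int(np.ceil(truncate * s))
            i0, i1 = max(0, i - r), min(H, i + r + 1)
            j0, j1 = max(0, j - r), min(W, j + r + 1)
            yy, xx = np.mgrid[i0:i1, j0:j1]
            w = np.exp(-((yy - i) ** 2 + (xx - j) ** 2) / (2.0 * s ** 2))
            out[i, j] = np.sum(w * img[i0:i1, j0:j1]) / np.sum(w)
    return out


rng = np.random.default_rng(0)
img = rng.random((20, 30))
# Hypothetical sigma map: no blur in the first row, up to sigma = 2 in the last.
sigma_map = np.repeat(np.linspace(0.0, 2.0, 20)[:, None], 30, axis=1)
out = adaptive_gaussian_blur(img, sigma_map)
```

A faster variant would precompute blurred copies at a few sigma levels and select or interpolate between them per pixel.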

Figure 2 - The difference between the adaptive and the standard blurring

Figure 3 - Maps of the amount of sigma applied to each pixel of the image

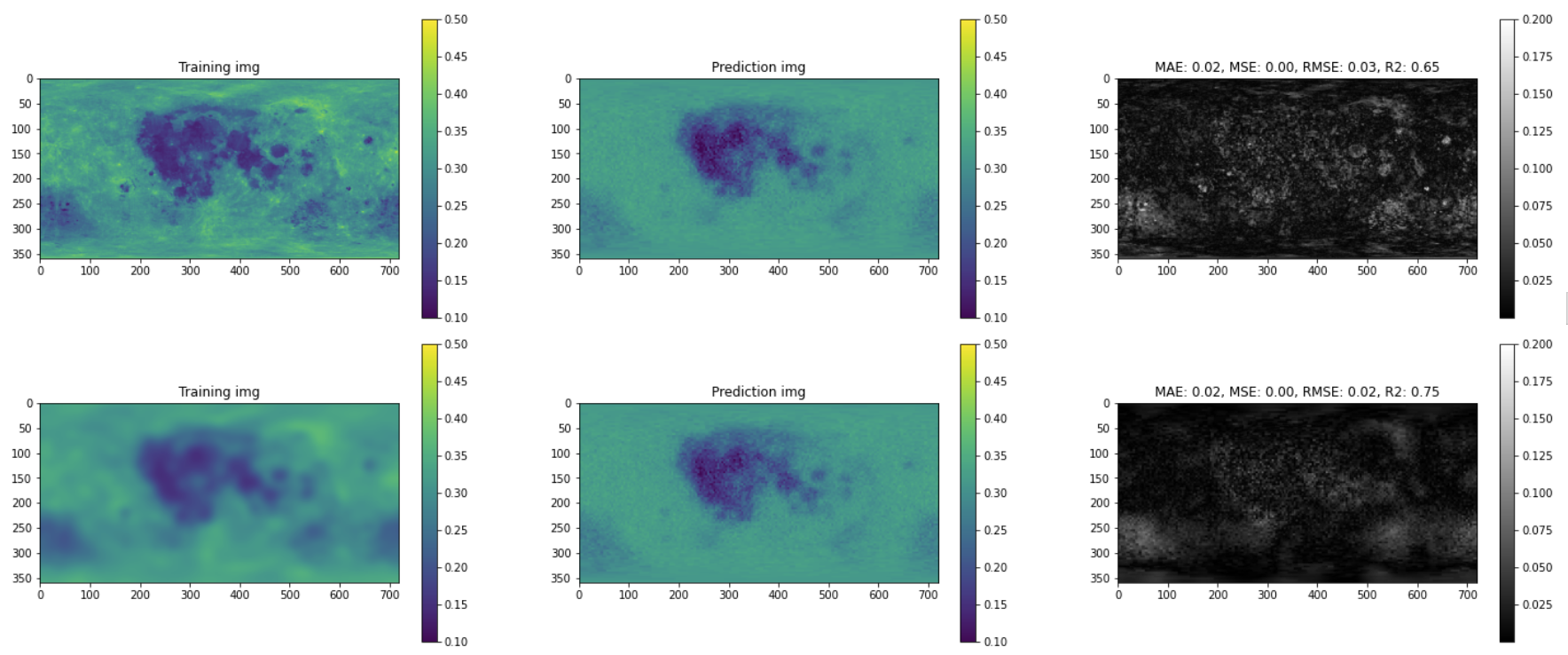

We also compared the R2 score and the root-mean-square error (RMSE) for each blurring configuration, as shown in Figure 4.

Figure 4 - R2 score and root-mean-square error for each blurring configuration
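For reference, the two metrics in Figure 4 can be computed as follows. This is a small NumPy sketch with hypothetical function names that ignores NaN pixels (for example, coverage gaps); for finite inputs, `sklearn.metrics.r2_score` gives the same R2 value.

```python
import numpy as np


def _finite_pairs(y_true, y_pred):
    """Flatten both images and keep only pixels that are finite in both."""
    y_true = np.asarray(y_true, dtype=float).ravel()
    y_pred = np.asarray(y_pred, dtype=float).ravel()
    ok = np.isfinite(y_true) & np.isfinite(y_pred)
    return y_true[ok], y_pred[ok]


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    t, p = _finite_pairs(y_true, y_pred)
    ss_res = np.sum((t - p) ** 2)
    ss_tot = np.sum((t - t.mean()) ** 2)
    return 1.0 - ss_res / ss_tot


def rmse(y_true, y_pred):
    """Root-mean-square error over the valid pixels."""
    t, p = _finite_pairs(y_true, y_pred)
    return float(np.sqrt(np.mean((t - p) ** 2)))


# Toy example: one of four pixels is off by 1.
score = r2([1, 2, 3, 4], [1, 2, 3, 5])
err = rmse([1, 2, 3, 4], [1, 2, 3, 5])
```

In the toy example, SS_res = 1 and SS_tot = 5, so R2 = 1 - 1/5 = 0.8, and RMSE = sqrt(1/4) = 0.5.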

Based on these results, we chose sigma = 9 and kSize = (0,0) as the best configuration for subsequent experiments. We then used the ML model to generate predicted images for both the original Moon and its blurred version.

Figure 5 - Predicted images for the original and blurred Moon

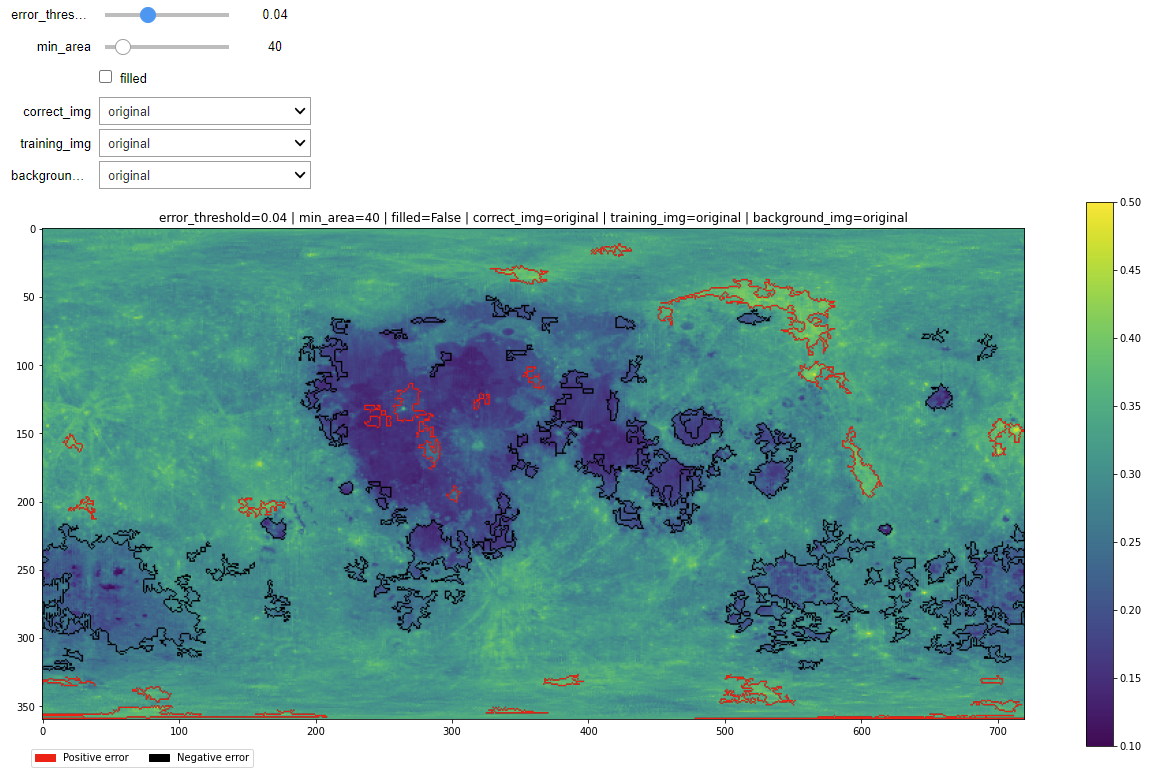

Finally, we created an interactive analyzer that displays contours around regions of pixels whose error value is higher than the error threshold (mask_threshold). Contours are red where the error is positive and black where it is negative. The error is computed as (correct_img - predicted_img), where predicted_img is generated by the model after it has been trained on training_img. The analyzer has the following parameters:

- mask_threshold: Only consider those pixels whose prediction error is higher than this.

- min_area: Discard contours whose area is smaller than this.

- filled: Whether to fill the contours.

- correct_img: Image used as the ground truth when computing the error.

- training_img: Image used to train the ML model.

- background_img: Image displayed in the background. If set to training_img, it displays the image selected in the "training_img" parameter.
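The core of such an analyzer can be sketched without any plotting: threshold the error image and group the selected pixels into connected regions. The sketch below assumes the threshold is applied to the error magnitude (so that both positive, red, and negative, black, regions are found), measures `min_area` in pixels, and uses 4-connectivity. The function name `error_regions` is hypothetical, and drawing the region outlines (for instance with OpenCV or matplotlib) is left out.

```python
import numpy as np
from collections import deque


def error_regions(correct_img, predicted_img, mask_threshold, min_area):
    """Find connected regions where |correct - predicted| > mask_threshold.

    Returns a list of (sign, pixels) tuples: sign is +1 for positive error
    (red contours) and -1 for negative error (black contours); pixels is a
    list of (row, col). Regions with fewer than min_area pixels are dropped.
    """
    error = np.asarray(correct_img, float) - np.asarray(predicted_img, float)
    H, W = error.shape
    visited = np.zeros((H, W), dtype=bool)
    regions = []
    for sign in (1, -1):
        # Pixels whose signed error exceeds the threshold.
        mask = sign * error > mask_threshold
        for si in range(H):
            for sj in range(W):
                if not mask[si, sj] or visited[si, sj]:
                    continue
                # Breadth-first flood fill over 4-connected neighbors.
                queue = deque([(si, sj)])
                visited[si, sj] = True
                pixels = []
                while queue:
                    i, j = queue.popleft()
                    pixels.append((i, j))
                    for di, dj in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                        ni, nj = i + di, j + dj
                        if 0 <= ni < H and 0 <= nj < W and mask[ni, nj] and not visited[ni, nj]:
                            visited[ni, nj] = True
                            queue.append((ni, nj))
                if len(pixels) >= min_area:
                    regions.append((sign, pixels))
    return regions


# Synthetic example: an under-prediction block, an over-prediction block, and noise.
correct = np.zeros((10, 10))
predicted = np.zeros((10, 10))
predicted[1:4, 1:4] = -0.1  # error +0.1 over 9 pixels
predicted[6:8, 6:9] = 0.1   # error -0.1 over 6 pixels
predicted[0, 9] = -0.1      # isolated pixel, below min_area
regions = error_regions(correct, predicted, mask_threshold=0.04, min_area=5)
```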

Figure 6 - An example of the interactive analyzer (error threshold = 0.04, minimum area = 40, correct image = original, training image = original)

Figure 7 - An example of the interactive analyzer (error threshold = 0.04, minimum area = 100, correct image = blurred, training image = blurred)

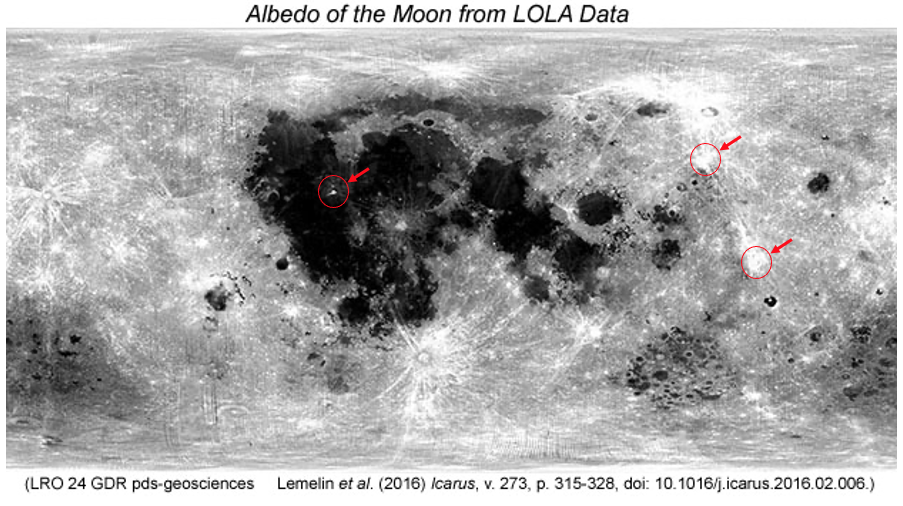

Optical maturity measures how long material has been sitting on the lunar surface, exposed to the harsh space environment. Among other effects, space exposure darkens material, so bright material may simply be young. However, optical albedo and optical maturity do not have a one-to-one relationship, because a lower iron content also makes material brighter.

The Lunar Orbiter Laser Altimeter (LOLA) is an instrument on the payload of NASA's Lunar Reconnaissance Orbiter spacecraft (LRO) [1]. It was designed to measure the shape of the Moon by precisely measuring the range from the spacecraft to the lunar surface and, using precision orbit determination of LRO, referencing those ranges to the Moon's center of mass [2]. LOLA has five beams and operates at 28 Hz, with a nominal accuracy of 10 cm. Its primary objective is to produce a global geodetic grid for the Moon to which all other observations can be precisely referenced.

Figures 8 and 9 show an optical albedo map and an optical maturity map (partial surface coverage), respectively. Optical maturity is derived from optical spectra.

Figure 8 - An optical albedo map (top)

Figure 9 - An optical maturity map (partial surface coverage)

In Figure 9, the dark maria are absent, as expected, since composition is not taken into account in the optical maturity map. This separation between composition and optical maturity suggests that some of the bright regions indeed have low optical maturity, as highlighted in Figure 10.

Figure 10 - Potential light regions explained by low optical maturity

During this project, we aimed to predict the albedo from the relationships between the chemical elements composing the surface of the Moon. The main challenge was that the existing datasets are incomplete. We also explored the effect of blurring the images, because the albedo map is derived from very high spatial resolution laser measurements, whereas the element maps were derived from much coarser gamma-ray measurements. Making the albedo maps comparable in resolution to the element maps removed the spatial resolution difference and made the data more comparable for training and prediction. This improved our results and helped minimize potential artifacts arising from resolution effects. It also served as the basis for an interactive tool for analyzing the Moon and identifying possible resolution artifacts.

As an additional experiment, we made predictions that also take the surrounding pixels into account. The results were slightly better, but more exploration is needed to find suitable methods for implementing this feature and to show that it benefits the overall results. We also see potential in the Toolkit for Multivariate Data Analysis with ROOT (TMVA) and in its features, which have been applied to high-energy particle physics.
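One way to take the surrounding pixels into account, sketched here under assumptions (the report does not say which neighborhood or padding was used), is to append each pixel's neighbors to its feature vector. The hypothetical helper `neighborhood_features` below collects, for every pixel, the values of all element maps in a (2r+1)×(2r+1) window, using edge padding at the borders:

```python
import numpy as np


def neighborhood_features(element_maps, radius=1):
    """Stack each pixel's values with those of its (2r+1)x(2r+1) neighbors.

    element_maps: array (n_elements, H, W).
    Returns an array (H*W, n_elements*(2r+1)**2) with one row per pixel;
    for each element, window offsets are ordered row-major from (-r, -r).
    """
    n, H, W = element_maps.shape
    padded = np.pad(element_maps, ((0, 0), (radius, radius), (radius, radius)), mode="edge")
    shifted = []
    for di in range(-radius, radius + 1):
        for dj in range(-radius, radius + 1):
            # View of every pixel's neighbor at offset (di, dj).
            shifted.append(padded[:, radius + di:radius + di + H, radius + dj:radius + dj + W])
    feats = np.stack(shifted, axis=1)  # (n, (2r+1)**2, H, W)
    return feats.reshape(n * (2 * radius + 1) ** 2, H * W).T


rng = np.random.default_rng(1)
maps = rng.random((3, 5, 7))
feats = neighborhood_features(maps, radius=1)
```

The resulting matrix can be fed to any pixel-wise regressor in place of the single-pixel features. For maps that are global in longitude, wrapping around at the left and right edges may be more appropriate than edge padding.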


[1] Chin, G., Brylow, S., Foote, M., Garvin, J., Kasper, J., Keller, J., ... & Zuber, M. (2007). Lunar reconnaissance orbiter overview: The instrument suite and mission. Space Science Reviews, 129(4), 391-419.


[2] Smith, D. E., Zuber, M. T., Jackson, G. B., Cavanaugh, J. F., Neumann, G. A., Riris, H., ... & Zagwodzki, T. W. (2010). The lunar orbiter laser altimeter investigation on the lunar reconnaissance orbiter mission. Space Science Reviews, 150(1), 209-241.
