-

-

Save MichelleDalalJian/453c68e7fde2b2996c8b598c988c09d3 to your computer and use it in GitHub Desktop.

| from bs4 import BeautifulSoup | |

| import urllib.request, urllib.parse, urllib.error | |

| import ssl | |

| import re | |

| ctx = ssl.create_default_context() | |

| ctx.check_hostname = False | |

| ctx.verify_mode = ssl.CERT_NONE | |

| url = "http://py4e-data.dr-chuck.net/known_by_Bryce.html" | |

| #to repeat 7 times# | |

| for i in range(7): | |

| html = urllib.request.urlopen(url, context=ctx).read() | |

| soup = BeautifulSoup(html, 'html.parser') | |

| tags = soup('a') | |

| count = 0 | |

| for tag in tags: | |

| count = count +1 | |

| #make it stop at position 3# | |

| if count>18: | |

| break | |

| url = tag.get('href', None) | |

| print(url) |

The instructions for this assignment were poorly written and vague. Thank you for the help in clarifying the assignment a bit.

In case of some people if the above code does not work, try the following

import urllib.request, urllib.parse, urllib.error

from bs4 import BeautifulSoup

url = input('Enter URL - ')

html = urllib.request.urlopen(url).read()

soup = BeautifulSoup(html, 'html.parser')

Find all the tags with class="comments"

span_tags = soup.find_all('span', class_='comments')

count = 0

total_sum = 0

for span in span_tags:

# Extract the text content of the span tag and convert to an integer

count += 1

total_sum += int(span.contents[0])

print("Count", count)

print("Sum", total_sum)

Here's how the code works:

It takes the URL as input from the user.

It retrieves the HTML content from the specified URL using urllib.request.urlopen.

It uses BeautifulSoup to parse the HTML and find all the tags with the class "comments" using soup.find_all.

It initializes two variables count and total_sum to keep track of the number of comments and the sum of the comments, respectively.

It iterates through each tag, converts the text content of the tag to an integer using int(span.contents[0]), and adds it to the total_sum.

Finally, it prints the total count and sum of the comments.

When you run the script, it will prompt you to enter the URL (e.g., http://py4e-data.dr-chuck.net/comments_42.html) and then display the count and sum of the comments in the given URL.

Start at: http://py4e-data.dr-chuck.net/known_by_Kedrick.html

Find the link at position 18 (the first name is 1). Follow that link. Repeat this process 7 times. The answer is the last name that you retrieve.

Hint: The first character of the name of the last page that you will load is: L

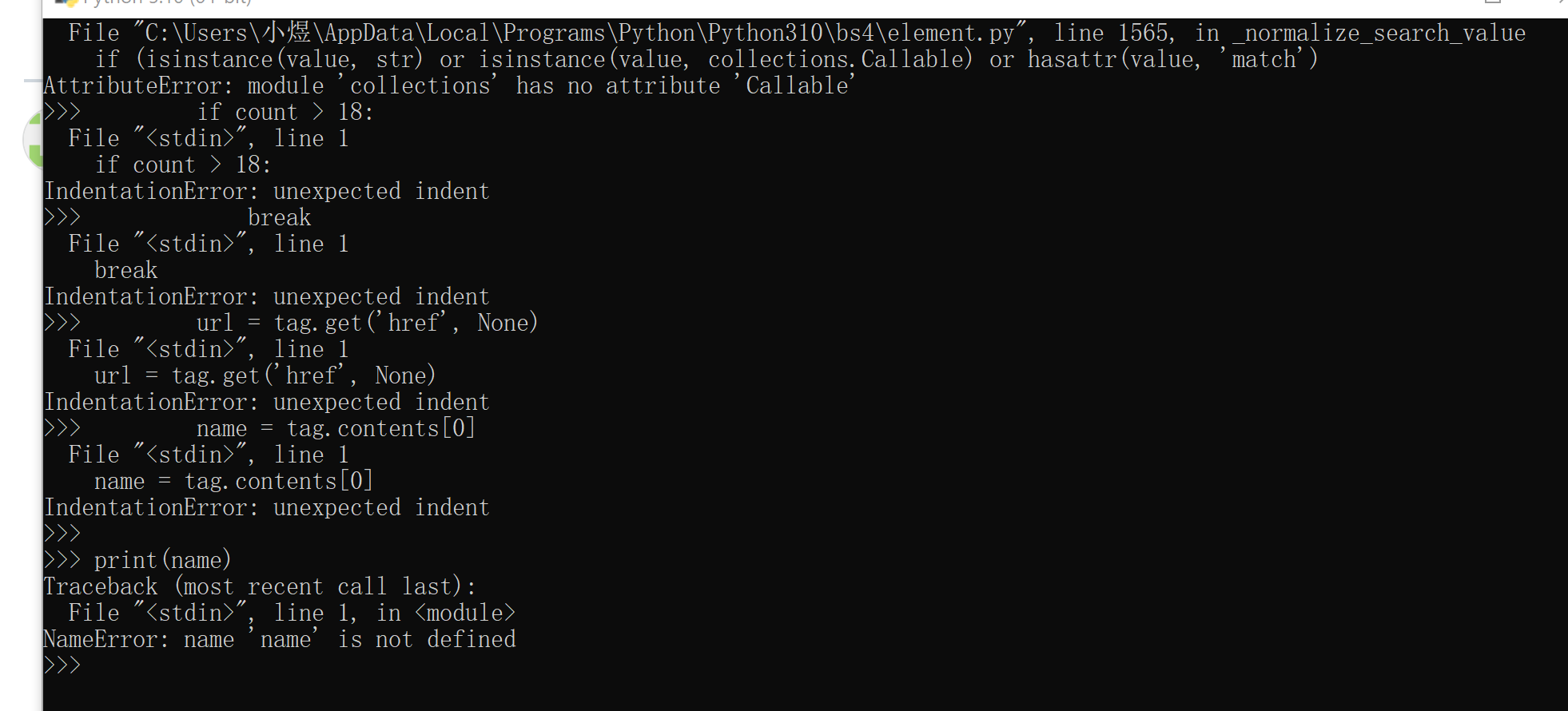

please help me, I don't know what the problem is!!!! here is my code:

import urllib.request, urllib.parse, urllib.error

import ssl

from bs4 import BeautifulSoup

ctx = ssl.create_default_context()

ctx.check_hostname = False

ctx.verify_mode = ssl.CERT_NONE

url = "http://py4e-data.dr-chuck.net/known_by_Kedrick.html"

for i in range(7):

html = urllib.request.urlopen(url, context=ctx).read()

soup = BeautifulSoup(html, 'html.parser')

tags = soup('a')

count = 0

for tag in tags:

count = count +1

print(name)