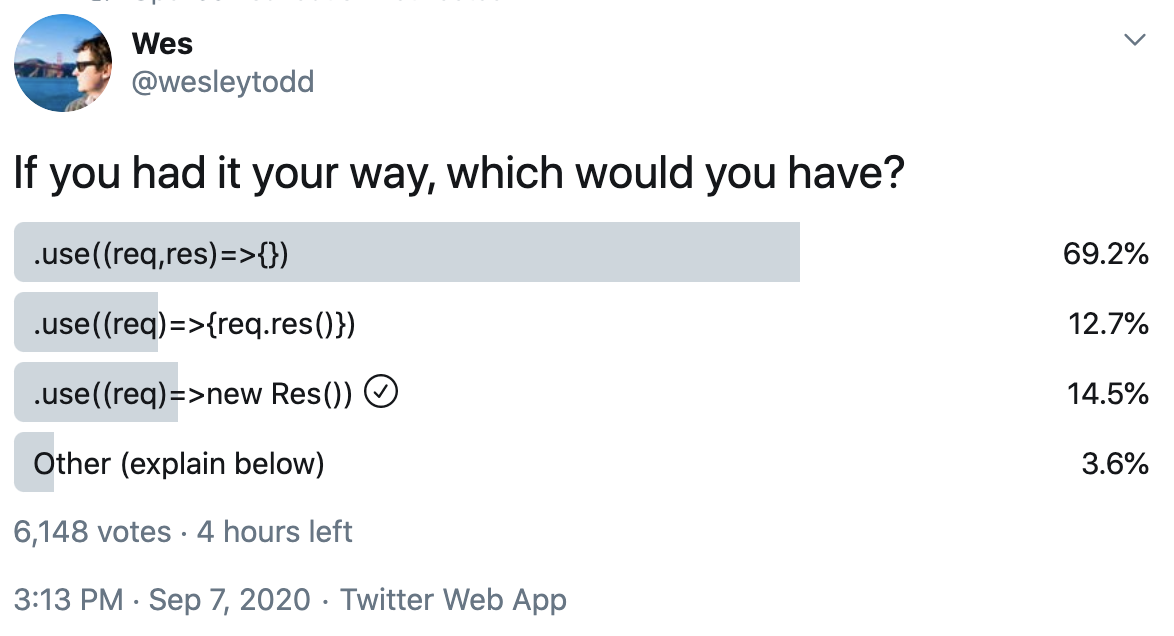

I saw this poll on Twitter earlier and was surprised at the result, which at the time of writing overwhelmingly favours option 1:

I've always been of the opinion that the (req, res, next) => {} API is the worst of all possible worlds, so one of two things is happening:

- I'm an idiot with bad opinions (very possibly!)

- People like familiarity

It's bad for composition in two ways. Firstly, for all but the most trivial handlers, you have to awkwardly pass the res object around:

    app.use((req, res, next) => {
      if (some_condition_is_met(req)) {
        return render_view(req, res);
      }

      next();
    })

Secondly, combining middleware often involves monkey-patching the res object:

    function log(req, res, next) {
      const { writeHead } = res;
      const start = Date.now();
      let details;

      res.writeHead = (status, message, headers) => {
        if (!headers && typeof message !== 'string') {
          headers = message;
          message = '';
        }

        details = { status, headers };
        writeHead.call(res, status, message, headers);
      };

      res.on('finish', () => {
        console.log(`${req.method} ${req.url} (${Date.now() - start}ms): ${details.status} ${JSON.stringify(details.headers)}`);
      });

      next();
    }

    app.use(log).use((req, res, next) => {...});

Monkey-patching objects belonging to the standard library is no more advisable here than it was in MooTools, but it's endemic in the ecosystem around Node servers.

In addition, because the built-in res object makes it difficult to do something as straightforward as responding with some JSON, and because send(res, data) is awkward, Express apps use a subclass of http.ServerResponse that adds a res.send(data) method among other things. I'm really not a fan of this pattern. Libraries shouldn't (but seemingly sometimes do) assume that these extra methods exist, which makes it harder to combine logic from different places.

Passing the res object around is reminiscent of a pattern that used to be extremely prevalent in Node apps:

    function do_something(foo, cb) {
      if (!is_valid(foo)) {
        return cb(new Error('Invalid foo!'));
      }

      do_something_with_validated_foo(foo, cb);
    }

    do_something({...}, (err, result) => {
      if (err) throw err;
      console.log(result);
    });

Nowadays the ergonomics around asynchronicity are much better thanks to async/await, which means we can use a more natural approach: the return keyword.

    function do_something(foo) {
      if (!is_valid(foo)) {
        throw new Error('Invalid foo!');
      }

      return do_something_with_validated_foo(foo);
    }

    console.log(await do_something({...}));

Conceptually, a response to an HTTP request is basically the same thing as a value returned from a function. So why don't we model it as such?

    app.use(req => {
      if (some_condition_is_met(req)) {
        return render_view(req);
      }

      // no returned object, implicit next()
    });

Here, the returned value could be a new Response(...) where Response is some built-in object, or it could be something more straightforward:

    {
      status: 200,
      headers: {
        'Content-Type': 'text/html',
        'Content-Length': 21
      },
      body: '<h1>Hello world!</h1>'
    }

(body could also be a Buffer or a Stream or a Promise, perhaps.)
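To make the idea a little more concrete, here is a minimal sketch of how such an object might be written out to Node's existing res object. The send_response name is hypothetical, and the sketch assumes the body is a string, a Buffer, a readable stream, or a promise for one of those; it is one possible adapter, not a proposal for how Node should do it.

```javascript
const { Readable } = require('stream');

// Hypothetical adapter: writes a { status, headers, body } object
// to an http.ServerResponse (or anything with writeHead/end).
async function send_response(res, response) {
  // body may be a promise, so resolve it before writing anything
  const body = await response.body;

  res.writeHead(response.status || 200, response.headers || {});

  if (body instanceof Readable) {
    // streams are piped; pipe() ends `res` once the stream ends
    body.pipe(res);
  } else {
    // strings and Buffers can be written in one go
    res.end(body);
  }
}
```

Wired into a server, this could look something like http.createServer(async (req, res) => send_response(res, await handler(req))), where handler is whatever function produces the response object.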

This pattern lends itself to composition:

    const respond = (body, headers, status = 200) => ({
      body,
      status,
      // byte length, which differs from body.length for non-ASCII strings
      headers: Object.assign({ 'Content-Length': Buffer.byteLength(body) }, headers)
    });

    const json = obj => respond(JSON.stringify(obj), {
      'Content-Type': 'application/json'
    });

    app.use(() => json({
      answer: 42
    }));

Middlewares can be composed the way Koa does it:

    const stream = require('stream');

    // resolves once a readable body has ended
    const wrap = readable => new Promise((fulfil, reject) => {
      readable.on('end', () => fulfil());
      readable.on('error', reject);
    });

    async function log(req, next) {
      const start = Date.now();
      const res = await next();

      // wait for the body, whether it's a stream, a promise or a plain value
      await (res.body instanceof stream.Readable
        ? wrap(res.body)
        : res.body);

      console.log(`${req.method} ${req.url} (${Date.now() - start}ms): ${res.status} ${JSON.stringify(res.headers)}`);

      return res;
    }

    app.use(log).use((req, next) => ({...}));

It's likely that I've overlooked some crucial constraints. But if we're looking at evolving the built-in Node HTTP APIs, I hope we can take this rare opportunity to do so without being beholden to the way we do things now.
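For what it's worth, the Koa-style chaining described above doesn't need much machinery. The following is a rough sketch, assuming handlers have the (req, next) shape and that returning nothing without calling next() counts as an implicit next(). The compose, powered_by, block_admin and hello names are made up for illustration.

```javascript
// Hypothetical helper: turns a list of (req, next) handlers into one handler.
function compose(handlers) {
  return function handle(req) {
    // dispatch(i) runs handler i, handing it a `next` that runs handler i + 1
    const dispatch = async i => {
      if (i === handlers.length) return undefined; // nothing responded

      let called = false;
      const next = () => {
        called = true;
        return dispatch(i + 1);
      };

      const result = await handlers[i](req, next);

      // no returned object and next() never called: implicit next()
      return result === undefined && !called ? dispatch(i + 1) : result;
    };

    return dispatch(0);
  };
}

// wraps whatever comes back from downstream and adds a header
const powered_by = async (req, next) => {
  const res = await next();
  if (!res) return res;
  return { ...res, headers: { ...res.headers, 'X-Powered-By': 'sketch' } };
};

// only cares about one URL, falls through otherwise
const block_admin = req => {
  if (req.url === '/admin') return { status: 403, headers: {}, body: 'Nope' };
};

const hello = () => ({ status: 200, headers: {}, body: 'hello' });

const handle = compose([powered_by, block_admin, hello]);
```

Calling handle({ method: 'GET', url: '/' }) returns a promise for the hello response with the extra header added on the way out, without any handler touching a shared res object.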

I felt exactly the same, and in working on FAB I've ended up having to build a whole middleware API which... well I'm kinda the only person that's really dug into it so this is very much "Glen's first go at this". It's not really documented anywhere so I'll post a summary here first then come back to make some comparisons at the end.

Note: FABs have one added complexity over the actual middleware API, by the way, which is that a FAB is a compilation of a series of plugins, not a straight NodeJS file. So you kinda don't have a first-class programmatic API into the "middleware" outside of writing a plugin file. So keep that in mind...

The "RequestResponder"

This is basically middleware but I didn't want to invoke the (req, res, next) idea so I called it Responder instead. I'll use the FAB typedefs to explain:

Note: Request, Response, Url are all as defined in the Fetch API. An aside: cross-fetch has the best Typescript types for mocking these objects out in the browser (i.e. best compatibility with the lib dom in .tsconfig)

The most common of the return types is Response | undefined, so you get functions like this:

A couple of things:

- async, even if it does nothing asynchronous at all. I've seen people hyper-optimise the event loop and avoid calling .then() on pre-resolved promise chains but honestly, we're talking about HTTP latencies here, one extra event loop for calling a promise isn't going to be detectably slow. Might be wrong there...

- undefined is how you say "I don't care about this request". That's effectively the same as calling next() but now it can be an early-return. The above example works great if you use if (x) return undefined instead. I'll do that for the remaining examples...

- url is a first-class parameter to the responder. Otherwise 90% of responders would have to start with const url = new URL(request.url)

Returning a Request

This might not be that applicable outside of FABs, but something pretty common is proxying a request somewhere. For implementation reasons (i.e. some hosting restrictions) we can't always proxy the full request through the server, so we came up with this API:
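As a rough illustration of the three return types discussed so far (a Response, undefined, or a Request to proxy), here is a small sketch. It is not FAB's implementation: run_responders, the example responders and api.example.com are all made up, the proxy parameter is only there so the proxying step can be swapped out, and it assumes Node 18+ for the global Request, Response, URL and fetch. It also sticks to absolute URLs, since the standard Request constructor rejects relative ones outside a browser.

```javascript
// Hypothetical runner, not FAB's actual code.
async function run_responders(responders, request, proxy = fetch) {
  const url = new URL(request.url);

  for (const responder of responders) {
    // each responder gets a clone, so it can't mutate the original
    const result = await responder({ request: request.clone(), url });

    if (result === undefined) continue; // "I don't care about this request"
    if (result instanceof Request) return proxy(result); // proxy it elsewhere
    return result; // a plain Response
  }

  return new Response('Not found', { status: 404 });
}

const responders = [
  async ({ url }) => {
    if (url.pathname !== '/hello') return undefined;
    return new Response('Hello world!', { status: 200 });
  },
  async ({ url }) => {
    if (!url.pathname.startsWith('/api/')) return undefined;
    // returning a Request means "go and get this instead"
    return new Request(`https://api.example.com${url.pathname}`);
  }
];
```

The early-return style is what keeps each responder short: anything that isn't its business is a one-line return undefined.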

Turns out, it's super handy! We actually broke compatibility with the spec and allow relative URLs here, so new Request('/over/here.instead') gets understood by whatever's hosting the FAB. It's up to the hosting runtime to either perform a fetch and forward the response or construct whatever the hosting platform needs to do to create a proxy request (looking at you, Lambda@Edge).

If you don't have the ability to return a Request you can still keep the code super clean, it's just a bit less flexible where the code runs:

FabResponderArgs

So this is an object of: { request, url, context, cookies } (plus settings which is FAB-specific). A couple of points:

- request is immutable (in theory). I only pass clones of the original request object in, to avoid middlewares using it as a lazy way of passing values around. Instead, there's two explicit APIs for middlewares to talk to each other, the replaceRequest Directive (which I'll come back to) and...

- context is a {[key: string]: any} object which any middleware can read/write to freely. It's the way middlewares are supposed to store information for future middlewares to use.

- url, as mentioned is a new URL(request.url) which is provided for convenience

- cookies was originally going to be a part of context, but I want the FAB responders to feel higher-level and so having them pre-parsed (and immutable, once parsed) seemed to just make sense. I want to make this lazy in future, though, so if you never check the cookies you never even parse the header

- settings, as mentioned, is about being able to reuse FABs in different environments without recompiling or depending on process.env. It's described here and is probably not relevant to this discussion

Directives

The final piece of the API is the Directive, which at the moment has two options, but is intended to grow as I learn more:

replaceRequest

replaceRequest is the counterpart to making the request immutable. Instead of doing req.url = 'https://somewhere.else/', you can do:

This then passes down the chain as normal, so anything that's operating on a purely HTTP basis can do so without any coupling to the later responders.

interceptResponse

One of the things I loved about Rack's stack-based middleware composition was that a middleware could just as easily transform the response on the way out as the request on the way in. So I built it for FABs:

You don't have to completely replace the response, you can modify it (but you still need to return it), for example setting a header.
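To show how the two directives could fit together, here is a hedged sketch. The directive shapes ({ replaceRequest: Request } and { interceptResponse: fn }), the run_with_directives name, the example responders and the last-registered-runs-first ordering are all assumptions made for illustration, not FAB's real types or behaviour. It assumes Node 18+ for the global Request, Response and URL.

```javascript
// Hypothetical runner; the directive object shapes are assumptions.
async function run_with_directives(responders, request) {
  const interceptors = [];
  let response;

  for (const responder of responders) {
    const url = new URL(request.url);
    const result = await responder({ request: request.clone(), url });

    if (result === undefined) continue;
    if (result instanceof Response) {
      response = result;
      break;
    }

    // directives: swap the request seen by later responders...
    if (result.replaceRequest) request = result.replaceRequest;
    // ...and/or register a function to run on the way out
    if (result.interceptResponse) interceptors.push(result.interceptResponse);
  }

  response = response || new Response('Not found', { status: 404 });

  // stack-style: the last interceptor registered runs first
  for (const intercept of interceptors.reverse()) {
    response = await intercept(response);
  }

  return response;
}

const responders = [
  // rewrite /old to /new without mutating the incoming request
  async ({ url }) => {
    if (url.pathname !== '/old') return undefined;
    return { replaceRequest: new Request(new URL('/new', url).href) };
  },
  // modify (and return) every outgoing response
  async () => ({
    interceptResponse: async response => {
      response.headers.set('X-Sketch', 'yes');
      return response;
    }
  }),
  async ({ url }) => new Response(`You asked for ${url.pathname}`)
];
```

A request for /old ends up answered as if it had asked for /new, with the extra header set on the way out, and none of the responders needs to know about the others.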

Routing

Something that I've removed from the examples here is FAB's routing layer, which is just built on top of path-to-regexp. There are a few examples here but in brief, it looks like this:

We also add a Router.interceptResponse alias as a shorthand when all you want to do is piggyback on every outgoing request, a la @fab/plugin-add-fab-id

So, contrasting how FABs work with your suggestions, there's a couple of points worth making:

For me, I'm trying to provide full functionality with the highest-level API possible, without making things too limited. When combined with Router.on for simple routing, it's extremely concise! But it's really designed with the goals and audience of FABs in mind, not general-purpose server responses (although I think it could be used for that too!).

Also, using the Fetch standard is a blessing and a curse. It makes the server-side code look exactly like some browser code or a serviceworker, which is great for consistency, but it makes it a bit awkward to do things like clone a Request with a changed URL. However, it's a legit standard, so building helper utilities on top might be the best way to get the ergonomics you want, rather than trying to define an arbitrary object.

I explicitly shied away from const res = await next() because I didn't want every plugin to have to worry about calling the next one, and because I wanted to do things like making replaceRequest explicit rather than each plugin having total control on what gets passed down. Very much a personal stylistic choice though.

Very interested to hear what you think! But yeah, when faced with the choice of adopting the (req, res, next) API or inventing my own, I definitely went the other way. I've been super happy with how this is all coming together, hopefully there's something in there of interest!