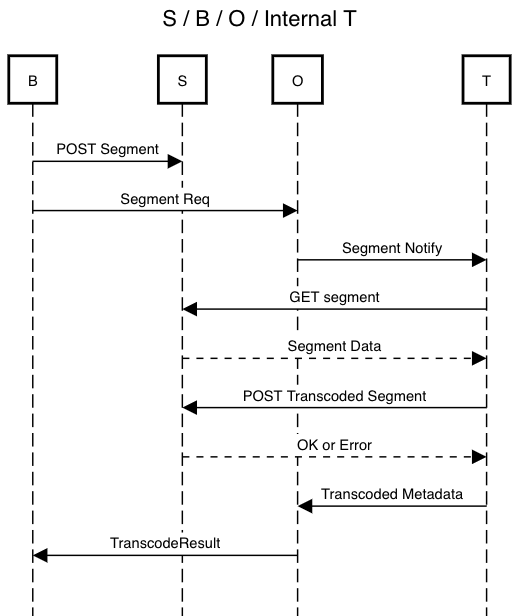

This describes one approach to splitting up the transcoder and orchestrator, and the network protocol involved in the first stage of the split. Please refer to the high-level overview for a more detailed explanation of the reasoning behind these choices. Feedback welcome!

-

Internal Transcoder A trusted transcoder under the orchestrator's control.

-

External Transcoder A untrusted transcoder not under the orchestrator's control.

-

B Broadcaster

-

O Orchestrator

-

T Transcoder, either internal or external

-

S Object store. May be on the orchestrator itself.

Thanks Philipp for the BOTS acronym!

The stages are designed to work around the approximate timelines for Livepeer's other technology efforts, eg non-deterministic verification and masternodes.

- Core Networking

- Implement the O/T split using internal Ts and the current x264 encoder.

- Use sequential fallbacks for simplicity and cost savings.

- Parallelize transcoding of segment profiles

- O content bypass via S

- Trusted Races

- Parallel redundancy via trusted races with internal T and x264

- Non-deterministic verification and GPU encoding support

- Untrusted Races

- Discovery, reputation, scheduling, payments, etc to fully support external T

The remainder of this document focuses on outlining the network spec for use with internal T. Certain fields may be added to these messages in order to support races and external T, but the basic flow for handling segments should remain the same.

Note that most of these features may be further broken down and done in their own time. For example, the initial networking can be built and released, while leaving for later the parallelism or S-enabled content bypass.

Note that the S here may be on O itself, although this has tradeoffs of its own. See the sections on object store for details.

T initiates a connection to an O using a common shared secret. This connection should be persistent in order to receive notifications, and acts as a form of liveness check.

message RegisterTranscoder {

string secret = 1; // common shared secret

}message TranscoderRegistered {

}Sent by the orchestrator to the transcoder to notify of a new segment.

// Sent by the orchestrator to the transcoder to notify of a new segment

message NotifySegment {

SegData segData = 1; // seq/hash/sig, reused message type

TranscodedSegmentData segment = 2; // URL of segment, reused message type

repeated Profiles profiles = 3; // profile(s) to transcode

}T fetches the segment from S. S itself may be on O.

We might want to authenticate here.

T uploads the result directly to O.

Body should contain the segment data. Headers should have:

- Hash of the body

- Auth token, initially consisting of

HMAC(bodyHash, secret)

We may eventually want to replace the secret with a separate token that is returned with the TranscoderRegistered message.

Generally we want to minimize how much data we move over the network The use (or lack of) S affects the optimal flow.

For fully internal T, we might want both B/T to use S. Then orchestrator has no need to actually touch the content. O can bypass the content, and work only with metadata.

While races are slated for later, it is worth considering how races and object store interact.

With races, a broadcaster supplied S may incur additional costs for the broadcaster, depending on the orchestrator's internal topology. For example, transcoding a source into 3 versions, with a parallel redundancy factor of 3, will incur 9 downloads for that one segment. This may be surprising to a broadcaster that pays by the request and by the byte.

There is the question of where to send additional transcodes that are made for redundancy purposes. These segments probably should not go into the broadcaster's S, at least by default. The most future-proof way to address this seems to be to have T directly upload to the orchestrator. When transcoders become untrusted, the orchestrator needs access to the content in order to verify the work.

Having B/T upload to a orchestrator-supplied S may be optimal until there is full support for external transcoders. We may add this as an optional behavior after object store is fully integrated. The network protocol may change slightly; eg the results go straight into S while an additional gRPC notification message needs to be transmitted to the orchestrator containing the needed metadata (URL, hash, auth token).

There were a couple of references in the doc that (rather carelessly) referred to

Segment Resp; I've changed those toTranscodeResultfor clarity to match the actual type used in the current networking protocol.https://github.com/livepeer/go-livepeer/blob/135909c18703d406ae3a0ce35a58aa0152ed00f2/net/lp_rpc.proto#L88-L97

Actually, in current protocol between O -> B, the

TranscodeResultalways has URLs. So we're good in that respect.Yes, notification can occur in the response to the

POST Resultsthat T submits to O. That response was omitted in the diagram; it's been updated.Will object store be ready?

A case that just occurred to me where a direct upload might be better: one of the benefits of the current networking protocol is that it allows, on paper, for Os (as indicated by the ServiceURI) to redirect B to geographically proximate instances. (Let's call these PoPs, points of presence.) With nearby PoPs, and nearby Ts, it might make more sense for B to do a direct upload rather than use a S3 bucket that may be in a different region. But that's probably a ways off.

Yes, we can add that. I probably need to spec out this message as well.

Yep,

TranscodeResultalready has an error field. However, if an O refuses to do service, then that rejection should occur in the job negotiation/setup phase (GetTranscoder/TranscoderInfounder the current protocol, which was omitted here). If B tries to send video to any random O, it'd fail anyway because B wouldn't have the credentials that it acquires during job setup.