Last active: November 6, 2022 15:57

Gist: mikesparr/d991c4827d4f0438d9107b1050eebaa4

Example network load balancer and internal TCP load balancer on Google Cloud Platform fronting an instance group


```bash
#!/usr/bin/env bash

#####################################################################
# REFERENCES
# - https://cloud.google.com/compute/docs/instance-templates/create-instance-templates
# - https://cloud.google.com/compute/docs/metadata/setting-custom-metadata
# - https://cloud.google.com/compute/docs/instances/startup-scripts/linux#gcloud_2
# - https://cloud.google.com/compute/docs/instances/startup-scripts/linux#accessing-metadata
# - https://cloud.google.com/compute/docs/instance-groups/distributing-instances-with-regional-instance-groups
# - https://cloud.google.com/load-balancing/docs/health-checks#optional-flags-hc-protocol-ssl-tcp
# - https://cloud.google.com/compute/docs/autoscaler/scaling-cloud-monitoring-metrics#examples_for_autoscaling_based_on_metrics
# - https://cloud.google.com/compute/docs/ip-addresses/reserve-static-internal-ip-address
# - https://cloud.google.com/load-balancing/docs/internal/setting-up-internal
# - https://cloud.google.com/load-balancing/docs/network/setting-up-network-backend-service
#####################################################################

export PROJECT_ID=$(gcloud config get-value project)
export PROJECT_USER=$(gcloud config get-value core/account) # set current user
export PROJECT_NUMBER=$(gcloud projects describe $PROJECT_ID --format="value(projectNumber)")
export IDNS=${PROJECT_ID}.svc.id.goog # workload identity domain
export GCP_REGION="us-east1" # CHANGEME (OPT)
export GCP_ZONE="us-east1-b" # CHANGEME (OPT)
export NETWORK_NAME="default" # CHANGEME (OPT or Override; reassigned to lb-network in NETWORKING)
export DOMAIN="example.com" # CHANGEME (OPT)

# enable apis
gcloud services enable compute.googleapis.com \
    storage.googleapis.com

# configure gcloud sdk
gcloud config set compute/region $GCP_REGION
gcloud config set compute/zone $GCP_ZONE
```
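When no default project is configured, `gcloud config get-value project` typically prints nothing to stdout, so every later command would run with an empty `$PROJECT_ID`. A minimal guard, assuming you want the script to stop early; `require_vars` is a hypothetical helper, not part of the original script:

```bash
#!/usr/bin/env bash
# Hypothetical helper (not in the original gist): fail fast if any named
# environment variable is unset or empty.
require_vars() {
  local name missing=0
  for name in "$@"; do
    # ${!name} is bash indirect expansion: the value of the variable named by $name
    if [[ -z "${!name:-}" ]]; then
      echo "ERROR: $name is not set" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage, right after the exports above:
#   require_vars PROJECT_ID PROJECT_NUMBER GCP_REGION GCP_ZONE || exit 1
```

The helper reports every missing variable before failing rather than stopping at the first one.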

```bash
###########################################################
# STORAGE BUCKET
###########################################################

export BUCKET_NAME="example-bucket-with-scripts-1" # CHANGEME (bucket names are globally unique)

# create storage bucket (using new gcloud storage command instead of gsutil)
gcloud storage buckets create gs://$BUCKET_NAME/ \
    --default-storage-class=standard \
    --location=$GCP_REGION \
    --uniform-bucket-level-access
```
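Cloud Storage bucket names share one global namespace, so a fixed name like `example-bucket-with-scripts-1` may already be taken. A rough pre-check, assuming a name without dots; `valid_bucket_name` is hypothetical and only covers the common naming rules, not every restriction:

```bash
#!/usr/bin/env bash
# Hypothetical helper: rough check of a bucket name against the Cloud Storage
# naming rules for names WITHOUT dots (3-63 chars; lowercase letters, digits,
# dashes, underscores; must start and end with a letter or digit).
# Dotted (domain-style) names and the "goog"/"google" restrictions are not checked.
valid_bucket_name() {
  local name="$1"
  [[ "$name" =~ ^[a-z0-9][a-z0-9_-]{1,61}[a-z0-9]$ ]]
}

# One way to make a collision less likely is to derive the name from the project ID:
#   export BUCKET_NAME="${PROJECT_ID}-lb-scripts"
#   valid_bucket_name "$BUCKET_NAME" || { echo "bad bucket name" >&2; exit 1; }
```

A valid name can still fail at creation time if someone else owns it; the check only catches malformed names.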

```bash
##########################################################
# STARTUP SCRIPT
##########################################################

export STARTUP_SCRIPT_FILE_NAME="startup.sh"
export METADATA_KEY="ipaddr"

# install web server, customize homepage with metadata server value
# NOTE: the outer heredoc (EOF) is unquoted, so $METADATA_KEY is expanded now,
# while \$ escapes are deferred until the VM runs the script. The inner heredoc
# uses a different delimiter (HTML) so it doesn't terminate the outer one early.
cat > $STARTUP_SCRIPT_FILE_NAME << EOF
#! /bin/bash
METADATA_VALUE="\$(curl -s -H "Metadata-Flavor: Google" \\
    http://metadata.google.internal/computeMetadata/v1/instance/attributes/$METADATA_KEY)"
VM_HOSTNAME="\$(curl -s -H "Metadata-Flavor: Google" \\
    http://metadata.google.internal/computeMetadata/v1/instance/name)"
apt update
apt -y install apache2
cat > /var/www/html/index.html << HTML
<html><head><title>HOST: \$VM_HOSTNAME - IP: \$METADATA_VALUE</title></head>
<body><h1>\$VM_HOSTNAME</h1><p>The load balancer IP address from metadata is: \$METADATA_VALUE</p></body></html>
HTML
EOF

# upload file to storage bucket
gcloud storage cp $STARTUP_SCRIPT_FILE_NAME gs://$BUCKET_NAME/$STARTUP_SCRIPT_FILE_NAME
```
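Without `-f`, curl prints the metadata server's error body and still exits 0 when an attribute is missing, so that error text would end up in `index.html`. A sketch of a more defensive lookup for the startup script, assuming a default value is acceptable when the key isn't set; `get_metadata` is a hypothetical name:

```bash
#!/usr/bin/env bash
# Hypothetical helper for the startup script: read a metadata path, falling back
# to a default when the request fails (e.g. HTTP 404 for a missing attribute).
get_metadata() {
  local path="$1" default="${2:-}" value
  # -f: exit non-zero on HTTP errors instead of printing the error page
  # -s: no progress meter
  if value="$(curl -fs -H "Metadata-Flavor: Google" \
      "http://metadata.google.internal/computeMetadata/v1/${path}")"; then
    printf '%s\n' "$value"
  else
    printf '%s\n' "$default"
  fi
}

# Usage inside startup.sh:
#   METADATA_VALUE="$(get_metadata instance/attributes/ipaddr unknown)"
#   VM_HOSTNAME="$(get_metadata instance/name "$(hostname)")"
```

`value` is declared `local` on its own so the `if` sees curl's exit status; `local value="$(...)"` would mask it.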

```bash
###########################################################
# NETWORKING
###########################################################

export EXT_IP_NAME="public-lb-ip"
export NETWORK_NAME="lb-network"
export SUBNET_NAME="lb-subnet"
export SUBNET_RANGE="10.1.2.0/24"
export INT_IP_NAME="internal-lb-ip"
export INT_IP="10.1.2.99" # arbitrary unused IP in range
export CLOUD_ROUTER_NAME="router-1"
export CLOUD_ROUTER_ASN="64523"
export NAT_GW_NAME="nat-gateway-1"

# create network
gcloud compute networks create $NETWORK_NAME \
    --subnet-mode=custom

# create subnet
gcloud compute networks subnets create $SUBNET_NAME \
    --network=$NETWORK_NAME \
    --range=$SUBNET_RANGE \
    --region=$GCP_REGION

# create static external IP for network load balancer
gcloud compute addresses create $EXT_IP_NAME \
    --region $GCP_REGION

# TODO: point DNS A record to new IP
sleep 10 # wait for it to provision
export EXT_IP=$(gcloud compute addresses describe $EXT_IP_NAME --region $GCP_REGION --format="value(address)")
echo "Add DNS A record to $DOMAIN with IP: $EXT_IP"

# create static internal IP for the internal load balancer
gcloud compute addresses create $INT_IP_NAME \
    --region $GCP_REGION --subnet $SUBNET_NAME \
    --addresses $INT_IP
```
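`INT_IP` is picked by hand, so a typo can place it outside `SUBNET_RANGE`. A small IPv4-only check you might run before reserving it; `ip_to_int` and `ip_in_cidr` are hypothetical helpers:

```bash
#!/usr/bin/env bash
# Hypothetical helpers: check that an IPv4 address falls inside a CIDR range,
# e.g. that INT_IP is inside SUBNET_RANGE before reserving it. IPv4 only.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

ip_in_cidr() {
  local ip="$1" cidr="$2"
  local net="${cidr%/*}" bits="${cidr#*/}"
  # netmask with the top $bits bits set (bits=0 means "match everything")
  local mask=$(( bits == 0 ? 0 : (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}

# Usage before "gcloud compute addresses create $INT_IP_NAME ...":
#   ip_in_cidr "$INT_IP" "$SUBNET_RANGE" || { echo "INT_IP not in subnet" >&2; exit 1; }
```

This does not check that the address avoids the four addresses Google Cloud reserves in every subnet range (network, default gateway, second-to-last and broadcast), nor that it is unused.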

```bash
# create cloud router and nat gateway
gcloud compute routers create $CLOUD_ROUTER_NAME \
    --network $NETWORK_NAME \
    --asn $CLOUD_ROUTER_ASN \
    --region $GCP_REGION

gcloud compute routers nats create $NAT_GW_NAME \
    --router=$CLOUD_ROUTER_NAME \
    --region=$GCP_REGION \
    --auto-allocate-nat-external-ips \
    --nat-all-subnet-ip-ranges \
    --enable-logging

# fwr - allow public traffic to network lb fronted instances
gcloud compute firewall-rules create allow-network-lb-ipv4 \
    --network=$NETWORK_NAME \
    --target-tags=lb-tag \
    --allow=tcp:80 \
    --source-ranges=0.0.0.0/0

# fwr - allow internal network traffic
gcloud compute firewall-rules create fw-allow-lb-access \
    --network=$NETWORK_NAME \
    --action=allow \
    --direction=ingress \
    --source-ranges=$SUBNET_RANGE \
    --rules=tcp,udp,icmp

# fwr - allow SSH
gcloud compute firewall-rules create fw-allow-ssh \
    --network=$NETWORK_NAME \
    --action=allow \
    --direction=ingress \
    --target-tags=allow-ssh \
    --rules=tcp:22

# fwr - allow health checks (they are only TCP)
gcloud compute firewall-rules create fw-allow-health-check \
    --network=$NETWORK_NAME \
    --action=allow \
    --direction=ingress \
    --target-tags=allow-health-check \
    --source-ranges=130.211.0.0/22,35.191.0.0/16 \
    --rules=tcp

# fwr - allow network lb health checks (35.191.0.0/16, 209.85.152.0/22 and
# 209.85.204.0/22 are the documented probe ranges for external passthrough
# network lbs; internal lb probes use 130.211.0.0/22 and 35.191.0.0/16, which
# fw-allow-health-check above already allows)
gcloud compute firewall-rules create fw-allow-network-lb-health-check \
    --network=$NETWORK_NAME \
    --action=allow \
    --direction=ingress \
    --target-tags=allow-ilb-health-check \
    --source-ranges=35.191.0.0/16,209.85.152.0/22,209.85.204.0/22 \
    --rules=tcp
```
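The two health check rules above differ only in their source ranges, and the right ranges depend on the load balancer type. Per the Google Cloud health check documentation, internal passthrough load balancer probes come from 35.191.0.0/16 and 130.211.0.0/22, while backend service-based external passthrough (network) load balancer probes come from 35.191.0.0/16, 209.85.152.0/22 and 209.85.204.0/22. A small lookup keeps that mapping in one place; `probe_ranges`, its type names and the example rule name are hypothetical, and the ranges should be re-checked against current docs:

```bash
#!/usr/bin/env bash
# Hypothetical lookup of Google Cloud health check probe source ranges by
# load balancer type (as documented at the time of writing; verify before use).
probe_ranges() {
  case "$1" in
    external-passthrough) echo "35.191.0.0/16,209.85.152.0/22,209.85.204.0/22" ;;
    internal-passthrough) echo "35.191.0.0/16,130.211.0.0/22" ;;
    *) echo "unknown load balancer type: $1" >&2; return 1 ;;
  esac
}

# Usage (rule name is an example only):
#   gcloud compute firewall-rules create fw-allow-ilb-probes \
#       --network=$NETWORK_NAME --action=allow --direction=ingress \
#       --target-tags=allow-ilb-health-check \
#       --source-ranges="$(probe_ranges internal-passthrough)" --rules=tcp:80
```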

```bash
##########################################################
# COMPUTE INSTANCE GROUP 1 (PUBLIC)
##########################################################

export TEMPLATE_NAME_1="sbc-template" # session border controller
export IG_NAME_1="sbc"

# configure instance template with external IP using startup script
gcloud compute instance-templates create $TEMPLATE_NAME_1 \
    --machine-type=e2-standard-4 \
    --image-family=debian-10 \
    --image-project=debian-cloud \
    --boot-disk-size=250GB \
    --region=$GCP_REGION \
    --subnet=$SUBNET_NAME \
    --metadata=startup-script-url=gs://$BUCKET_NAME/$STARTUP_SCRIPT_FILE_NAME,$METADATA_KEY=$EXT_IP \
    --labels=app=sbc,role=frontend \
    --tags=sbc,lb-tag,allow-health-check,allow-ssh

# create managed instance group
gcloud compute instance-groups managed create $IG_NAME_1 \
    --base-instance-name=$IG_NAME_1 \
    --template=$TEMPLATE_NAME_1 \
    --size=1 \
    --region $GCP_REGION

# configure autoscaler (can increase --min-num-replicas to 2 for testing)
gcloud beta compute instance-groups managed set-autoscaling $IG_NAME_1 \
    --region $GCP_REGION \
    --cool-down-period "60" \
    --max-num-replicas "5" \
    --min-num-replicas "1" \
    --target-cpu-utilization "0.75" \
    --mode "on"
```
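For a rough feel of what `--target-cpu-utilization "0.75"` means: the autoscaler tries to keep average CPU near the target, so N VMs at average utilization U need roughly ceil(N * U / target) VMs, clamped to the min/max replica counts. The sketch below does that back-of-the-envelope arithmetic in integer percent. It is an approximation that ignores the cool-down period and the autoscaler's stabilization logic, not the autoscaler's actual algorithm; `estimate_replicas` is hypothetical:

```bash
#!/usr/bin/env bash
# Hypothetical estimate (not the autoscaler's real algorithm):
# replicas ~= ceil(current * avg_util / target), clamped to [min, max].
# Utilizations are integer percentages, e.g. 90 for 0.90.
estimate_replicas() {
  local current="$1" util_pct="$2" target_pct="$3" min="$4" max="$5"
  # integer ceiling division: ceil(a / b) == (a + b - 1) / b for positive ints
  local n=$(( (current * util_pct + target_pct - 1) / target_pct ))
  (( n < min )) && n=$min
  (( n > max )) && n=$max
  echo "$n"
}

# e.g. 3 sbc VMs averaging 90% CPU with target 75%, min 1, max 5:
#   estimate_replicas 3 90 75 1 5   # ceil(270 / 75) = ceil(3.6) = 4
```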

```bash
##########################################################
# COMPUTE INSTANCE GROUP 2 (PRIVATE)
##########################################################

export TEMPLATE_NAME_2="media-template" # media server
export IG_NAME_2="media"

# configure instance template with only internal IP using startup script
gcloud compute instance-templates create $TEMPLATE_NAME_2 \
    --machine-type=e2-standard-4 \
    --image-family=debian-10 \
    --image-project=debian-cloud \
    --boot-disk-size=250GB \
    --region=$GCP_REGION \
    --subnet=$SUBNET_NAME \
    --metadata=startup-script-url=gs://$BUCKET_NAME/$STARTUP_SCRIPT_FILE_NAME,$METADATA_KEY=$INT_IP \
    --no-address \
    --labels=app=media,role=backend \
    --tags=media,allow-health-check,allow-ilb-health-check

# create managed instance group
gcloud compute instance-groups managed create $IG_NAME_2 \
    --base-instance-name=$IG_NAME_2 \
    --template=$TEMPLATE_NAME_2 \
    --size=1 \
    --region $GCP_REGION

# NOTE: see References links at top for more autoscaling options
gcloud beta compute instance-groups managed set-autoscaling $IG_NAME_2 \
    --region $GCP_REGION \
    --cool-down-period "60" \
    --max-num-replicas "5" \
    --min-num-replicas "1" \
    --target-cpu-utilization "0.60" \
    --mode "on"
```
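The media template uses `--no-address`, so those VMs reach the internet (for `apt`) only through the Cloud NAT gateway. One way to confirm which instances have an external IP is to list them by the `app` label set in the templates; `list_external_ips` is a hypothetical wrapper and the format projection is a sketch:

```bash
#!/usr/bin/env bash
# Hypothetical wrapper: list instances carrying a given app label with their zone
# and external (NAT) IP; the EXTERNAL_IP column is empty for --no-address VMs.
list_external_ips() {
  local app="$1"
  gcloud compute instances list \
    --filter="labels.app=${app}" \
    --format="table(name,zone.basename(),networkInterfaces[0].accessConfigs[0].natIP:label=EXTERNAL_IP)"
}

# Usage:
#   list_external_ips sbc     # expect an external IP per instance
#   list_external_ips media   # expect an empty EXTERNAL_IP column
```

Because both groups are regional, the zone column also shows instances spreading across zones as they scale out.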

```bash
##########################################################
# Load Balancer (Network - TCP/UDP)
##########################################################

export ELB_HEALTH_CHECK_NAME="elb-hc-tcp"
export ELB_BACKEND_SVC_NAME="elb-backend"
export ELB_FORWARDING_RULE_NAME="elb-fr"

# create health check
gcloud compute health-checks create tcp $ELB_HEALTH_CHECK_NAME \
    --region $GCP_REGION \
    --port 80

# create backend service
gcloud compute backend-services create $ELB_BACKEND_SVC_NAME \
    --protocol TCP \
    --health-checks $ELB_HEALTH_CHECK_NAME \
    --health-checks-region $GCP_REGION \
    --region $GCP_REGION \
    --session-affinity CLIENT_IP

# add instance group to backend
gcloud compute backend-services add-backend $ELB_BACKEND_SVC_NAME \
    --instance-group $IG_NAME_1 \
    --instance-group-region $GCP_REGION \
    --region $GCP_REGION

# create forwarding rule
gcloud compute forwarding-rules create $ELB_FORWARDING_RULE_NAME \
    --load-balancing-scheme EXTERNAL \
    --region $GCP_REGION \
    --ports 80 \
    --address $EXT_IP_NAME \
    --backend-service $ELB_BACKEND_SVC_NAME
```
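Before testing through the forwarding rule, it can help to confirm the backends pass their health checks. A sketch wrapping `gcloud compute backend-services get-health`, assuming its default YAML-style output with one `healthState:` line per backend instance; `count_healthy` is hypothetical:

```bash
#!/usr/bin/env bash
# Hypothetical helper: count backends that a regional backend service reports
# as HEALTHY. Assumes get-health's default YAML-style output, which contains
# one "healthState: HEALTHY|UNHEALTHY" line per backend instance.
count_healthy() {
  local backend_service="$1" region="$2"
  gcloud compute backend-services get-health "$backend_service" \
    --region "$region" | grep -c 'healthState: HEALTHY'
}

# Usage:
#   count_healthy "$ELB_BACKEND_SVC_NAME" "$GCP_REGION"
#   count_healthy "$ILB_BACKEND_SVC_NAME" "$GCP_REGION"
```

The pattern does not match `healthState: UNHEALTHY`, since the character after `healthState: ` differs.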

```bash
##########################################################
# Load Balancer (Internal - TCP/UDP) points to IG 2
##########################################################

export ILB_HEALTH_CHECK_NAME="ilb-hc-http"
export ILB_BACKEND_SVC_NAME="ilb-backend"
export ILB_FORWARDING_RULE_NAME="ilb-fr"

# create health check
gcloud compute health-checks create http $ILB_HEALTH_CHECK_NAME \
    --region=$GCP_REGION \
    --port=80

# create backend service
gcloud compute backend-services create $ILB_BACKEND_SVC_NAME \
    --load-balancing-scheme=internal \
    --protocol=tcp \
    --region=$GCP_REGION \
    --health-checks=$ILB_HEALTH_CHECK_NAME \
    --health-checks-region=$GCP_REGION \
    --session-affinity CLIENT_IP

# add instance group to backend (can point to either IG as needed)
gcloud compute backend-services add-backend $ILB_BACKEND_SVC_NAME \
    --instance-group=$IG_NAME_2 \
    --instance-group-region $GCP_REGION \
    --region=$GCP_REGION

# create forwarding rule (with internal static IP)
gcloud compute forwarding-rules create $ILB_FORWARDING_RULE_NAME \
    --region=$GCP_REGION \
    --load-balancing-scheme=internal \
    --network=$NETWORK_NAME \
    --subnet=$SUBNET_NAME \
    --address=$INT_IP \
    --ip-protocol=TCP \
    --ports=80,8008,8080,8088 \
    --backend-service=$ILB_BACKEND_SVC_NAME \
    --backend-service-region=$GCP_REGION
```
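The internal forwarding rule is only reachable from inside the VPC, and the media VMs have neither an external IP nor the `allow-ssh` tag. Because the sbc VMs sit in the same subnet and carry `allow-ssh`, one way to exercise the internal load balancer is to SSH to an sbc instance and curl `$INT_IP` from there. A sketch; `probe_ilb_from` is hypothetical, and the instance name and zone are placeholders you would look up with `gcloud compute instances list`:

```bash
#!/usr/bin/env bash
# Hypothetical helper: from a VM inside the VPC, request the internal LB address
# on one of its forwarded ports. In this example only port 80 has a listener
# (apache); 8008, 8080 and 8088 are forwarded but nothing serves them.
probe_ilb_from() {
  local instance="$1" zone="$2" ip="$3" port="${4:-80}"
  gcloud compute ssh "$instance" --zone "$zone" \
    --command "curl -s http://${ip}:${port}/"
}

# Usage (instance name and zone are placeholders):
#   probe_ilb_from sbc-abcd us-east1-b "$INT_IP"
```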

Results

Everything spins up: health checks work, SSH to the public instances works (SSH to the internal instances isn't allowed, but IAP could be used for those), and load balancing and (optional) session affinity work.
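Since each instance writes its own hostname into the page's `<h1>`, one way to check load balancing and `CLIENT_IP` session affinity from a single client is to request the page repeatedly and count distinct hostnames. With affinity, requests from one client IP should normally land on the same instance while the backend set is stable and healthy. `distinct_backends` is a hypothetical helper:

```bash
#!/usr/bin/env bash
# Hypothetical helper: request the page N times and print how many distinct
# <h1> hostnames came back (1 suggests affinity pinned this client to one VM).
distinct_backends() {
  local url="$1" n="${2:-20}" i
  for (( i = 0; i < n; i++ )); do
    # extract the text between <h1> and </h1>
    curl -s "$url" | sed -n 's:.*<h1>\(.*\)</h1>.*:\1:p'
  done | sort -u | wc -l | tr -d ' '
}

# Usage:
#   distinct_backends "http://$EXT_IP/" 20
```

To see requests spread across instances instead, you would need several client IPs or a backend service without session affinity.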

Next steps

- harden compute instance and create custom image
- customize startup script and metadata to suit needs
  - optionally use custom image for instance templates
- use custom service accounts (vs default) for instance templates
- add IAM roles as needed and test
- enable OS Login via metadata or at project level
- optionally add IAP tunneling access to internal instances
  - IAM roles and firewall rules
- optionally add Cloud DNS and internal DNS references
- if minimum latency is required, you may run zonal MIGs with primary/failover to another zone
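For the IAP and OS Login items in the list, a sketch under these assumptions: IAP TCP forwarding connects from 35.235.240.0/20, so a rule allowing tcp:22 from that range to a tag lets `gcloud compute ssh --tunnel-through-iap` reach VMs without external IPs, and OS Login is enabled project-wide with the `enable-oslogin=TRUE` metadata key. The helper names, the rule name and the reuse of the `media` tag are hypothetical, and the caller still needs matching IAM roles (for example IAP-secured Tunnel User and Compute OS Login):

```bash
#!/usr/bin/env bash
# Hypothetical helpers for the next-steps list; names are examples only.

# allow SSH from Google's IAP TCP forwarding range to instances with a given tag
allow_iap_ssh() {
  local network="$1" tag="$2"
  gcloud compute firewall-rules create fw-allow-iap-ssh \
    --network="$network" \
    --action=allow \
    --direction=ingress \
    --source-ranges=35.235.240.0/20 \
    --target-tags="$tag" \
    --rules=tcp:22
}

# enable OS Login for every VM in the current project via project metadata
enable_os_login() {
  gcloud compute project-info add-metadata --metadata enable-oslogin=TRUE
}

# SSH to a private VM (no external IP) through an IAP tunnel
ssh_via_iap() {
  local instance="$1" zone="$2"
  gcloud compute ssh "$instance" --zone "$zone" --tunnel-through-iap
}

# Usage:
#   allow_iap_ssh "$NETWORK_NAME" media
#   enable_os_login
#   ssh_via_iap media-abcd us-east1-b
```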

Screenshots

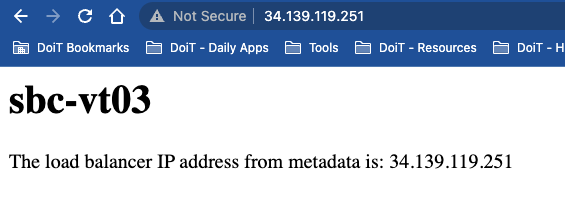

- Working web page served up by instance, displaying values from

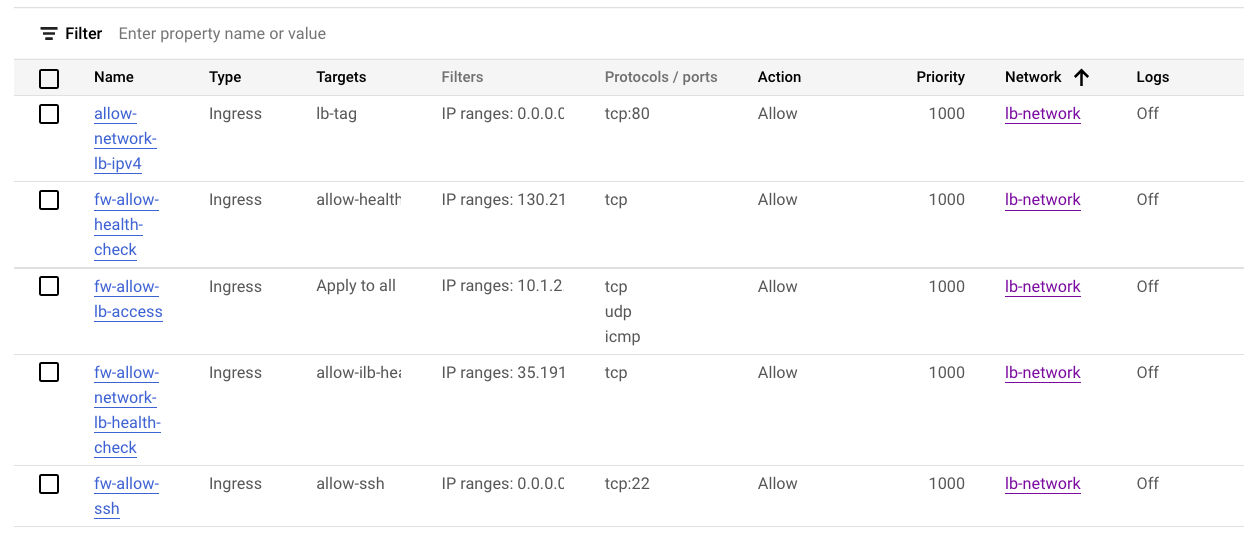

metadata serverconfigured during startup - Firewall Rules

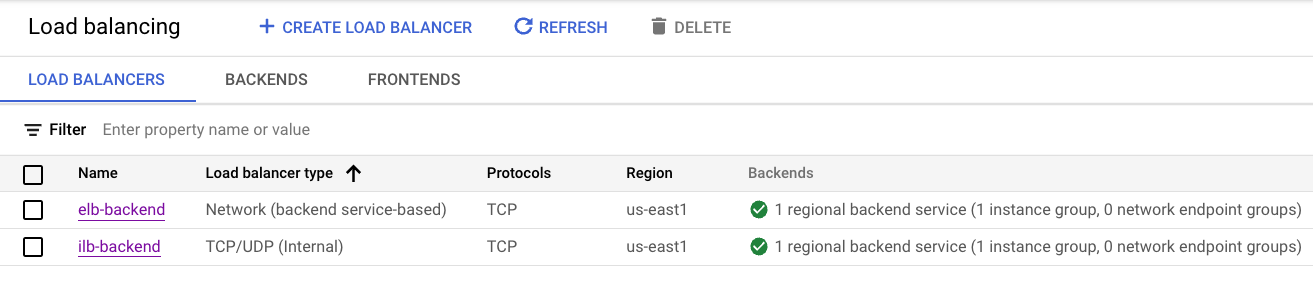

- Load Balancers

- Instance Templates

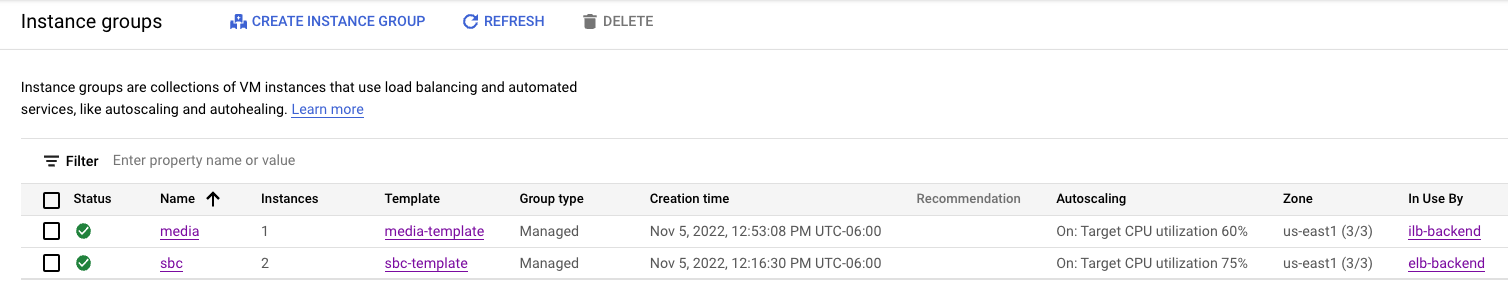

- Instance Groups

- VM Instances (note sbc with external IP; media without; also instances scale out to different zones)

- Instance Groups

- Instance Templates


Overview

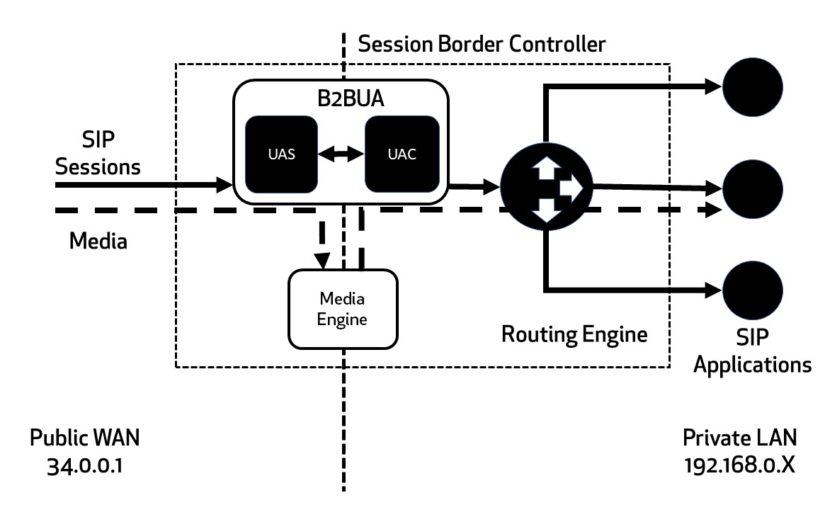

This experiment illustrates how you might set up pass-through load balancing for telco software: an external network load balancer with a static external IP in front of a session border controller (SBC) front end, and an internal pass-through load balancer in front of a private instance group.

NOTE: this is not a working SIP server example.

This experiment simply illustrates how to leverage Google Cloud Platform services for external and internal TCP (Network) load balancing between instance groups. Taking these "ingredients" should allow someone to bake their own hardened VM images, reference them in instance templates, and scale up a managed instance group (MIG).
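A sketch of the "bake your own image" step, assuming a hardened VM's boot disk is the source (stop the VM first, or pass `--force`, for a consistent image). `bake_image` and the image, disk and family names are hypothetical; an instance template would then reference the family with `--image-family` and `--image-project=$PROJECT_ID` instead of `debian-10`/`debian-cloud`:

```bash
#!/usr/bin/env bash
# Hypothetical helper: create an image (in an image family) from a hardened VM's boot disk.
bake_image() {
  local image="$1" disk="$2" zone="$3" family="$4"
  gcloud compute images create "$image" \
    --source-disk="$disk" \
    --source-disk-zone="$zone" \
    --family="$family"
}

# Usage (names are placeholders):
#   bake_image sbc-hardened-v1 sbc-golden-vm us-east1-b sbc-hardened
#   gcloud compute instance-templates create sbc-template-v2 \
#       --image-family=sbc-hardened --image-project="$PROJECT_ID"   # plus the other template flags
```

Referencing a family rather than a specific image lets new templates pick up the latest baked image without changing the template command.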

Older SIP/FreeSwitch experiment

See the "REFERENCES" links at the top of the script above and read how to configure each GCP service as needed.

I based some of the design on the diagram below, from an SBC vs. SIP article I found online.

Architecture (on GCP)

Other considerations

If the example GCP architecture isn't exactly right for your case, the "ingredients" are there to configure as needed. For example, if an SBC routes traffic to a group of media servers, and the media servers then reach the SIP servers, you may have multiple private managed instance groups and multiple internal load balancers. Simply copy the examples in this experiment as needed, changing the names and, potentially, the firewall rules as required.
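To repeat the internal load balancer steps for another private tier (for example, media servers in front of SIP servers), the four ILB commands can be parameterized. A sketch assuming the network, subnet, region variables and a reserved, unused internal IP already exist; `create_ilb_tier`, its arguments and the `sip` instance group are hypothetical, the HTTP health check on port 80 is carried over from the script, and firewall rules for the new tier's tags still need to be added separately:

```bash
#!/usr/bin/env bash
# Hypothetical wrapper around the ILB commands from the script: one health
# check, backend service and forwarding rule per private instance group.
create_ilb_tier() {
  local prefix="$1" ig="$2" ip="$3" ports="$4"
  gcloud compute health-checks create http "${prefix}-hc-http" \
    --region="$GCP_REGION" --port=80
  gcloud compute backend-services create "${prefix}-backend" \
    --load-balancing-scheme=internal --protocol=tcp \
    --region="$GCP_REGION" \
    --health-checks="${prefix}-hc-http" --health-checks-region="$GCP_REGION"
  gcloud compute backend-services add-backend "${prefix}-backend" \
    --instance-group="$ig" --instance-group-region="$GCP_REGION" \
    --region="$GCP_REGION"
  gcloud compute forwarding-rules create "${prefix}-fr" \
    --region="$GCP_REGION" --load-balancing-scheme=internal \
    --network="$NETWORK_NAME" --subnet="$SUBNET_NAME" \
    --address="$ip" --ip-protocol=TCP --ports="$ports" \
    --backend-service="${prefix}-backend" --backend-service-region="$GCP_REGION"
}

# Usage (hypothetical "sip" instance group and a reserved IP in the subnet):
#   create_ilb_tier sip-ilb sip 10.1.2.98 80
```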