This is a draft list of what we're thinking about measuring in Etsy's native apps.

Currently we're looking at how to measure these things with Espresso and KIF (and whether each metric can even be measured in an automated way). We'd like to build internal dashboards and alerts around regressions in these metrics using automated tests. In the future, we'll want to measure most of these things with RUM too.

- App launch time - how long does it take between tapping the icon and being able to interact with the app?

- Time to complete critical flows - using automated testing, how long does it take a user to finish the checkout flow, etc.?

- Battery usage, including radio usage and GPS usage

- Peak memory allocation

- Frame rate - we need to figure out where we're dropping frames (and introducing scrolling jank). We should be able to dig into render, execute, and draw times.

- Memory leaks - using automated testing, can we find flows or actions that trigger a memory leak?

- An app version of Speed Index - visible completion of the above-the-fold screen over time.

- Time it takes for remote images to appear on the screen

- Time between tapping a link and being able to do something on the next screen

- Average time looking at spinners

- API performance

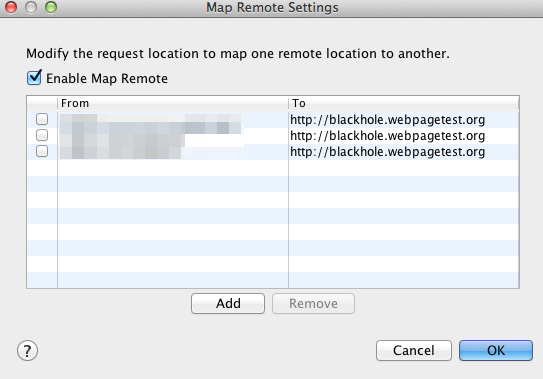

- Webview Performance
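To make the "app version of Speed Index" item a bit more concrete, here is a minimal sketch of the usual Speed Index calculation: the area above the visual-completeness curve over time. It assumes an automated test run has already produced `(time_ms, completeness)` samples for the above-the-fold screen, for example by comparing recorded frames against the final frame. How those samples get captured is the open question; the function name and sample format are illustrative assumptions, not an existing tool.

```python
def speed_index(samples):
    """Approximate Speed Index from visual-completeness samples.

    samples: list of (time_ms, completeness) tuples, sorted by time,
    where completeness is a fraction between 0.0 and 1.0.
    The sample format is an assumption for illustration only.
    """
    total = 0.0
    # Treat completeness as a step function: each interval is weighted by
    # how incomplete the screen was at the start of that interval.
    for (t0, c0), (t1, _c1) in zip(samples, samples[1:]):
        total += (t1 - t0) * (1.0 - c0)
    return total


# Hypothetical run: blank at 0 ms, 40% painted at 500 ms,
# 90% at 1000 ms, visually complete at 1500 ms.
run = [(0, 0.0), (500, 0.4), (1000, 0.9), (1500, 1.0)]
print(speed_index(run))  # about 850
```

Lower is better: a screen that paints most of its content early scores lower than one that stays blank and then paints everything at the end, even if both finish at the same time.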

There is absolutely value in aligning web performance metrics with native app performance metrics. As a team of web performance engineers, it's been valuable having a mental model of human perception of speed, an understanding of networking, etc.

That being said, our team's focus right now is gathering native app performance metrics in an automated fashion. We've been able to wrap our heads around native enough to gather some performance benchmarks using the standard tools for Android and iOS. We've used our mental models of webperf to guide us so far. :) But unlike on the web, it seems like we're in a brave new world of measuring native app performance in an automated way. Like I said in the gist, we're hoping to alert on regressions and bring these metrics into dashboards so that we can, at a glance, understand the state of performance in our apps.
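As a rough illustration of what "alert on regressions" could mean once a metric is being collected, here is a minimal sketch that compares the median of a candidate build's timings against a baseline build. The 10% tolerance, the function name, and the sample numbers are all assumptions for illustration; a real setup would need to account for device and run-to-run variance.

```python
from statistics import median


def is_regression(baseline_ms, candidate_ms, tolerance=0.10):
    """Return True if the candidate's median is more than `tolerance`
    (as a fraction) slower than the baseline's median.

    Both arguments are lists of timings in milliseconds from repeated
    automated test runs. The threshold is a made-up default.
    """
    base = median(baseline_ms)
    cand = median(candidate_ms)
    return cand > base * (1.0 + tolerance)


# Hypothetical app launch times (ms) from three runs per build.
baseline = [800, 820, 810]
print(is_regression(baseline, [950, 940, 960]))  # True
print(is_regression(baseline, [830, 825, 840]))  # False
```

Using the median rather than the mean keeps a single slow run from triggering an alert on its own.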

Guypo and Colt - can I volunteer you to write some articles on perf concepts and what's shared between apps and web? :D

In the meantime, I'd love to dig more into the actual implementation and what we should be collecting when we look at apps. To Colt's point in our email thread, we're a company in the trenches trying to figure out how to report on what our "system is doing, in a consumable way, rather than just generalizing the numeric output so that it can be shared across web/native." And we have our webperf mental models to guide us :)

In response to Guypo's smaller points:

Let me reframe the problem: we have a good grasp of how performance works on the web, and for humans. We've been able to apply that, at Etsy, to native apps - that's where the above list comes from. Once we get a canonical list in place (what am I missing that might be part of Guypo's first tier, "Conceptual perf metrics"?) then the team can work on gathering metrics in automated apps. But our problem right now is: can we measure these in an automated fashion? Can we report on these to help our product teams understand how their code changes impact the performance of the apps? I'd love to move the conversation (in this place, at least - I see tremendous value in more resources elsewhere for the Venn diagrams in which native app and web perf overlap) toward concretely talking about the how.